🗾 ITmedia AI+ (Japan)

Meta's TRIBE v2 Predicts Brain Reactions to Media

💡 Meta's new model simulates brain reactions to videos, a potential game-changer for neuro-AI research

⚡ 30-Second TL;DR

What Changed

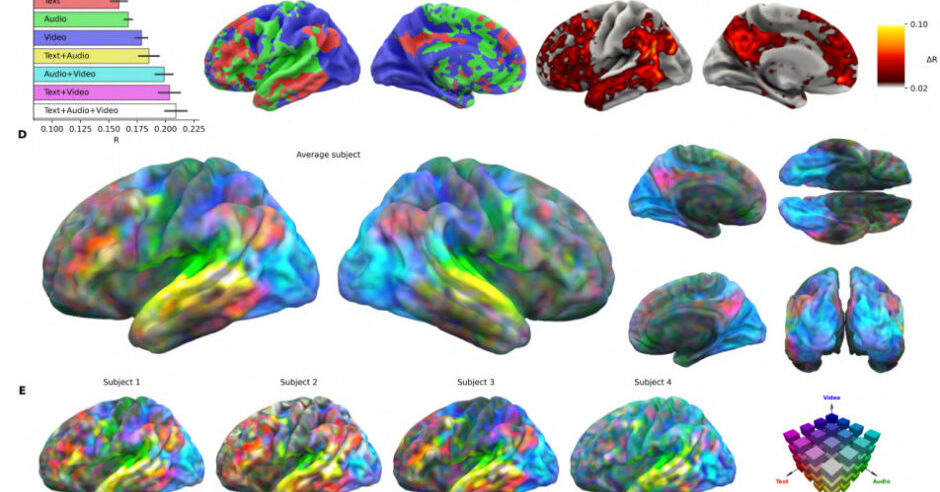

TRIBE v2 predicts brain responses to visual, audio, and language inputs.

Why It Matters

Advances AI in neuroscience, potentially accelerating brain research and HCI design. Could reduce ethical costs of human brain scanning studies.

What To Do Next

Download Meta's TRIBE v2 paper and test its brain prediction demos if available.

Who should care: Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- TRIBE v2 utilizes a self-supervised learning architecture trained on massive datasets of naturalistic stimuli, allowing it to map high-level semantic features to specific cortical regions without requiring task-specific fine-tuning.

- The model demonstrates superior cross-modal generalization, successfully predicting neural responses to unseen video-audio combinations by leveraging shared latent representations of multimodal input.

- Meta's research aims to reduce the reliance on invasive or costly fMRI/MEG data collection by providing a high-fidelity 'digital twin' of human sensory processing for rapid hypothesis testing.
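Claims about predicting responses to unseen stimuli are usually checked by comparing predicted and measured activity on held-out data. The sketch below shows one common scoring approach, a per-voxel Pearson correlation. The source does not say which metric TRIBE v2 reports, so the metric choice, the function names (`pearson`, `voxelwise_scores`), and the list-of-lists data layout are all assumptions for illustration.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant signal has no defined correlation; report 0
    return cov / math.sqrt(var_x * var_y)

def voxelwise_scores(predicted, measured):
    """Score each voxel separately.

    Both arguments are lists of time points; each time point is a list
    holding one value per voxel (hypothetical layout).
    """
    n_voxels = len(predicted[0])
    return [
        pearson([row[v] for row in predicted], [row[v] for row in measured])
        for v in range(n_voxels)
    ]
```

A score near 1 for a voxel means the predicted time course tracks the measured one closely. Scores near 0 or below mean the prediction carries little or misleading information for that voxel.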

🛠️ Technical Deep Dive

- Architecture: Employs a transformer-based backbone that integrates pre-trained vision (e.g., DINOv2) and audio-language encoders to extract hierarchical features.

- Training Objective: Uses a contrastive learning framework to align multimodal inputs with neural activity patterns recorded from large-scale neuroimaging datasets.

- Decoding Mechanism: Implements a linear readout layer that maps the model's internal latent space to voxel-wise brain activity, optimized for spatial and temporal resolution.

- Input Modalities: Processes synchronized video frames, audio waveforms, and text transcripts to simulate the multisensory integration occurring in the human brain.
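The training objective and readout described in the list above can be illustrated with a minimal sketch. It is not Meta's code. It assumes an InfoNCE-style loss as the contrastive objective and a plain affine map as the linear readout. The names `info_nce` and `linear_readout`, the temperature value, and the toy dimensions are hypothetical.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(dot(v, v))
    return [a / norm for a in v]

def info_nce(stim_embs, brain_embs, temperature=0.1):
    """InfoNCE loss: stimulus i should match brain embedding i, not the others."""
    s = [normalize(v) for v in stim_embs]
    b = [normalize(v) for v in brain_embs]
    total = 0.0
    for i, s_i in enumerate(s):
        logits = [dot(s_i, b_j) / temperature for b_j in b]
        m = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_z = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_z - logits[i]  # negative log-probability of the true pair
    return total / len(s)

def linear_readout(latent, weights, bias):
    """Map one latent vector to voxel values: one weight row and bias per voxel."""
    return [dot(row, latent) + b for row, b in zip(weights, bias)]
```

The loss is small when each stimulus embedding is closest to its own brain embedding and large when pairs are mismatched. The readout has one weight row per voxel, so the number of rows sets the number of predicted voxels.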

🔮 Future Implications

AI analysis grounded in cited sources

TRIBE v2 will significantly reduce the number of human subjects required for early-stage cognitive neuroscience studies.

By enabling in-silico simulations, researchers can filter hypotheses and refine experimental designs before committing to expensive and time-consuming human neuroimaging trials.
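One way to picture this kind of in-silico screening is to rank candidate stimuli by their predicted response in a region of interest and send only the top candidates to a real scanning session. The sketch below is illustrative only. The `predict` callable stands in for a trained brain-response model, and the function name `rank_stimuli`, the feature vectors, and the voxel indices are hypothetical.

```python
def rank_stimuli(predict, stimuli, roi_voxels, top_k=2):
    """Return the names of the top_k stimuli by mean predicted ROI response.

    predict:    callable mapping a feature vector to a list of voxel values
                (a stand-in for a trained model; an assumption here)
    stimuli:    dict of stimulus name -> feature vector
    roi_voxels: indices of the voxels that make up the region of interest
    """
    scored = []
    for name, features in stimuli.items():
        response = predict(features)
        roi_mean = sum(response[v] for v in roi_voxels) / len(roi_voxels)
        scored.append((roi_mean, name))
    scored.sort(reverse=True)  # highest predicted response first
    return [name for _, name in scored[:top_k]]
```

In practice the shortlist would still need confirmation with real recordings, since the ranking is only as good as the model's predictions.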

The model will be integrated into future brain-computer interface (BCI) development pipelines.

The ability to accurately predict neural responses to external stimuli provides a foundational framework for improving the decoding accuracy of non-invasive BCI systems.

⏳ Timeline

2024-05

Meta releases initial research on multimodal foundation models for neural decoding.

2025-09

Meta publishes preliminary findings on the scalability of TRIBE architecture for sensory prediction.

2026-03

Meta officially unveils TRIBE v2 and the associated paper on in-silico neuroscience.



Original source: ITmedia AI+ (Japan)