🛠️Meta Engineering Blog•Stalecollected in 31m

Meta's LLM-Scale Adaptive Ads Ranking

💡Meta's method to serve LLM-scale models in ads recsys—key inference efficiency hack.

⚡ 30-Second TL;DR

What Changed

Scales Ads Recommender to LLM-scale complexity

Why It Matters

Demonstrates production-scale LLM serving in recsys, offering blueprint for efficient large-model deployment in high-throughput apps. Influences ad tech and personalized recommendation strategies for AI builders.

What To Do Next

Read Meta Engineering Blog to implement adaptive ranking for your LLM recsys inference.

Who should care:Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

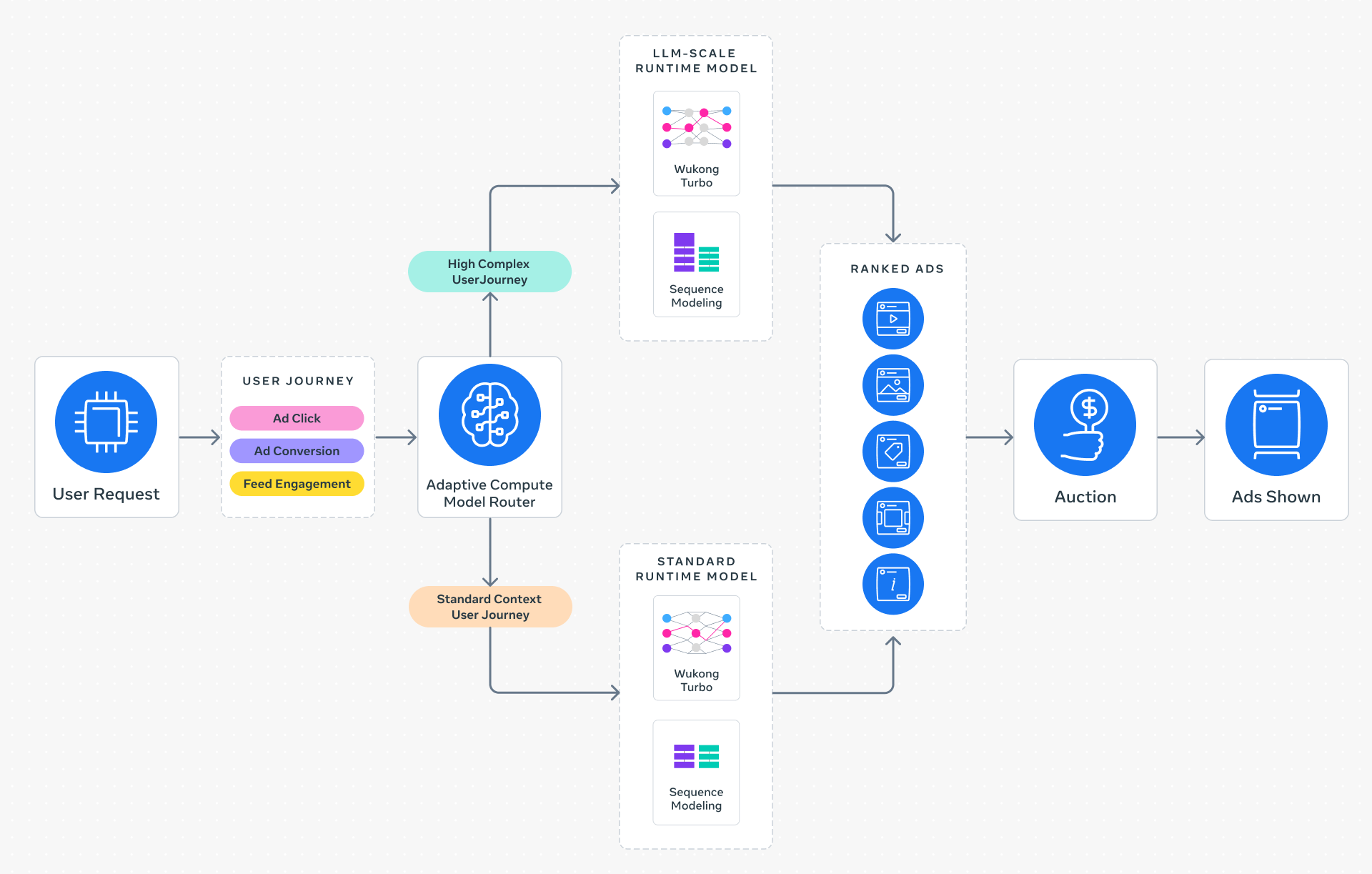

- •Meta utilizes a multi-stage ranking architecture where the Adaptive Ranking Model acts as a high-capacity 'late-stage' ranker, processing candidate sets refined by lighter-weight retrieval models.

- •The 'bending of the inference scaling curve' is achieved through dynamic compute allocation, where the model adjusts its active parameter count or depth based on the predicted value of the specific ad impression.

- •This architecture leverages specialized hardware acceleration, likely utilizing custom MTIA (Meta Training and Inference Accelerator) silicon to handle the increased memory bandwidth requirements of LLM-scale embedding tables.

📊 Competitor Analysis▸ Show

| Feature | Meta (Adaptive Ranking) | Google (Ads AI) | TikTok (Recommendation Engine) |

|---|---|---|---|

| Core Approach | LLM-scale adaptive compute | Transformer-based multi-task learning | Real-time interest graph modeling |

| Inference Strategy | Dynamic compute allocation | TPU-optimized batch inference | High-throughput stream processing |

| Benchmarking | Focus on conversion lift/ROAS | Focus on latency/query volume | Focus on engagement/retention |

🛠️ Technical Deep Dive

- Dynamic Compute Allocation: Implements a gating mechanism that routes complex queries to deeper model layers while routing simpler queries to shallower paths to minimize latency.

- Embedding Table Compression: Uses advanced quantization and embedding pruning techniques to fit massive user-interest feature sets into GPU/MTIA memory.

- Multi-Task Learning (MTL): The model is trained simultaneously on multiple objectives (e.g., click-through rate, conversion rate, and post-click value) to ensure holistic optimization.

- Inference Optimization: Utilizes custom kernels for sparse operations, which are critical for processing the high-cardinality categorical features inherent in ad recommendation systems.

🔮 Future ImplicationsAI analysis grounded in cited sources

Meta will transition all major ad ranking stages to LLM-based architectures by 2027.

The efficiency gains from adaptive compute make the performance-to-cost ratio of LLM-scale models viable for the entire ranking pipeline.

Ad-tech competitors will shift focus from model size to 'compute-per-query' efficiency metrics.

Meta's success in bending the scaling curve forces the industry to prioritize inference cost-optimization over raw parameter count.

⏳ Timeline

2022-05

Meta introduces the 'Recommendation AI' initiative to unify ML infrastructure across Facebook and Instagram.

2023-09

Meta announces the first generation of MTIA (Meta Training and Inference Accelerator) for internal workloads.

2024-04

Meta releases Llama 3, signaling a shift toward integrating generative AI capabilities into core product ranking systems.

2025-06

Meta deploys second-generation MTIA chips specifically optimized for high-bandwidth recommendation model inference.

2026-02

Meta begins rolling out the Adaptive Ranking Model to production ad traffic on Instagram.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: Meta Engineering Blog ↗