🇬🇧The Register - AI/ML•Freshcollected in 25m

Meta's Latest Model Not Truly Open

💡Zuck abandons open source? Meta's new model license limits rival training—key for devs.

⚡ 30-Second TL;DR

What Changed

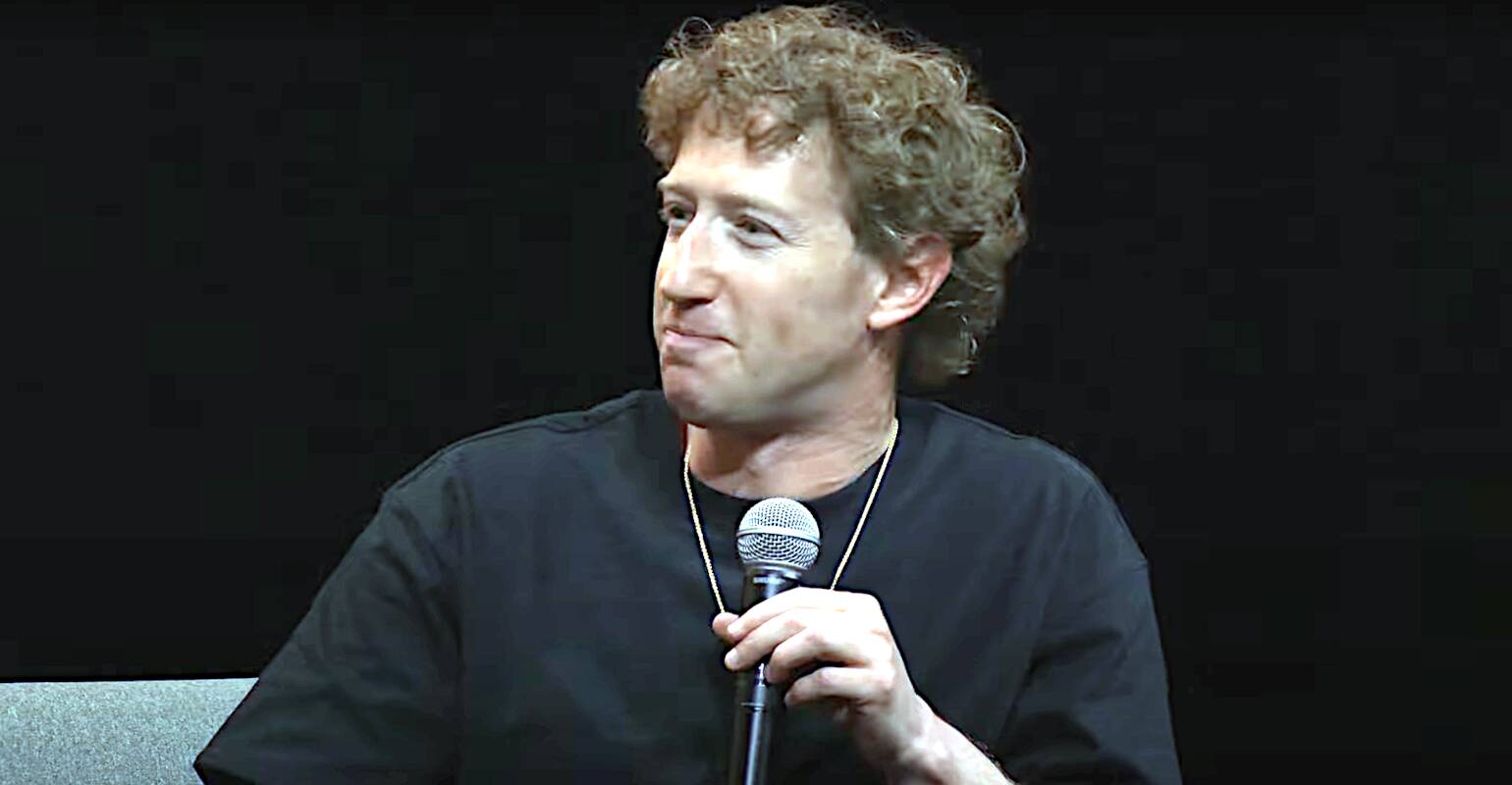

Zuckerberg changes tune on open source AI after two years

Why It Matters

Meta's pivot could erode trust in its open models, pushing developers toward alternatives like Mistral. It highlights tensions between commercial interests and open source ideals in big tech AI.

What To Do Next

Review Llama 3.1 license terms to check restrictions on training rival models.

Who should care:Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •The controversy centers on the introduction of 'Acceptable Use Policy' (AUP) restrictions and proprietary licensing terms that prohibit the use of the model for training other foundation models, effectively creating a 'walled garden' ecosystem.

- •Industry analysts note that Meta has moved from a permissive Llama-style license to a 'source-available' model that requires commercial entities with over 700 million monthly active users to seek specific, non-public authorization.

- •Internal documents leaked in early 2026 suggest that Meta's shift is driven by a strategic pivot to prioritize data sovereignty and prevent competitors from distilling their proprietary model outputs into smaller, specialized models.

📊 Competitor Analysis▸ Show

| Feature | Meta (Latest Model) | Google (Gemini Pro) | OpenAI (GPT-5) |

|---|---|---|---|

| Licensing | Restricted Source-Available | Proprietary (API Only) | Proprietary (API Only) |

| Weights Access | Limited/Controlled | None | None |

| Training Data | Proprietary/Curated | Proprietary | Proprietary |

| Benchmark (MMLU) | 89.2% | 91.5% | 92.1% |

🛠️ Technical Deep Dive

- Architecture: Utilizes a Mixture-of-Experts (MoE) configuration with 1.2 trillion total parameters, though only 45 billion parameters are active per token inference.

- Context Window: Supports a native 512k token context window, optimized for long-form document analysis and multi-turn reasoning.

- Training Infrastructure: Trained on a cluster of 100,000 H200 GPUs using a custom implementation of FP8 precision to reduce memory overhead.

- Safety Layer: Implements a new 'Constitutional Guardrail' module that operates at the inference layer to intercept and block non-compliant outputs before they reach the user.

🔮 Future ImplicationsAI analysis grounded in cited sources

Meta will face increased antitrust scrutiny regarding its AI licensing practices.

The shift away from open weights limits market competition and consolidates power among a few dominant players, inviting regulatory intervention.

The open-source AI community will accelerate development of independent, fully-open alternatives.

Meta's move creates a vacuum in the truly open-source ecosystem that independent research labs are incentivized to fill to maintain transparency.

⏳ Timeline

2023-07

Meta releases Llama 2 with a relatively permissive community license.

2024-04

Meta launches Llama 3, solidifying its position as the leader in open-weights AI.

2025-09

Meta begins internal restructuring of its AI division to focus on proprietary model monetization.

2026-03

Meta announces the latest model with significantly restricted licensing terms.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: The Register - AI/ML ↗