📊 Bloomberg Technology • Fresh • collected in 74m

Meta's $25B AI Infra Bond Sale

💡 Meta's $25B AI infrastructure bet fuels the arms race; watch for pricing ripples

⚡ 30-Second TL;DR

What Changed

Meta is targeting a $20-25B investment-grade bond sale.

Why It Matters

Meta's fundraising underscores its commitment to AI leadership. It could accelerate the company's model training capacity and intensify competition in AI infrastructure, and the added scale could lower barriers for AI practitioners.

What To Do Next

Monitor Meta's investor updates for AI capex breakdowns to forecast GPU supply shifts.

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The bond issuance is structured across multiple tranches with varying maturities, reflecting Meta's strategy of locking in long-term capital to fund the capital expenditure required by its Llama model training clusters.

- This debt financing follows a broader trend among Big Tech firms of tapping low-interest debt markets to sustain aggressive AI infrastructure build-outs, specifically the acquisition of high-end NVIDIA H100/B200 GPU clusters and custom silicon development.

- Credit rating agencies have maintained Meta's strong investment-grade status, citing the company's robust free cash flow generation and relatively low debt-to-EBITDA ratio as key factors supporting the debt load.
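To make the idea of a multi-tranche structure concrete, here is a minimal sketch of how the weighted-average maturity and annual interest cost of such an issuance could be computed. The tranche sizes, maturities, and coupons below are purely hypothetical placeholders chosen to sum to $25B; they are not Meta's actual deal terms, which the source does not provide. The `Tranche` class and both function names are likewise illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tranche:
    maturity_years: int   # years until the tranche matures (hypothetical)
    principal_bn: float   # principal in USD billions (hypothetical)
    coupon_pct: float     # annual coupon rate in percent (hypothetical)


def weighted_average_maturity(tranches):
    """Principal-weighted average maturity, in years."""
    total = sum(t.principal_bn for t in tranches)
    return sum(t.maturity_years * t.principal_bn for t in tranches) / total


def annual_interest_bn(tranches):
    """Total annual coupon payments, in USD billions."""
    return sum(t.principal_bn * t.coupon_pct / 100 for t in tranches)


# Illustrative numbers only, NOT the real tranche breakdown.
EXAMPLE = [
    Tranche(maturity_years=5, principal_bn=5.0, coupon_pct=4.0),
    Tranche(maturity_years=10, principal_bn=10.0, coupon_pct=5.0),
    Tranche(maturity_years=30, principal_bn=10.0, coupon_pct=6.0),
]

if __name__ == "__main__":
    print(f"Weighted-average maturity: {weighted_average_maturity(EXAMPLE):.1f} years")
    print(f"Annual interest cost: ${annual_interest_bn(EXAMPLE):.2f}B")
```

Shifting principal toward the longer tranches raises the weighted-average maturity and, when long-dated coupons are higher, the annual interest cost. That is the trade-off an issuer accepts when locking in long-term capital.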

📊 Competitor Analysis

| Feature | Meta (AI Infra Debt) | Microsoft (AI Infra Debt) | Alphabet (AI Infra Debt) |
|---|---|---|---|
| Primary Use | Llama Training/Inference | Azure AI/OpenAI Partnership | Gemini/TPU Infrastructure |
| Debt Strategy | Opportunistic Bond Issuance | Massive Corporate Bond Sales | Conservative/Cash-heavy |
| Hardware Focus | Custom Silicon/NVIDIA | NVIDIA/Maia Chips | TPU/NVIDIA |
| Market Position | Aggressive Scaling | Ecosystem Integration | Vertical Integration |

🛠️ Technical Deep Dive

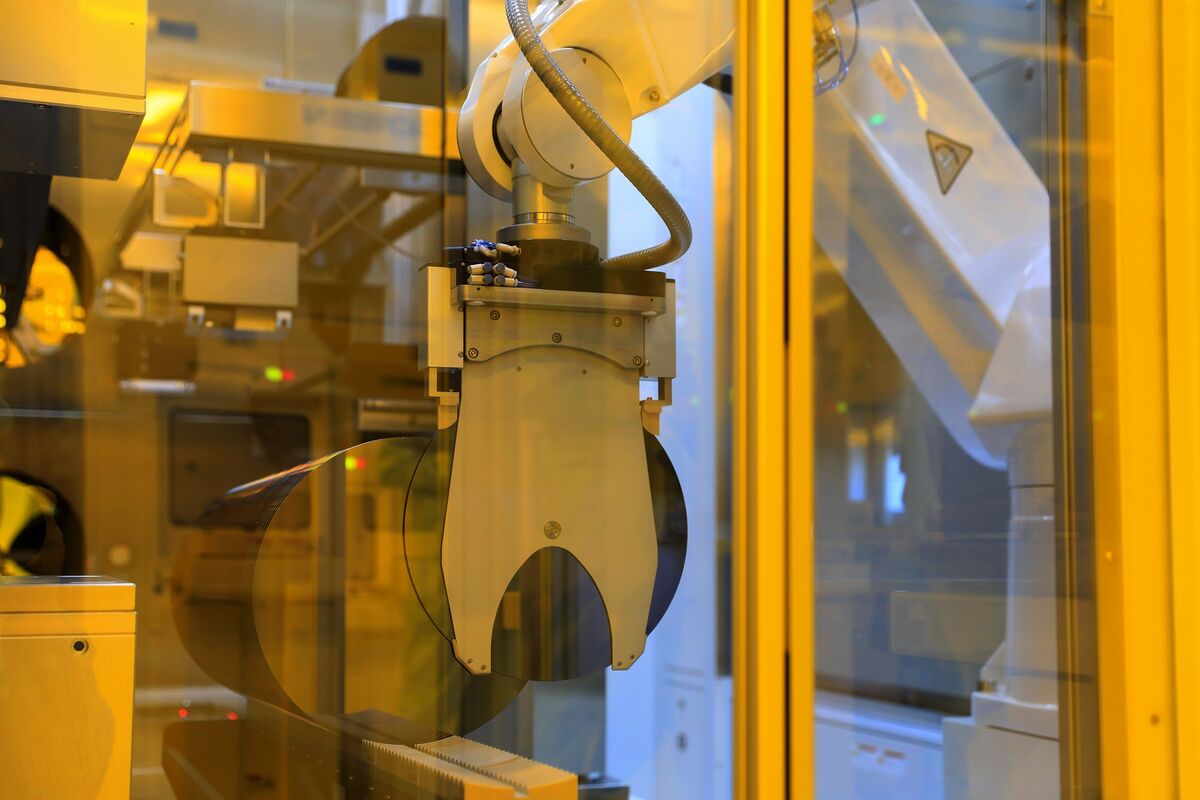

- Compute Infrastructure: The capital is earmarked for the deployment of massive GPU clusters, specifically targeting the expansion of the 'Grand Teton' open-source server platform.

- Networking: Investment in high-bandwidth, low-latency RDMA-based networking fabrics (RoCE) to support distributed training of models exceeding 1 trillion parameters.

- Data Center Design: Funding for liquid-cooled data center facilities optimized for high-density power consumption required by next-generation AI accelerators.

- Custom Silicon: Continued R&D and production scaling of Meta Training and Inference Accelerator (MTIA) chips to reduce reliance on third-party GPU vendors.

🔮 Future Implications

AI analysis grounded in cited sources

Meta's capital expenditure will exceed $40 billion annually by 2027.

The scale of this bond issuance indicates a long-term commitment to infrastructure spending that outpaces current operational cash flow allocations.

Meta will achieve greater inference cost efficiency through proprietary silicon.

The dedicated infrastructure funding allows for the accelerated deployment of MTIA chips, which are designed to lower the cost-per-token for Llama model inference.

⏳ Timeline

2023-02

Meta announces the formation of a dedicated Generative AI product team.

2023-07

Meta releases Llama 2, marking a shift toward an open-weights model strategy.

2024-04

Meta launches Llama 3, trained on two 24k-GPU clusters, while targeting a fleet of roughly 350k H100 GPUs by the end of 2024.

2025-01

Meta reports record-high capital expenditures driven by AI data center construction.

2026-04

Meta announces $20-25 billion bond sale to fund AI infrastructure.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: Bloomberg Technology ↗