🔥 36氪 (36Kr)

LiblibAI Unveils Agentic AI Video Platform

💡 Agent-native video platform draws 100k+ visits on day one: a new base for building next-gen AI content tools

⚡ 30-Second TL;DR

What Changed

Launch: LibTV AI video platform by LiblibAI

Why It Matters

LibTV brings agentic workflows to video generation, which could speed up AI content-creation tooling and lower the barrier to automated video production in apps.

What To Do Next

Register on LibTV and experiment with agent scheduling for video pipelines.

Who should care: Creators & Designers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- LiblibAI, originally a prominent Chinese community platform for sharing Stable Diffusion models and LoRAs, is pivoting from a model-hosting hub to an end-to-end generative video production ecosystem.

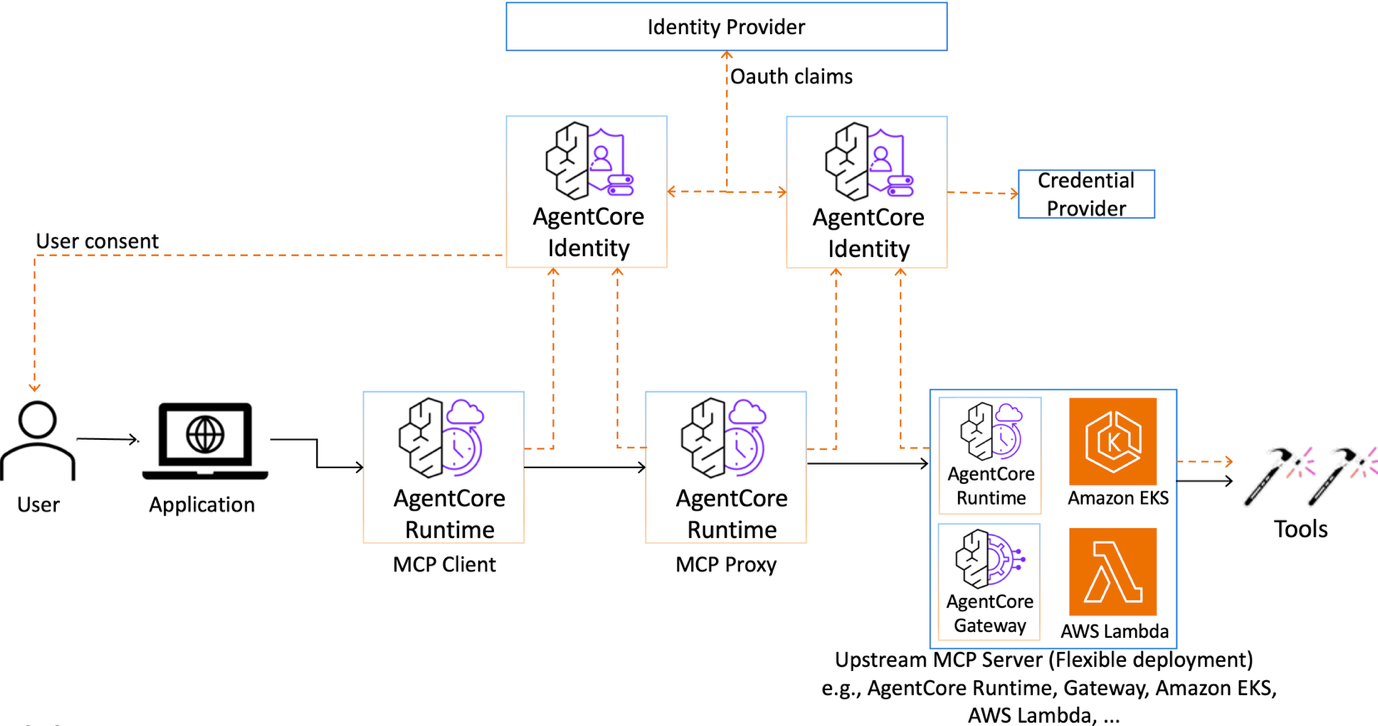

- The agentic architecture uses a proprietary orchestration layer that lets autonomous agents chain multi-step video generation tasks, such as script-to-video, character-consistency maintenance, and automated editing, without human intervention.

- The platform's infrastructure is optimized for high-concurrency inference, running on a distributed GPU cluster tuned for the low-latency demands of agent-driven video generation workflows.
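The orchestration described above amounts to passing one job's state through a fixed sequence of agents, each consuming the previous agent's output. A minimal sketch of that chaining pattern follows, assuming each agent is a plain function that takes and returns a shared job record. The agent names mirror the Director/Visual/Editor roles in the Technical Deep Dive; the `VideoJob` fields, the character reference ID, and the placeholder logic inside each agent are hypothetical, since the source does not describe how LiblibAI's proprietary orchestration layer is implemented.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical job state shared by all agents; field names are illustrative only.
@dataclass
class VideoJob:
    prompt: str
    script: List[str] = field(default_factory=list)     # scene descriptions
    character_ref: str = ""                              # reference reused to keep a character consistent
    clips: List[str] = field(default_factory=list)      # generated clip identifiers
    final_cut: List[str] = field(default_factory=list)  # clips in their edited order
    log: List[str] = field(default_factory=list)

Agent = Callable[[VideoJob], VideoJob]

def director_agent(job: VideoJob) -> VideoJob:
    # Placeholder for an LLM scripting step: one scene per sentence of the prompt.
    job.script = [s.strip() for s in job.prompt.split(".") if s.strip()]
    job.character_ref = "char-ref-0"  # hypothetical reference ID
    job.log.append(f"director: {len(job.script)} scenes")
    return job

def visual_agent(job: VideoJob) -> VideoJob:
    # Placeholder for video generation: every clip is tagged with the same
    # character reference, standing in for character-consistency conditioning.
    job.clips = [f"clip{i}[{job.character_ref}]" for i, _ in enumerate(job.script)]
    job.log.append(f"visual: {len(job.clips)} clips")
    return job

def editor_agent(job: VideoJob) -> VideoJob:
    # Placeholder for post-production: sequence clips in script order.
    job.final_cut = list(job.clips)
    job.log.append("editor: sequenced")
    return job

def run_pipeline(job: VideoJob, agents: List[Agent]) -> VideoJob:
    # Chain the agents with no human step in between.
    for agent in agents:
        job = agent(job)
    return job

if __name__ == "__main__":
    result = run_pipeline(
        VideoJob("A cat wakes up. It chases a ball. It naps."),
        [director_agent, visual_agent, editor_agent],
    )
    print(result.final_cut)
    print(result.log)
```

Because each agent only reads and writes the shared job record, stages can be swapped or reordered without touching the runner; a production system would likely also add retries and checkpoints between steps.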

📊 Competitor Analysis

| Feature | LiblibAI (LibTV) | Kling AI | Runway Gen-3 |
|---|---|---|---|
| Primary Focus | Agent-orchestrated workflows | High-fidelity cinematic generation | Creative suite/professional editing |
| Agent Integration | Native, API-first for agents | Limited/experimental | Plugin-based |
| Pricing Model | Freemium/token-based | Subscription/credit-based | Subscription/credit-based |
| Core Strength | Workflow automation | Motion realism | Artistic control |

🛠️ Technical Deep Dive

- Architecture: A multi-agent framework in which specialized agents handle distinct tasks: 'Director Agent' (scripting/storyboarding), 'Visual Agent' (image/video generation), and 'Editor Agent' (post-production/sequencing).

- API Integration: Exposes a RESTful API that allows external AI agents to trigger video generation pipelines, supporting asynchronous job status polling and webhook callbacks.

- Model Foundation: A hybrid architecture that combines open-source diffusion models (Stable Diffusion/Flux) with proprietary temporal-consistency modules for video stability.

- Inference Optimization: Implements custom CUDA kernels for faster video frame interpolation and memory-efficient VRAM management to handle long-form video generation requests.
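Because a video generation job can run for minutes, an asynchronous API of the kind described typically returns a job ID right away and lets the caller check back for the result. A minimal client-side polling sketch with capped exponential backoff follows. It assumes a status record with a `state` field whose terminal values are `succeeded` or `failed`, fetched by a caller-supplied function (for example, a wrapper around a hypothetical `GET /jobs/{job_id}` endpoint). All of these names are assumptions; the source does not document LibTV's actual endpoints or status values.

```python
import time
from typing import Callable, Dict

# Hypothetical terminal states; LibTV's real status values are not documented in the source.
TERMINAL_STATES = {"succeeded", "failed"}

def poll_job(
    get_status: Callable[[str], Dict[str, str]],
    job_id: str,
    initial_delay: float = 1.0,
    max_delay: float = 30.0,
    max_attempts: int = 20,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, str]:
    """Poll a long-running job until it reaches a terminal state.

    get_status is any function returning the job's current status record,
    e.g. a wrapper around a hypothetical GET /jobs/{job_id} endpoint.
    """
    delay = initial_delay
    for attempt in range(1, max_attempts + 1):
        status = get_status(job_id)
        if status.get("state") in TERMINAL_STATES:
            return status
        if attempt < max_attempts:
            sleep(delay)
            delay = min(delay * 2, max_delay)  # exponential backoff, capped at max_delay
    raise TimeoutError(f"job {job_id} not finished after {max_attempts} polls")

if __name__ == "__main__":
    # Fake backend: reports "queued", then "running", then "succeeded".
    states = iter(["queued", "running", "succeeded"])

    def fake_get_status(job_id: str) -> Dict[str, str]:
        return {"id": job_id, "state": next(states)}

    print(poll_job(fake_get_status, "job-123", sleep=lambda seconds: None))
```

Where webhook callbacks are configured, the server notifies the caller when the job finishes, so polling becomes a fallback. Injecting `get_status` and `sleep` keeps the loop testable without network access or real waiting.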
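Frame interpolation synthesizes in-between frames to raise the frame rate or smooth motion. The simplest baseline is a per-pixel linear blend of two neighboring frames, sketched here in plain Python on small grayscale frames. This is a swapped-in illustration, not LibTV's method: the source does not say which interpolation technique its CUDA kernels implement, and production interpolators usually estimate motion (for example with optical flow), because plain blending produces ghosting on moving objects. The `Frame` type and function names are hypothetical.

```python
from typing import List

# A grayscale frame represented as rows of pixel intensities (illustrative only).
Frame = List[List[float]]

def lerp_frame(a: Frame, b: Frame, t: float) -> Frame:
    """Per-pixel linear blend: (1 - t) * a + t * b, for 0 <= t <= 1."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be in [0, 1]")
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("frames must have the same shape")
    return [[(1.0 - t) * pa + t * pb for pa, pb in zip(ra, rb)] for ra, rb in zip(a, b)]

def interpolate(frames: List[Frame], factor: int) -> List[Frame]:
    """Insert factor - 1 blended frames between each consecutive pair of frames."""
    if factor < 1:
        raise ValueError("factor must be >= 1")
    out: List[Frame] = []
    for a, b in zip(frames, frames[1:]):
        out.append(a)
        for k in range(1, factor):
            out.append(lerp_frame(a, b, k / factor))
    if frames:
        out.append(frames[-1])  # keep the last original frame
    return out

if __name__ == "__main__":
    clip = [[[0.0, 10.0]], [[4.0, 30.0]]]  # two 1x2 frames
    for frame in interpolate(clip, 4):
        print(frame)
```

Each output pixel depends only on the two input pixels at the same position, so this kind of operation maps naturally onto one GPU thread per pixel; whether LibTV's kernels are structured this way is not stated in the source.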

🔮 Future Implications

AI analysis grounded in cited sources

LiblibAI is likely to move toward a B2B SaaS model for enterprise marketing automation.

The focus on agentic workflows suggests a shift toward providing automated video production tools for corporate marketing teams rather than just serving individual hobbyists.

The platform may integrate with major Chinese e-commerce platforms by Q4 2026.

The agentic capability appears well suited to generating product-focused video content, a high-demand use case for platforms such as Taobao and Douyin.

⏳ Timeline

2023-05

LiblibAI launches as a community-driven platform for Stable Diffusion model sharing.

2024-09

LiblibAI expands infrastructure to support cloud-based model training and inference.

2026-03

LiblibAI launches LibTV, introducing agentic video generation capabilities.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: 36氪 (36Kr)