🗾 ITmedia AI+ (Japan)

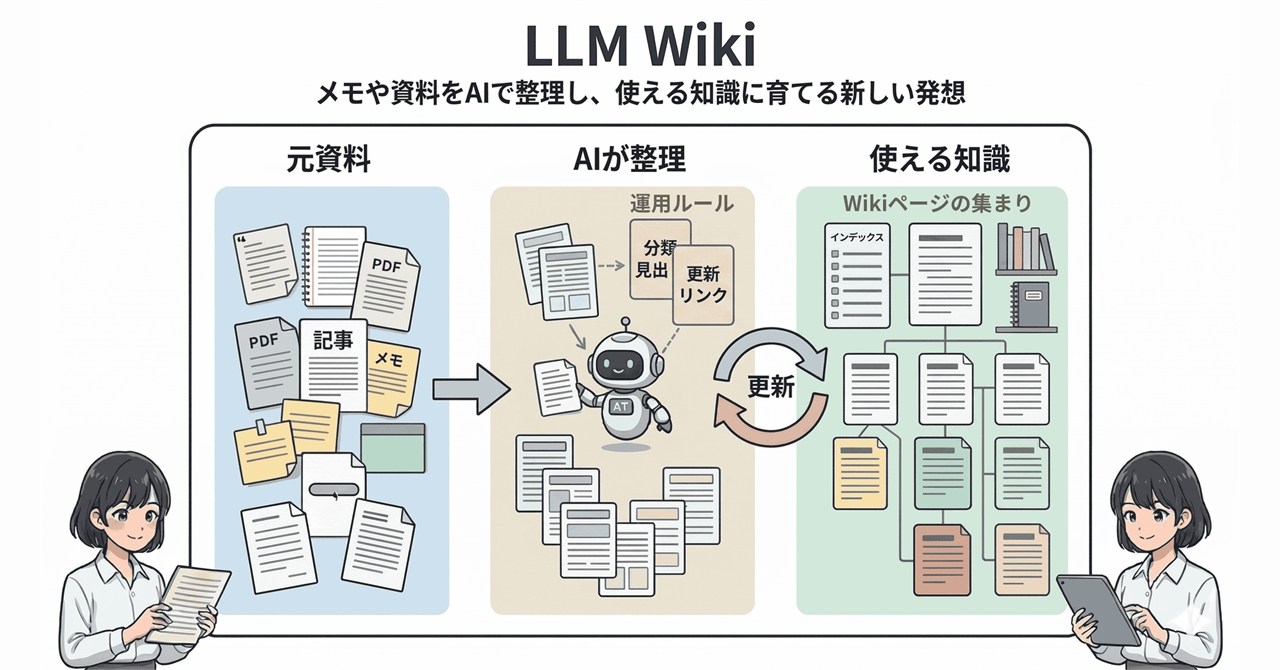

Karpathy's LLM Wiki Organizes Notes into AI Knowledge

💡 Karpathy's 5k-star LLM Wiki: AI turns notes into a knowledge base, unlike RAG (explore tools now)

⚡ 30-Second TL;DR

What Changed

AI expert Andrej Karpathy proposed the LLM Wiki concept, which has drawn more than 5,000 GitHub stars.

Why It Matters

LLM Wiki offers a novel approach to personal knowledge management, potentially boosting productivity for AI devs handling scattered notes. It challenges RAG paradigms, fostering community-driven innovations in LLM apps.

What To Do Next

Clone Karpathy's LLM Wiki GitHub repo and test organizing your research notes into a personal wiki.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The 'LLM Wiki' concept is heavily influenced by the philosophy behind Andrej Karpathy's 'llama2.c' and 'minGPT': local, lightweight, and transparent implementations of LLM-based knowledge management rather than reliance on massive, opaque cloud-based SaaS platforms.

- Unlike standard RAG (Retrieval-Augmented Generation), which performs real-time vector similarity search on raw chunks, the LLM Wiki approach focuses on an 'indexing-as-synthesis' pipeline that uses LLMs to rewrite, interlink, and structure raw notes into a coherent graph-based knowledge base before query time.

- The project leverages local embedding models (such as those from the Hugging Face ecosystem) and local vector databases (like ChromaDB or FAISS) to ensure data privacy, allowing users to maintain a 'second brain' entirely offline.
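To make the offline retrieval idea in the last takeaway concrete, here is a minimal sketch of a local similarity index. It is not the project's code: `embed` is a toy bag-of-words stand-in for a real local embedding model, and `LocalIndex` is a hypothetical in-memory substitute for a vector database such as ChromaDB or FAISS. Nothing leaves the machine, which is the property the takeaway highlights.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for a local embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LocalIndex:
    """Hypothetical in-memory vector store; everything stays in process."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, note_id: str, text: str) -> None:
        self.items.append((note_id, embed(text)))

    def search(self, query: str, k: int = 3) -> list[tuple[str, float]]:
        """Return the k most similar notes as (note_id, score) pairs."""
        q = embed(query)
        scored = [(cosine(q, vec), note_id) for note_id, vec in self.items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [(note_id, score) for score, note_id in scored[:k]]


index = LocalIndex()
index.add("attention.md", "attention layers weigh tokens in a transformer")
index.add("tokenizer.md", "byte pair encoding splits text into tokens")
index.add("garden.md", "tomato plants need water and sun")

top = index.search("how does a transformer use attention", k=1)
print(top[0][0])
```

In a real setup the `embed` function would be replaced by a call to a locally loaded embedding model, and the linear scan in `search` by the vector database's own nearest-neighbour query.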

📊 Competitor Analysis

| Feature | LLM Wiki (Karpathy-inspired) | Obsidian (with Smart Connections) | Notion AI |
|---|---|---|---|
| Architecture | Local-first, graph-based | Local-first, plugin-based | Cloud-native, SaaS |
| Pricing | Open source (free) | Freemium (plugin costs vary) | Subscription |
| Key strength | Transparency and privacy | Extensibility | Ease of use |

🛠️ Technical Deep Dive

- Pipeline Architecture: Utilizes a multi-stage pipeline: (1) Ingestion of markdown/text files, (2) Semantic chunking with overlap, (3) LLM-driven summarization and entity extraction, (4) Vector embedding generation, and (5) Graph construction for inter-document linking.

- Embedding Models: Typically defaults to sentence-transformers (e.g., all-MiniLM-L6-v2) for local execution.

- Knowledge Graph Integration: Uses LLMs to identify relationships between entities across disparate notes, creating a structured JSON or graph database format (e.g., Neo4j or simple adjacency lists) to improve retrieval context beyond simple vector similarity.

- Context Window Management: Implements recursive summarization to fit large note collections into the context window of smaller, local LLMs (e.g., Llama 3 or Mistral variants).
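The pipeline stages above can be sketched in a few functions. This is an illustrative outline under stated assumptions, not the project's implementation: `extract_entities` and `summarize` are hypothetical stand-ins for LLM calls (a fixed-vocabulary match and a simple word truncation), chunking is done on words rather than on semantic boundaries, and the graph is a plain adjacency list rather than a Neo4j database.

```python
from collections import defaultdict
from itertools import combinations


def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into word windows of `size` that share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def extract_entities(text: str, vocabulary: set[str]) -> set[str]:
    """Hypothetical stand-in for LLM entity extraction: match a fixed vocabulary."""
    lowered = text.lower()
    return {term for term in vocabulary if term in lowered}


def build_graph(notes: dict[str, str], vocabulary: set[str]) -> dict[str, list[str]]:
    """Adjacency list that links two notes when they share at least one entity."""
    entities = {name: extract_entities(body, vocabulary) for name, body in notes.items()}
    graph = defaultdict(set)
    for a, b in combinations(sorted(notes), 2):
        if entities[a] & entities[b]:
            graph[a].add(b)
            graph[b].add(a)
    return {name: sorted(graph[name]) for name in notes}


def summarize(text: str, max_words: int) -> str:
    """Hypothetical stand-in for an LLM summary call: keep the first max_words words."""
    return " ".join(text.split()[:max_words])


def recursive_summary(texts: list[str], budget_words: int, group_size: int = 2) -> str:
    """Summarize in groups, then summarize the summaries, until one text remains."""
    if group_size < 2:
        raise ValueError("group_size must be at least 2")
    level = [summarize(t, budget_words) for t in texts]
    while len(level) > 1:
        level = [
            summarize(" ".join(level[i:i + group_size]), budget_words)
            for i in range(0, len(level), group_size)
        ]
    return level[0] if level else ""


notes = {
    "gpt.md": "GPT models stack transformer blocks with attention.",
    "rag.md": "RAG retrieves chunks by embedding similarity.",
    "vit.md": "Vision models also use a transformer backbone.",
}
chunks = chunk_words("a b c d e f g h i j", size=4, overlap=1)
graph = build_graph(notes, vocabulary={"transformer", "embedding"})
summary = recursive_summary(list(notes.values()), budget_words=8)
print(chunks, graph, summary, sep="\n")
```

The recursive step is what keeps the input to each summary call bounded: no single call sees more than `group_size * budget_words` words, so the same loop works for a small local model regardless of how many notes are in the collection.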

🔮 Future Implications

AI analysis grounded in cited sources.

Personal Knowledge Management (PKM) will shift from manual tagging to automated semantic graph generation.

The automation of inter-document linking reduces the cognitive load of maintaining a wiki, making structured knowledge bases accessible to non-technical users.

Local-first AI tools will capture significant market share from cloud-based note-taking platforms.

Growing concerns over data privacy and the ability to run high-performance models locally on consumer hardware favor the LLM Wiki architecture.

⏳ Timeline

2023-09

Karpathy publishes 'Intro to Large Language Models' video, sparking interest in local LLM applications.

2024-02

Initial community-driven GitHub repositories emerge implementing Karpathy's concepts for local knowledge indexing.

2025-11

LLM Wiki project reaches the 5,000 GitHub star milestone following integration with popular local LLM runners.


Original source: ITmedia AI+ (Japan)