💰 钛媒体 • Fresh • collected in 28m

Jensen Huang Starting to Worry

💡 Is Nvidia's 'shovel seller' era ending? A strategy shift could affect AI infrastructure costs

⚡ 30-Second TL;DR

What Changed

Jensen Huang is reportedly growing anxious about Nvidia's market position.

Why It Matters

Signals potential vulnerabilities in Nvidia's moat, urging AI firms to diversify compute sources. Practitioners should monitor GPU pricing volatility.

What To Do Next

Benchmark alternative inference chips, such as Groq chips, against the Nvidia A100 to quantify potential cost savings.
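One way to make such a benchmark comparable across vendors is to normalize each result to cost per million generated tokens. The sketch below is a minimal illustration under stated assumptions: the `BenchmarkResult` type, the device names, and every number are hypothetical placeholders, not measurements of any real chip. Replace them with throughput you measure on your own workload and the prices you actually pay.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkResult:
    """One measured run on one accelerator (all fields supplied by you)."""
    name: str
    tokens_per_second: float  # sustained throughput measured on your workload
    hourly_cost_usd: float    # price paid per accelerator-hour


def cost_per_million_tokens(result: BenchmarkResult) -> float:
    """Convert throughput and hourly price into USD per one million tokens."""
    tokens_per_hour = result.tokens_per_second * 3600
    return result.hourly_cost_usd / tokens_per_hour * 1_000_000


def relative_savings(baseline: BenchmarkResult, candidate: BenchmarkResult) -> float:
    """Fraction saved by the candidate relative to the baseline (negative means costlier)."""
    base = cost_per_million_tokens(baseline)
    return (base - cost_per_million_tokens(candidate)) / base


# Hypothetical placeholder values, NOT real benchmark data.
baseline = BenchmarkResult("baseline-gpu", tokens_per_second=1000.0, hourly_cost_usd=3.60)
candidate = BenchmarkResult("candidate-chip", tokens_per_second=2000.0, hourly_cost_usd=5.40)

for r in (baseline, candidate):
    print(f"{r.name}: ${cost_per_million_tokens(r):.2f} per 1M tokens")
print(f"savings vs baseline: {relative_savings(baseline, candidate):.0%}")
```

A faster chip only saves money if its throughput advantage outweighs its price premium, which is why the comparison uses cost per token rather than raw speed.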

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Nvidia faces increasing pressure from hyperscalers such as Google, Amazon, and Microsoft, which are aggressively developing custom silicon (ASICs), such as TPUs and Inferentia, to reduce their reliance on Nvidia's high-cost H100/B200 GPUs.

- The rise of 'Small Language Models' (SLMs) and edge AI is shifting demand away from massive, centralized GPU clusters toward more power-efficient, specialized hardware, for which Nvidia's current monolithic GPU architecture is not optimized.

- Regulatory headwinds, particularly tightening US export controls on high-end AI chips to China, have forced Nvidia to create 'de-powered' variants, eroding its competitive moat and allowing local Chinese alternatives to gain market share.

📊 Competitor Analysis

| Feature | Nvidia (Blackwell) | Google (TPU v6) | AWS (Trainium2) |
|---|---|---|---|
| Primary Focus | General-purpose AI training/inference | Optimized for Transformer models | Optimized for LLM training/inference |
| Pricing Model | High-margin hardware sales | Internal use / Cloud TPU service | Cloud-only (EC2 instances) |
| Key Advantage | CUDA ecosystem dominance | Deep integration with JAX/TensorFlow | Cost-efficiency for AWS workloads |

🛠️ Technical Deep Dive

- Nvidia's Blackwell architecture combines two reticle-limit GPU dies, connected by a 10 TB/s chip-to-chip link, to work around the physical size limit of a single die.

- The shift toward 'disaggregated' compute is pushing Nvidia to focus more on NVLink Switch systems and InfiniBand networking, as the bottleneck moves from raw compute to data interconnect bandwidth.

- Emerging competitors are using RISC-V architectures and domain-specific accelerators that prioritize energy efficiency (performance per watt) over the raw FP8/FP16 throughput that defines Nvidia's current flagship products.
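The claim that the bottleneck moves from compute to interconnect can be illustrated with a simple roofline-style estimate. The sketch below assumes computation and data transfer overlap perfectly, so a step takes as long as the slower of the two. The function name and all workload figures are hypothetical placeholders, not specifications of any Nvidia product.

```python
def step_time_seconds(flops, peak_flops_per_s, bytes_moved, link_bytes_per_s):
    """Estimate one step's duration and name the limiting resource.

    Assumes compute and transfer overlap perfectly (an idealization),
    so the step time is the maximum of the two component times.
    """
    compute_s = flops / peak_flops_per_s
    transfer_s = bytes_moved / link_bytes_per_s
    limiter = "compute" if compute_s >= transfer_s else "interconnect"
    return max(compute_s, transfer_s), limiter


# Hypothetical workload: 1e15 FLOPs and 2e12 bytes exchanged per step.
before = step_time_seconds(1e15, 1e15, 2e12, 1e12)

# Doubling peak compute while leaving the link unchanged.
after = step_time_seconds(1e15, 2e15, 2e12, 1e12)

print(before)  # (2.0, 'interconnect')
print(after)   # (2.0, 'interconnect')
```

In this toy case, doubling compute does not shorten the step at all because the link is already the limiter, which is the situation that makes interconnect bandwidth the more valuable investment.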

🔮 Future Implications

AI analysis grounded in cited sources

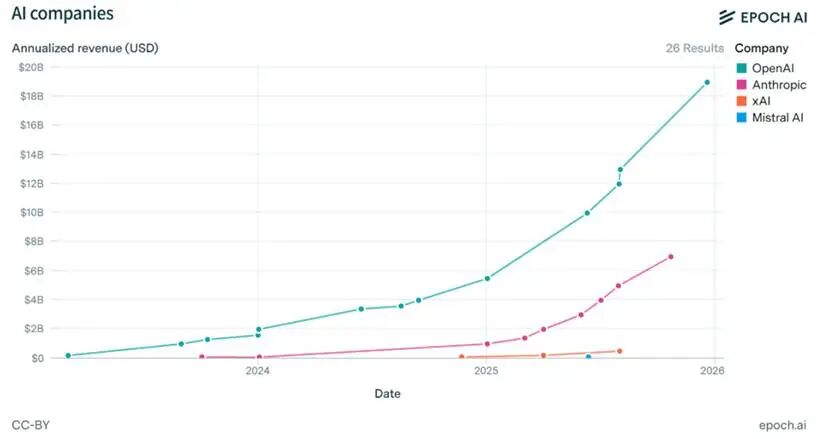

Nvidia's gross margins will compress below 70% by 2027.

Increased competition from custom silicon and the commoditization of inference hardware will force Nvidia to lower prices to maintain market share.
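The link between price cuts and gross margin is simple arithmetic: with unit cost held fixed, gross margin is (price − cost) / price, so a fractional price cut d turns a margin m into 1 − (1 − m) / (1 − d). The sketch below works this through. The 75% starting margin is an illustrative placeholder, not a reported Nvidia figure, and the function names are hypothetical.

```python
def margin_after_price_cut(current_margin: float, price_cut: float) -> float:
    """Gross margin after cutting price by `price_cut`, with unit cost unchanged."""
    cost_share = 1.0 - current_margin          # cost as a fraction of the old price
    return 1.0 - cost_share / (1.0 - price_cut)


def price_cut_to_reach(current_margin: float, target_margin: float) -> float:
    """Fractional price cut at which the margin falls exactly to `target_margin`."""
    return 1.0 - (1.0 - current_margin) / (1.0 - target_margin)


# Illustrative placeholder: a 75% starting margin (assumption, not reported data).
start = 0.75
print(f"margin after a 10% price cut: {margin_after_price_cut(start, 0.10):.1%}")
print(f"price cut needed to hit 70%:  {price_cut_to_reach(start, 0.70):.1%}")
```

Under these assumptions, a price cut of roughly one sixth would be enough to bring a 75% margin down to 70%, which shows how sensitive high margins are to pricing pressure.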

Software-defined hardware will become the primary competitive differentiator.

As hardware performance plateaus, proprietary software stacks such as CUDA will face growing competition from open-source alternatives such as Triton and MLIR as the preferred way to optimize AI models.

⏳ Timeline

2022-11

Launch of ChatGPT triggers massive global demand for Nvidia A100/H100 GPUs.

2023-10

US government updates export controls, restricting high-end AI chip sales to China.

2024-03

Nvidia announces the Blackwell architecture at GTC 2024.

2025-06

Major cloud providers report significant increases in internal custom silicon deployment.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体