🕸️ LangChain Blog

Human Judgment in Agent Loops

💡Unlock tacit knowledge to supercharge LangChain agents

⚡ 30-Second TL;DR

What Changed

AI agents mirror team knowledge for optimal performance

Why It Matters

Emphasizes human-in-the-loop design for agent reliability and alignment with team expertise. Helps practitioners build more effective, knowledge-infused agents. Bridges the gap between documented and tacit knowledge.

What To Do Next

Add human feedback loops to your LangChain agents via LangGraph interrupts and checkpointers.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

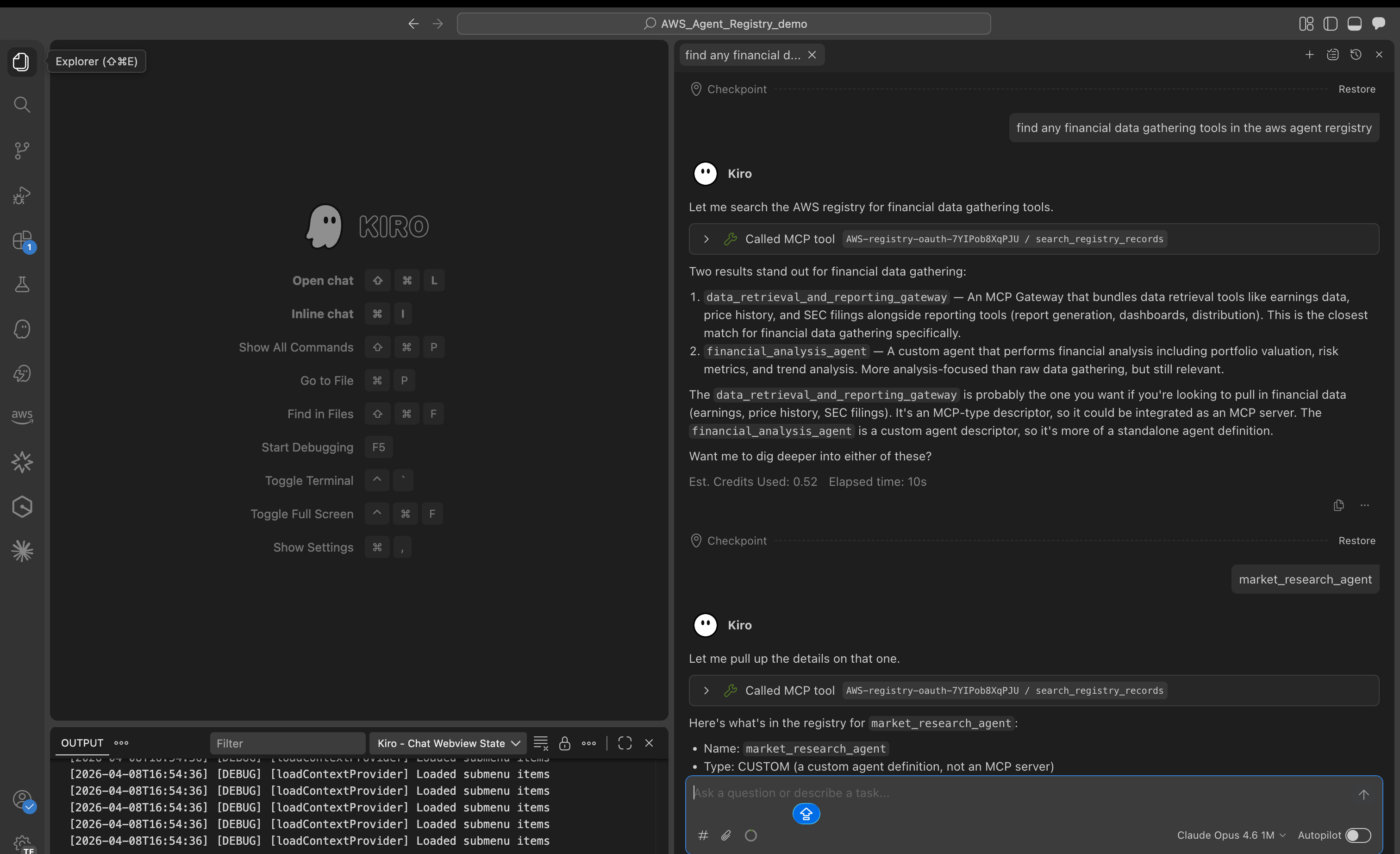

- Human-in-the-loop (HITL) architectures increasingly use 'Human-as-a-Judge' frameworks to mitigate LLM hallucinations and alignment drift in autonomous agent workflows.

- Tacit knowledge is being integrated into agentic systems through RAG (Retrieval-Augmented Generation) pipelines that prioritize semantic search over structured databases to capture nuanced organizational context.

- LangChain's approach treats human-in-the-loop as a design pattern rather than an afterthought, enabling agents to pause and request human intervention for high-stakes decisions or to resolve ambiguity.
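The "pause and ask" decision in the last takeaway can be reduced to a small gating rule. The following is a minimal sketch, not LangChain's API: `ProposedAction`, `needs_human`, the `confidence` signal, and the `0.8` threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    """An action the agent wants to take (hypothetical structure)."""
    description: str
    confidence: float  # assumed self-reported confidence in [0, 1]
    high_stakes: bool  # assumed flag set by the application, not the model


def needs_human(action: ProposedAction, min_confidence: float = 0.8) -> bool:
    """Escalate when the action is high-stakes or the agent is unsure."""
    return action.high_stakes or action.confidence < min_confidence


if __name__ == "__main__":
    for action in (
        ProposedAction("Send weekly status email", 0.95, False),
        ProposedAction("Issue a large refund", 0.95, True),
        ProposedAction("Pick a vendor from ambiguous notes", 0.40, False),
    ):
        print(action.description, "->", "ask human" if needs_human(action) else "proceed")
```

In practice the threshold and the definition of "high-stakes" would come from the team's own risk policy, which is where tacit knowledge tends to enter the loop.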

📊 Competitor Analysis

| Feature | LangChain (LangGraph) | Microsoft AutoGen | CrewAI |
|---|---|---|---|
| Human-in-the-loop | Native, state-based persistence | Event-driven, manual intervention | Task-based delegation |
| Pricing | Open Source (Core) | Open Source | Open Source / Managed |
| Strengths | Flexibility and customization | Multi-agent orchestration | Ease of use and abstraction |

🛠️ Technical Deep Dive

- Implementation relies on state persistence layers (e.g., checkpointers) that allow the agent to pause execution, store the current state, and resume after human feedback.

- Uses graph-based state machines in which human intervention nodes gate the outgoing transitions, so the agent cannot proceed until the required state transition is authorized.

- Supports human-in-the-loop via interrupt points in the execution graph, allowing developers to inject human-provided data or corrections directly into the agent's state.
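The pause, persist, and resume cycle described in these bullets can be illustrated with a toy state machine. This is a standard-library sketch of the pattern only, not LangGraph's actual API: `InMemoryCheckpointer`, `run`, the node names, and the refund scenario are hypothetical stand-ins.

```python
import copy


class InMemoryCheckpointer:
    """Toy persistence layer: stores (next_node, state) per thread id."""

    def __init__(self):
        self._store = {}

    def put(self, thread_id, checkpoint):
        self._store[thread_id] = copy.deepcopy(checkpoint)

    def get(self, thread_id):
        return copy.deepcopy(self._store.get(thread_id))


def draft(state):
    # The agent proposes an action but does not carry it out yet.
    return {"draft": f"Refund {state['amount']} to {state['customer']}"}


def execute(state):
    # Runs only after a human has had the chance to update the state.
    if not state.get("approved"):
        return {"result": "rejected by reviewer"}
    return {"result": "executed: " + state["draft"]}


NODES = {"draft": draft, "execute": execute}
EDGES = {"draft": "execute", "execute": None}  # linear graph for brevity
INTERRUPT_BEFORE = {"execute"}  # pause before this node for human review


def run(checkpointer, thread_id, initial_state=None, human_update=None):
    """Start a new run, or resume a paused one if a checkpoint exists."""
    saved = checkpointer.get(thread_id)
    if saved is None:
        node, state, resuming = "draft", dict(initial_state or {}), False
    else:
        node, state = saved
        state.update(human_update or {})  # inject the human's input
        resuming = True

    while node is not None:
        if node in INTERRUPT_BEFORE and not resuming:
            checkpointer.put(thread_id, (node, state))
            return "interrupted", state
        resuming = False
        state.update(NODES[node](state))
        node = EDGES[node]

    checkpointer.put(thread_id, (None, state))
    return "done", state


if __name__ == "__main__":
    cp = InMemoryCheckpointer()
    print(run(cp, "thread-1", {"customer": "acme", "amount": 120}))
    print(run(cp, "thread-1", human_update={"approved": True}))
```

The first call stops before `execute` and saves a checkpoint; the second call, which may happen much later or in another process if the store is durable, merges the reviewer's input into the saved state and continues from the paused node.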

🔮 Future Implications

AI analysis grounded in cited sources

Human-in-the-loop will become the standard for enterprise-grade agent deployment.

Regulatory and operational risk requirements will mandate human oversight for autonomous agents handling sensitive organizational data.

Tacit knowledge extraction will shift from manual documentation to automated 'experience capture'.

Agents will increasingly be trained to observe and log expert workflows to codify tacit knowledge without requiring explicit documentation.

⏳ Timeline

2022-10

LangChain library launched to simplify LLM application development.

2024-01

Introduction of LangGraph to support cyclic, stateful agent workflows.

2024-05

Release of LangGraph's persistence and human-in-the-loop capabilities.



Original source: LangChain Blog ↗