🗾 ITmedia AI+ (Japan)

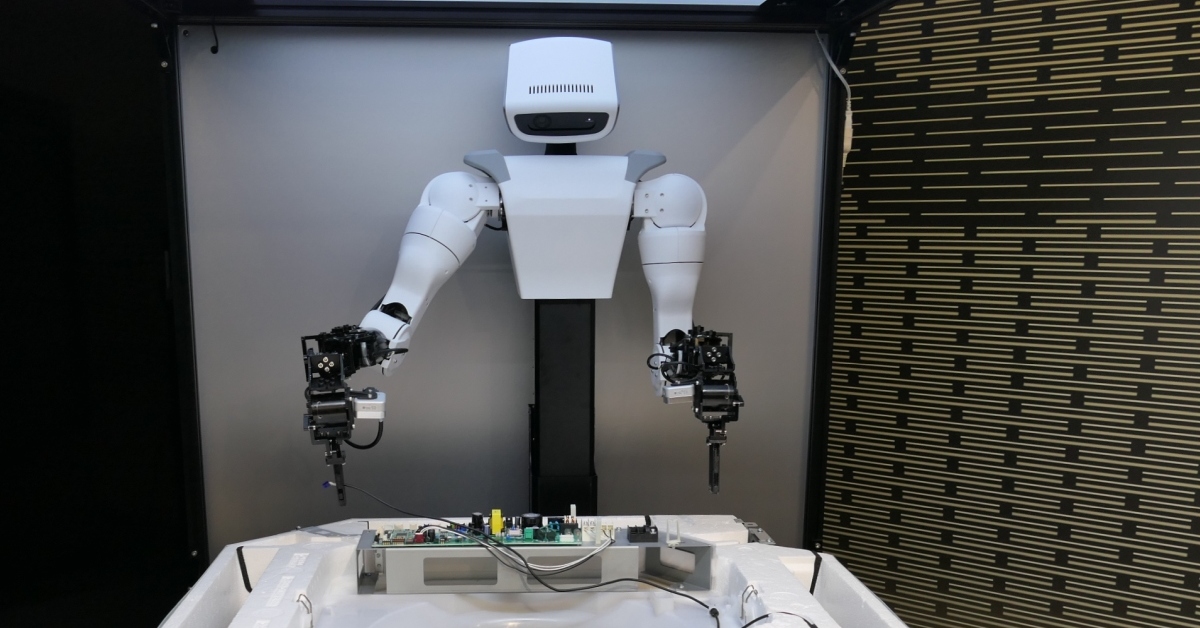

Hitachi Launches IWIM Physical AI Robots

💡Hitachi's IWIM: self-learning robots for real-world tasks

⚡ 30-Second TL;DR

What Changed

Hitachi announces IWIM Physical AI model

Why It Matters

Advances embodied AI for industrial robotics, potentially accelerating automation in manufacturing. Positions Hitachi as a key player in physical AI applications.

What To Do Next

Visit Hitachi's Physical AI Experience Studio preview to test IWIM prototypes.

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- IWIM (Integrated World-Interaction Model) leverages a multimodal architecture that fuses sensor data with large-scale environmental understanding to reduce the need for extensive pre-programming in unstructured factory environments.

- The technology uses a 'human-in-the-loop' reinforcement learning framework, allowing robots to refine movement precision based on real-time feedback from human operators during initial deployment phases.

- Hitachi is positioning the Physical AI Experience Studio as a B2B co-creation hub, specifically targeting the integration of IWIM into existing legacy manufacturing systems rather than requiring complete facility overhauls.

📊 Competitor Analysis

| Feature | Hitachi IWIM | Fanuc (AI-based Robotics) | ABB (OmniCore/AI) |
|---|---|---|---|
| Core Focus | On-site self-learning/adaptation | High-speed precision/repeatability | Modular automation/software-defined |
| Learning Model | Multimodal Physical AI | Reinforcement learning (specific tasks) | Digital twin-driven optimization |
| Deployment | Retrofit/Legacy integration | Factory-floor optimized | System-wide orchestration |

🛠️ Technical Deep Dive

- Architecture: Employs a transformer-based foundation model trained on both synthetic simulation data and real-world sensor telemetry.

- Sensor Fusion: Integrates high-frequency LiDAR, depth-sensing cameras, and tactile force sensors to map physical constraints in real-time.

- Learning Mechanism: Implements 'Action Optimization' via a policy-gradient reinforcement learning loop that adjusts joint torque and trajectory planning without requiring manual code updates.

- Edge Computing: The model is optimized for local inference on edge-AI controllers to ensure sub-millisecond latency in safety-critical environments.
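The "policy-gradient reinforcement learning loop" mentioned in the list above can be illustrated with a textbook REINFORCE update. This is only a minimal sketch under stated assumptions, not Hitachi's implementation: the source does not disclose IWIM's algorithm, so the single-joint setup, the reward function, and every name here (`train_torque_policy`, `target_torque`, `sigma`) are hypothetical.

```python
import random


def train_torque_policy(target_torque=2.0, steps=4000, lr=0.005, sigma=0.5, seed=0):
    """REINFORCE with a 1-D Gaussian policy over a single joint torque.

    Hypothetical toy setup: the 'environment' rewards torques close to
    target_torque. A real robot would derive reward from task feedback.
    """
    rng = random.Random(seed)
    mu = 0.0        # policy mean: the torque the policy currently prefers
    baseline = 0.0  # running average of reward, used to reduce variance

    for _ in range(steps):
        # Sample a torque command from the current policy N(mu, sigma^2).
        action = rng.gauss(mu, sigma)

        # Assumed reward: negative squared error to the desired torque.
        reward = -(action - target_torque) ** 2

        # Gradient of log N(action; mu, sigma^2) with respect to mu.
        grad_log_prob = (action - mu) / sigma ** 2

        # Policy-gradient step: move mu toward actions that beat the baseline.
        mu += lr * (reward - baseline) * grad_log_prob

        # Update the running-average baseline.
        baseline += 0.05 * (reward - baseline)

    return mu


if __name__ == "__main__":
    learned = train_torque_policy()
    print(f"learned mean torque: {learned:.2f}")
```

With the default settings the learned mean should settle near the assumed target of 2.0. The point of the sketch is that the policy parameter is adjusted from sampled outcomes alone, which is the sense in which such a loop needs no manual code updates.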

🔮 Future Implications

AI analysis grounded in cited sources.

Hitachi will transition from a hardware-centric robotics provider to a software-as-a-service (SaaS) model for industrial automation.

The focus on self-learning models suggests a shift toward recurring revenue through model updates and performance optimization services.

IWIM will significantly reduce the 'deployment-to-production' time for custom robotic tasks by at least 40%.

By replacing manual programming with self-learning, the time-intensive phase of fine-tuning robot motion for specific, non-standard tasks is drastically shortened.

⏳ Timeline

2024-05

Hitachi announces strategic shift toward 'Physical AI' to bridge the gap between digital models and physical robotics.

2025-02

Hitachi unveils initial research results on multimodal sensor fusion for industrial robots.

2026-03

Official launch of IWIM model and preview of the Physical AI Experience Studio.

AI-curated news aggregator. All content rights belong to original publishers.

Original source: ITmedia AI+ (Japan)