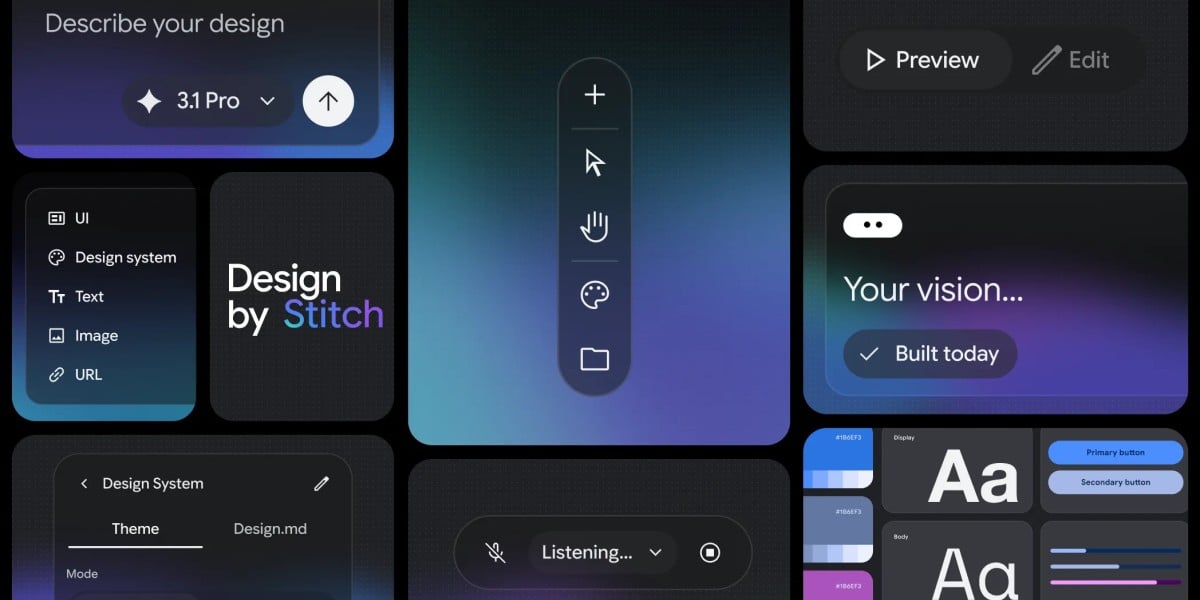

Google Stitch Adds Voice UI Design

💡 Google's Stitch now turns spoken (even shouted) design requests into UIs, enabling fast prototyping for builders.

⚡ 30-Second TL;DR

What Changed

Stitch now accepts voice input, so you can speak (or shout) your UI design intent instead of typing it.

Why It Matters

Describing a UI in natural language, spoken or typed, could speed up prototyping for designers and developers. It also lowers the barrier for non-experts to build interfaces, although the reliability of AI-generated code remains an open concern. How widely the feature is adopted may shape Google's future AI design workflows.

What To Do Next

Test Google's Stitch tool with voice commands to prototype your next UI design.

🧠 Deep Insight

Web-grounded analysis with 7 cited sources.

🔑 Enhanced Key Takeaways

- Stitch generates interactive prototypes and complete user flows from designs, letting you preview app journeys instantly, with AI-suggested next screens.[1][2][5]

- Users can upload images, sketches, screenshots, or code snippets to Stitch's infinite canvas, and the AI uses them as context when generating and refining designs.[1][2][3]

- Design systems can be extracted from existing websites or interfaces and applied project-wide via a DESIGN.md file, keeping colors, typography, and components consistent.[2]

- Exports include editable Figma layers with auto-layout and production-ready HTML/CSS or React code, easing handoff to development.[3]
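The DESIGN.md approach amounts to keeping design tokens in one file that every screen reads from. As a rough illustration only, the sketch below assumes a hypothetical, simplified token format of `- name: value` bullet lines (this is not Stitch's documented DESIGN.md schema) and converts it into CSS custom properties, so colors and typography are defined once and reused everywhere. The names `parse_tokens`, `to_css_variables`, and `SAMPLE_DESIGN_MD` are illustrative, not part of any Stitch API.

```python
import re

# Hypothetical, simplified stand-in for a DESIGN.md file.
# Assumption: Stitch's real DESIGN.md layout may differ; this format is invented for illustration.
SAMPLE_DESIGN_MD = """\
# Design System

## Colors
- primary: #1a73e8
- surface: #ffffff

## Typography
- font-body: Roboto, sans-serif
- font-size-base: 16px
"""

# Matches bullet lines of the form "- token-name: value"; headings and prose are skipped.
TOKEN_LINE = re.compile(r"^\s*-\s*([a-z0-9-]+)\s*:\s*(.+?)\s*$", re.IGNORECASE)


def parse_tokens(markdown: str) -> dict[str, str]:
    """Collect name/value pairs from token bullet lines."""
    tokens: dict[str, str] = {}
    for line in markdown.splitlines():
        match = TOKEN_LINE.match(line)
        if match:
            name, value = match.groups()
            tokens[name.lower()] = value
    return tokens


def to_css_variables(tokens: dict[str, str]) -> str:
    """Emit a :root block so every component can reference var(--name)."""
    body = "\n".join(f"  --{name}: {value};" for name, value in tokens.items())
    return ":root {\n" + body + "\n}"


if __name__ == "__main__":
    print(to_css_variables(parse_tokens(SAMPLE_DESIGN_MD)))
```

Components would then use `color: var(--primary);` instead of hard-coded hex values, which is the kind of project-wide consistency a shared design-system file is meant to provide.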

📎 Sources (7)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

- Google Stitch Complete Guide: Vibe Design 2026 (nxcode.io)

- Google Just Introduced Vibe Design: Here's What It Means for UI Designers (muz.li)

- Google Stitch Complete Guide: AI UI Design Tool 2026 (almcorp.com)

- Video (youtube.com)

- Stitch AI UI Design (Google Blog)

- Google Stitch product site (stitch.withgoogle.com)

- Google Stitch for UI Design (uxplanet.org)

AI-curated news aggregator. All content rights belong to original publishers.

Original source: The Register (AI/ML)