Google Stitch Beta: Sketches to React Code in Seconds

💡 Google's Stitch turns sketches into React code in seconds, speeding up UI prototyping.

⚡ 30-Second TL;DR

What Changed

Google has publicly released the Stitch beta in Google Labs.

Why It Matters

Stitch accelerates UI prototyping for developers, bridging design and code workflows. By leveraging Gemini, it lowers barriers for rapid iteration in app development.

What To Do Next

Join Google Labs and upload a UI sketch to Stitch beta for instant React code generation.

🧠 Deep Insight

Web-grounded analysis with 8 cited sources.

🔑 Enhanced Key Takeaways

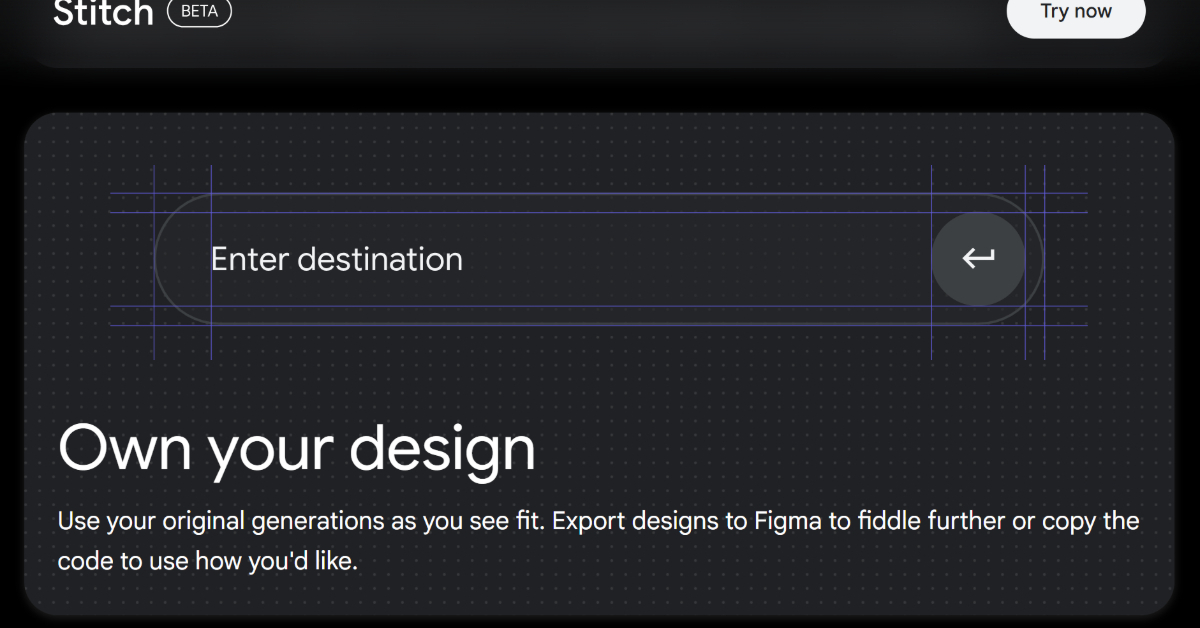

- Google Stitch launched at Google I/O 2025 on May 20, 2025, as an experimental tool from Google Labs leveraging Gemini's multimodal capabilities for both design generation and code output[1].

- The platform supports advanced prototyping features including automatic interaction detection (buttons, dropdowns) and conditional logic for user flows, with AI analyzing screen layouts to determine optimal interaction patterns[4].

- Stitch enables rapid design iteration through point-and-edit functionality and multi-screen app flow design within a single project, allowing designers to modify individual elements via prompts without rebuilding entire layouts[4].
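To make "interaction detection" and "conditional logic for user flows" concrete, here is a minimal TypeScript sketch of how a prototype flow of that kind could be modeled. It is not Stitch's implementation or export format, which the cited sources do not describe; every type and function name (`UiElement`, `Transition`, `interactiveElements`, `nextScreen`) is hypothetical.

```typescript
// Hypothetical model of a multi-screen prototype; not Stitch's actual format.
type ElementKind = "button" | "dropdown" | "text" | "image";

interface UiElement {
  id: string;
  kind: ElementKind;
}

// A transition fires when `trigger` is activated on screen `from`.
// The optional `when` guard stands in for conditional flow logic.
interface Transition {
  from: string;
  trigger: string;
  to: string;
  when?: (state: Record<string, boolean>) => boolean;
}

// Pick out the element kinds the article names as interactive.
function interactiveElements(elements: UiElement[]): UiElement[] {
  return elements.filter((e) => e.kind === "button" || e.kind === "dropdown");
}

// Resolve the next screen: the first matching transition whose guard
// passes wins; if none matches, the user stays on the current screen.
function nextScreen(
  transitions: Transition[],
  screen: string,
  trigger: string,
  state: Record<string, boolean>
): string {
  for (const t of transitions) {
    const guardPasses = t.when === undefined || t.when(state);
    if (t.from === screen && t.trigger === trigger && guardPasses) {
      return t.to;
    }
  }
  return screen;
}
```

In this sketch, a "Continue" button could lead to a dashboard for signed-in users and to a login screen otherwise, simply by listing two transitions with complementary guards.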

📊 Competitor Analysis

| Feature | Google Stitch | Figma | UXPin | Zeplin |
|---|---|---|---|---|
| AI-Powered UI Generation | Yes | Limited | Limited | No |
| Text-to-Design | Yes | No | No | No |
| Image/Sketch-to-UI | Yes | No | No | No |
| Code Export (HTML/CSS/React) | Yes | Limited | Yes | Limited |
| Figma Integration | Direct export | Native | Yes | Yes |
| Real-time Team Collaboration | No | Yes | Yes | Yes |
| Prototyping with AI Interactions | Yes | Yes | Yes | Limited |
| Free Tier | Yes (Beta) | Yes | Limited | No |

🛠️ Technical Deep Dive

- AI Model: Powered by Google's Gemini multimodal models with advanced reasoning capabilities for complex interface generation[1]

- Input Processing: Accepts natural language prompts, hand-drawn sketches, wireframes, and screenshots as input sources[1]

- Output Formats: Generates HTML/CSS, Tailwind CSS, and JSX/React code with clean, production-ready syntax[2]

- Design System Support: Planned features include design token consistency, component library generation, and brand guideline enforcement[1]

- Customization Engine: Supports theme toggling (light/dark), color scheme modification, corner radius adjustment, and font selection[2]

- Prototype Analysis: AI analyzes generated screens to automatically add interactions to interactive elements (buttons, dropdowns, forms)[4]
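The customization options listed above (light/dark theme, color scheme, corner radius, font) map naturally onto design tokens. The following TypeScript sketch shows one way such settings could be turned into CSS custom properties. It is an illustration under stated assumptions, not code produced or documented by Stitch: the `ThemeSettings` shape, the function names, the variable names, and the fallback colors are all hypothetical.

```typescript
// Hypothetical theme settings mirroring the options named above.
type ThemeMode = "light" | "dark";

interface ThemeSettings {
  mode: ThemeMode;
  primaryColor: string; // any CSS color value
  cornerRadiusPx: number; // corner radius in pixels
  fontFamily: string;
}

// Convert settings to CSS custom properties. The variable names and
// background/text colors are illustrative assumptions.
function toCssVariables(theme: ThemeSettings): Record<string, string> {
  const isDark = theme.mode === "dark";
  return {
    "--color-primary": theme.primaryColor,
    "--color-background": isDark ? "#121212" : "#ffffff",
    "--color-text": isDark ? "#f5f5f5" : "#1a1a1a",
    "--radius": `${theme.cornerRadiusPx}px`,
    "--font-family": theme.fontFamily,
  };
}

// Render the variables as a :root rule that a stylesheet could include.
function toCssRule(vars: Record<string, string>): string {
  const body = Object.keys(vars)
    .map((name) => `  ${name}: ${vars[name]};`)
    .join("\n");
  return `:root {\n${body}\n}`;
}

// Toggle between light and dark without mutating the input.
function toggleMode(theme: ThemeSettings): ThemeSettings {
  return { ...theme, mode: theme.mode === "light" ? "dark" : "light" };
}
```

Keeping the settings in one small object means a theme toggle or radius change only regenerates the variables, while components that reference `var(--radius)` or `var(--color-primary)` stay untouched.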


📎 Sources (8)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.


Original source: ITmedia AI+ (Japan)