⚛️ Ars Technica • Fresh • collected in 4h

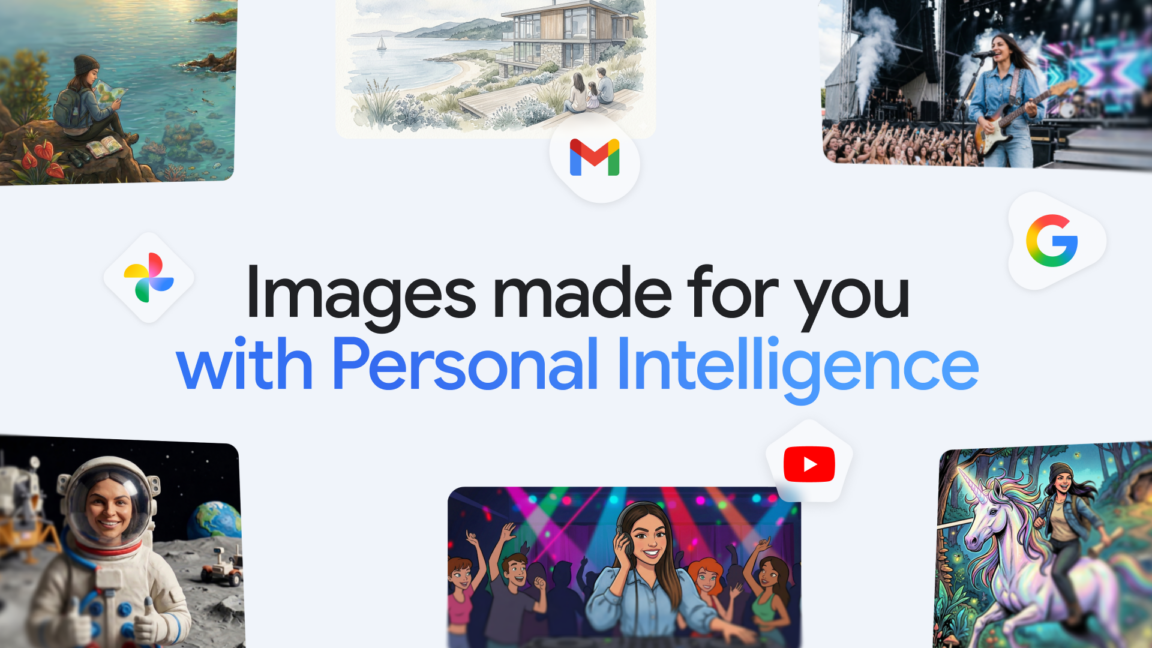

Gemini Integrates Google Photos for Personalized Images

💡 Gemini can now draw on your Google Photos library to generate custom AI images, which matters for personalized content workflows.

⚡ 30-Second TL;DR

What Changed

Gemini directly accesses Google Photos for image generation

Why It Matters

This feature boosts Gemini's appeal for creators who need custom visuals built from personal media, which could improve user retention. It also gives Google an edge in personalized multimodal AI and puts pressure on competing image-generation tools.

What To Do Next

Test Gemini's Google Photos integration by prompting personalized image generations in the app.

Who should care: Creators & Designers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The integration utilizes a new 'Contextual Grounding' layer that allows the Nano Banana model to perform semantic analysis on private photo metadata and visual content without training on the user's raw image data.

- Privacy controls include a mandatory 'Opt-in' toggle and a granular permission system that allows users to restrict Gemini's access to specific albums or date ranges within Google Photos.

- The feature is currently limited to Gemini Advanced subscribers and is being rolled out in a phased approach, starting with English-language users in the United States and Canada.
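The opt-in toggle and album/date-range restrictions described above can be pictured as a filter applied before any photo reaches the model. The following is a minimal sketch under stated assumptions, not Google's implementation: `Photo`, `AccessPolicy`, and `visible_photos` are hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Set


@dataclass(frozen=True)
class Photo:
    """Hypothetical photo record; the real Google Photos schema is not shown in the source."""
    photo_id: str
    album: str
    taken_on: date


@dataclass
class AccessPolicy:
    """Hypothetical user-controlled policy mirroring the described privacy controls."""
    opted_in: bool = False                     # nothing is visible until the user opts in
    allowed_albums: Optional[Set[str]] = None  # None means no album restriction
    start: Optional[date] = None               # inclusive lower bound, if set
    end: Optional[date] = None                 # inclusive upper bound, if set

    def permits(self, photo: Photo) -> bool:
        """Return True only if the photo passes every active restriction."""
        if not self.opted_in:
            return False
        if self.allowed_albums is not None and photo.album not in self.allowed_albums:
            return False
        if self.start is not None and photo.taken_on < self.start:
            return False
        if self.end is not None and photo.taken_on > self.end:
            return False
        return True


def visible_photos(photos: List[Photo], policy: AccessPolicy) -> List[Photo]:
    """Keep only the photos the policy allows, preserving input order."""
    return [p for p in photos if policy.permits(p)]
```

With the default policy (`opted_in=False`) the function returns an empty list, which matches the "mandatory opt-in" behavior described in the takeaway.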

📊 Competitor Analysis

| Feature | Gemini (Nano Banana) | OpenAI (ChatGPT/DALL-E) | Midjourney |
|---|---|---|---|
| Personal Library Access | Direct Google Photos Integration | Manual Uploads Required | Manual Uploads Required |
| Pricing | Included in Gemini Advanced | Included in Plus/Team | Subscription-based |
| Personalization | High (Contextual Grounding) | Medium (Prompt-based) | Low (Style-based) |

🛠️ Technical Deep Dive

- Nano Banana architecture: A multimodal, lightweight transformer model optimized for on-device or edge-cloud hybrid inference.

- Grounding mechanism: Uses a vector database index of user photo embeddings to retrieve relevant visual features during the inference prompt-processing stage.

- Privacy architecture: Implements 'Federated-style' processing where the model retrieves visual context via an encrypted API bridge, ensuring the base model weights remain isolated from user-specific visual data.

- Latency optimization: Employs speculative decoding to reduce the time-to-first-token when generating images based on high-resolution source photos.
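The grounding mechanism in the list above amounts to nearest-neighbor retrieval over photo embeddings. Here is a rough sketch of that idea, assuming cosine similarity and a plain in-memory dictionary in place of a real vector database. The function names and the embedding layout are illustrative assumptions, not details from the source.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve_top_k(
    query: Sequence[float],
    index: Dict[str, Sequence[float]],
    k: int = 2,
) -> List[Tuple[str, float]]:
    """Return the k photo ids whose embeddings are most similar to the query.

    `index` maps a (hypothetical) photo id to its embedding vector. A production
    system would likely use an approximate nearest-neighbor index rather than
    this exhaustive scan.
    """
    scored = [(photo_id, cosine_similarity(query, emb)) for photo_id, emb in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

In this sketch, the prompt would be embedded into the same vector space as the photos, and the returned ids would identify which photos supply visual context for generation.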

🔮 Future Implications

AI analysis grounded in cited sources

Google will expand this integration to include Google Drive documents and emails by Q4 2026.

The successful implementation of the Contextual Grounding layer in Photos provides a scalable framework for indexing other personal data silos.

Third-party developers will be granted limited API access to the Nano Banana grounding engine.

Google's historical pattern of opening successful internal AI tools to the developer ecosystem suggests a move toward a 'Personalized AI' platform model.

⏳ Timeline

2023-12

Google announces the Gemini model family, including the initial Nano architecture.

2024-05

Google I/O introduces 'Project Astra' and enhanced multimodal capabilities for Gemini.

2025-09

Google begins internal testing of the Nano Banana model for specialized image generation tasks.

2026-02

Google Photos API is updated to support secure, scoped access for AI-driven generative features.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: Ars Technica ↗