☁️ AWS Machine Learning Blog

Fast LLM Fine-Tuning with S3 Data

💡 Speed up LLM fine-tuning with S3 unstructured data in SageMaker – hands-on guide.

⚡ 30-Second TL;DR

What Changed

S3 integration with SageMaker Unified Studio for unstructured data

Why It Matters

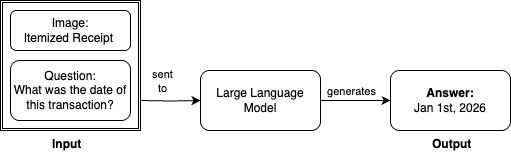

Accelerates LLM development by directly using S3 unstructured data, reducing preprocessing time for teams. Enables faster iteration on vision-language models like VQA, benefiting multimodal AI applications.

What To Do Next

Integrate your S3 unstructured data with SageMaker Unified Studio to fine-tune Llama 3.2 for VQA.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The integration leverages the S3 Express One Zone storage class, which provides single-digit millisecond latency specifically designed for high-performance ML training workloads.

- SageMaker Unified Studio utilizes a new data connector architecture that allows direct mounting of S3 buckets as POSIX-compliant file systems, eliminating the need for manual data downloading or pre-processing steps.

- The workflow incorporates automated data validation and schema inference directly from S3, reducing the overhead of preparing unstructured visual datasets for multimodal models like Llama 3.2.
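
The validation idea in the last takeaway can be pictured with a small sketch. This is not the Unified Studio implementation. It assumes a hypothetical bucket layout in which every image object has a JSON annotation sharing the same key stem, and the function name `pair_images_with_annotations` is invented for illustration.

```python
from pathlib import PurePosixPath

# Assumption: which object suffixes count as images in this hypothetical dataset.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def pair_images_with_annotations(keys):
    """Split S3 object keys into matched (image, annotation) pairs and orphans.

    Assumed layout (hypothetical): `foo/bar.jpg` is described by `foo/bar.json`.
    Keys with any other suffix are ignored.
    """
    images, annotations = {}, {}
    for key in keys:
        path = PurePosixPath(key)
        suffix = path.suffix.lower()
        if suffix in IMAGE_SUFFIXES:
            images[str(path.with_suffix(""))] = key
        elif suffix == ".json":
            annotations[str(path.with_suffix(""))] = key

    # A stem present on both sides is a usable training example.
    matched = sorted(images.keys() & annotations.keys())
    pairs = [(images[stem], annotations[stem]) for stem in matched]

    # Anything present on only one side is reported instead of silently dropped.
    orphans = sorted(
        [images[stem] for stem in images.keys() - annotations.keys()]
        + [annotations[stem] for stem in annotations.keys() - images.keys()]
    )
    return pairs, orphans
```

Running a check like this on the key listing before a job starts surfaces missing annotations early, which is cheaper than discovering them partway through fine-tuning.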

📊 Competitor Analysis

| Feature | AWS SageMaker Unified Studio | Google Vertex AI | Azure Machine Learning |
|---|---|---|---|
| S3/Storage Integration | Native S3 Express One Zone integration | Cloud Storage (GCS) integration | Azure Blob Storage integration |
| Model Catalog | SageMaker Catalog (Llama 3.2 focus) | Model Garden (Gemini/Llama focus) | Model Catalog (Llama/Phi focus) |
| Fine-tuning Workflow | Unified Studio (Low-code/No-code) | Vertex AI Pipelines/AutoML | Azure ML Studio/Prompt Flow |
| Pricing Model | Pay-as-you-go (Compute + Storage) | Pay-as-you-go (Compute + Storage) | Pay-as-you-go (Compute + Storage) |

🛠️ Technical Deep Dive

- Architecture: Utilizes SageMaker's distributed training libraries (SMDataParallel) to optimize throughput when reading from S3 buckets.

- Data Handling: Implements a streaming data loader that fetches image-text pairs on-demand, reducing local disk footprint during the fine-tuning of the 11B parameter model.

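- Compute: Leverages P5 or P4d instances for the Llama 3.2 11B Vision Instruct fine-tuning, using FP8 precision training to increase training throughput. FP8 Tensor Core support is a feature of the H100 GPUs in P5 instances; the A100 GPUs in P4d instances do not provide it, so the FP8 path would apply to P5 only.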

- Integration: The Unified Studio interface abstracts the underlying SageMaker Training Job API, automatically configuring VPC endpoints for secure S3 access.
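
The on-demand loading described under Data Handling can be sketched as a plain Python generator. This is an illustration under stated assumptions, not the loader used in the original post: the function name `stream_image_text_pairs`, the bucket name, and the convention that each annotation is a JSON object are all hypothetical. The only client method it relies on is `get_object(Bucket=..., Key=...)`, which boto3's S3 client provides and which returns a dict whose `"Body"` has a `read()` method.

```python
import json


def stream_image_text_pairs(s3_client, bucket, pairs):
    """Lazily yield (image_bytes, annotation_dict) for each key pair.

    `pairs` is an iterable of (image_key, annotation_key) tuples.
    `s3_client` is assumed to behave like a boto3 S3 client.
    Objects are read into memory one pair at a time; nothing is written
    to local disk, and no request is made until the caller iterates.
    """
    for image_key, annotation_key in pairs:
        image_bytes = s3_client.get_object(Bucket=bucket, Key=image_key)["Body"].read()
        raw_annotation = s3_client.get_object(Bucket=bucket, Key=annotation_key)["Body"].read()
        yield image_bytes, json.loads(raw_annotation)
```

With boto3 installed and credentials configured, a client from `boto3.client("s3")` could be passed as `s3_client`. Because the function is a generator, only the pair currently being consumed is held in memory, which is the property the bullet above attributes to the streaming loader.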

🔮 Future Implications

AI analysis grounded in cited sources.

Enterprise adoption of multimodal LLMs will accelerate due to reduced data pipeline complexity.

By removing the bottleneck of data movement between storage and compute, organizations can iterate on custom vision-language models significantly faster.

Cloud providers will increasingly prioritize 'data-centric' AI tooling over 'model-centric' tooling.

The shift toward direct S3-to-training integration indicates that infrastructure providers view data accessibility as the primary competitive differentiator for LLM fine-tuning.

⏳ Timeline

2023-11

AWS announces S3 Express One Zone for high-performance storage.

2024-09

Meta releases Llama 3.2, introducing vision-capable models.

2024-12

AWS launches SageMaker Unified Studio to consolidate ML development workflows.

2026-03

AWS integrates S3 general purpose buckets with SageMaker Unified Studio for streamlined LLM fine-tuning.


Original source: AWS Machine Learning Blog ↗