🐳 Docker Blog

Docker-Arm Tool Scans Hugging Face for Arm64

💡Verify HF models for Arm64 to optimize AI infra costs (tutorial).

⚡ 30-Second TL;DR

What Changed

A Docker and Arm collaboration scans Hugging Face Spaces for Arm64 readiness.

Why It Matters

This lets AI practitioners verify that Hugging Face models run efficiently on cost-effective Arm-based hardware such as AWS Graviton or Apple Silicon, which can reduce deployment costs and improve performance.

What To Do Next

Scan your Hugging Face Spaces with Docker MCP Toolkit and Arm MCP Server for Arm64 readiness.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

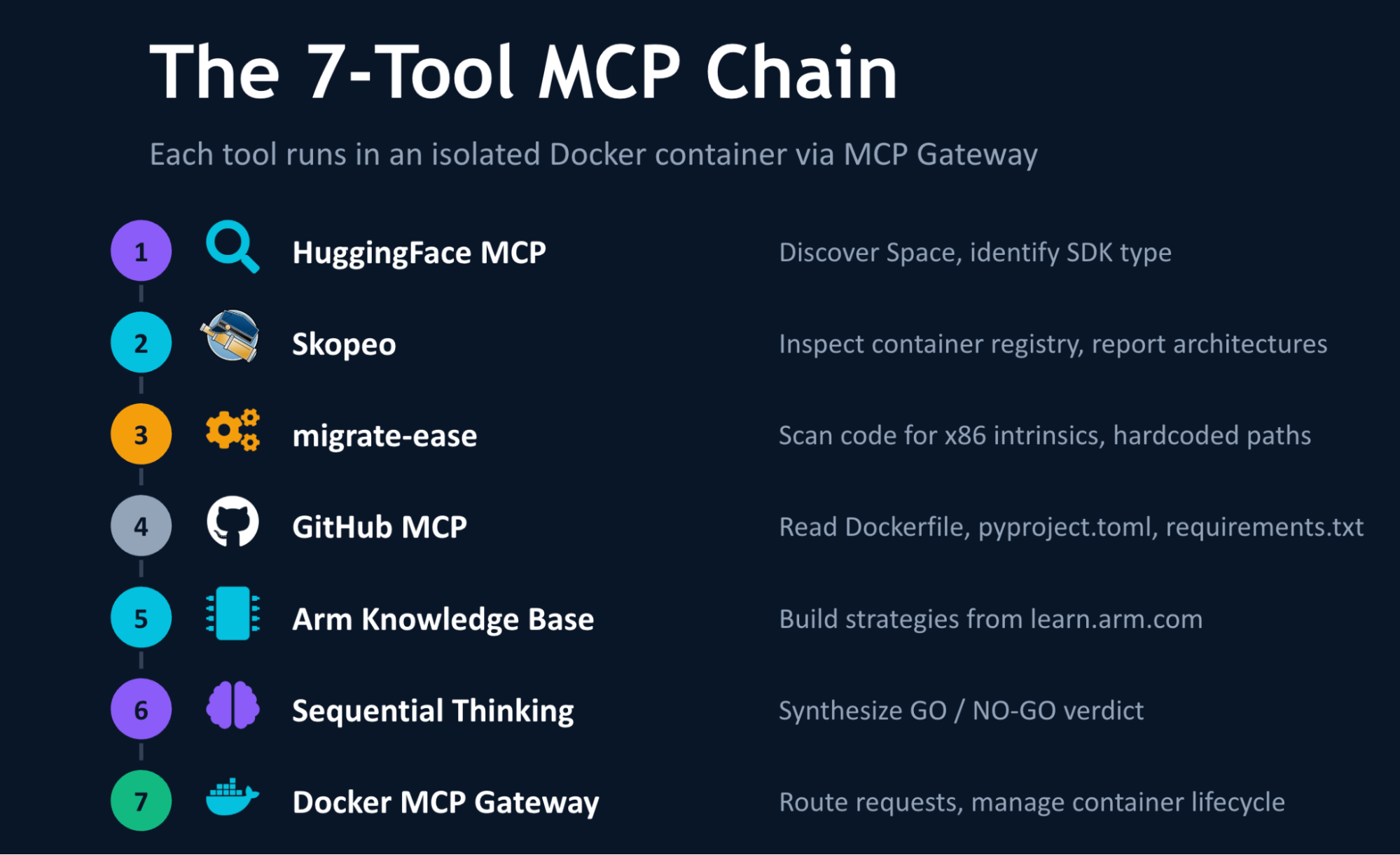

- The integration uses the Model Context Protocol (MCP) to let LLM-based agents interact directly with Docker containers and Arm-specific build environments, automating the detection of non-portable instruction sets.

- The initiative targets the 'Arm64-ification' of the Hugging Face ecosystem: reducing reliance on x86-based cloud instances for inference in order to lower the operational cost of hosting ML models.

- The toolchain combines Docker's multi-arch build capabilities with Arm's Neoverse-optimized libraries to suggest automated remediations for codebases previously locked to x86-specific SIMD instructions.
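
One basic readiness signal behind the multi-arch point above is whether an image's index lists an Arm64 variant at all. The following is a minimal sketch, assuming the input is an already-parsed OCI image index (or Docker manifest list) with the standard `manifests[].platform` fields. The helper names and the sample data are hypothetical, and this is not a description of how the Docker MCP Toolkit or Arm MCP Server performs its checks.

```python
def supported_platforms(index: dict) -> set[str]:
    """Collect the 'os/architecture' pairs listed in an image index."""
    platforms = set()
    for manifest in index.get("manifests", []):
        platform = manifest.get("platform", {})
        os_name = platform.get("os")
        arch = platform.get("architecture")
        # Skip entries without a usable platform (for example, attestation
        # manifests that report "unknown" for os and architecture).
        if not os_name or not arch or "unknown" in (os_name, arch):
            continue
        platforms.add(f"{os_name}/{arch}")
    return platforms


def has_arm64(index: dict) -> bool:
    """True if the index advertises a linux/arm64 image."""
    return "linux/arm64" in supported_platforms(index)


# Hypothetical sample data, shaped like an OCI image index.
MULTI_ARCH_INDEX = {
    "schemaVersion": 2,
    "manifests": [
        {"platform": {"os": "linux", "architecture": "amd64"}},
        {"platform": {"os": "linux", "architecture": "arm64"}},
        {"platform": {"os": "unknown", "architecture": "unknown"}},
    ],
}
AMD64_ONLY_INDEX = {
    "schemaVersion": 2,
    "manifests": [{"platform": {"os": "linux", "architecture": "amd64"}}],
}

if __name__ == "__main__":
    print(sorted(supported_platforms(MULTI_ARCH_INDEX)))
    print(has_arm64(AMD64_ONLY_INDEX))
```

JSON of this shape can be obtained with `docker buildx imagetools inspect --raw <image>` and parsed with `json.loads`. A single-platform image returns a plain manifest with no `manifests` list, in which case this sketch reports no platforms and the architecture has to be read from the image config instead.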

🛠️ Technical Deep Dive

- Uses the Model Context Protocol (MCP) to connect AI agents to local or remote Docker daemons.

- An automated scanning pipeline identifies x86-specific intrinsics (e.g., AVX2, AVX-512) within C++ binaries and Python extensions.

- Maps the identified incompatibilities to Arm Neon or SVE (Scalable Vector Extension) equivalents to produce automated refactoring suggestions.

- Integrates with Docker Buildx to cross-compile and run validation tests on Arm64 runners during the CI/CD process.
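
The scan-and-map steps above can be illustrated with a small sketch. It assumes a much simpler setting than the real pipeline: it searches C/C++ source text (not compiled binaries) for Intel-style `_mm_`, `_mm256_`, and `_mm512_` intrinsic names and looks each one up in a tiny hand-written table of Neon counterparts. The function name, the table, and the sample source are hypothetical. The table covers only a few 128-bit float operations, and wider AVX2 or AVX-512 calls are reported without a suggestion because they have no one-to-one Neon equivalent.

```python
import re

# Matches Intel-style intrinsic names such as _mm_add_ps or _mm256_add_ps.
X86_INTRINSIC = re.compile(r"\b_mm(?:256|512)?_[a-z0-9_]+\b")

# Illustrative subset only: 128-bit SSE float ops and their Neon counterparts.
NEON_HINTS = {
    "_mm_add_ps": "vaddq_f32",
    "_mm_sub_ps": "vsubq_f32",
    "_mm_mul_ps": "vmulq_f32",
    "_mm_loadu_ps": "vld1q_f32",
    "_mm_storeu_ps": "vst1q_f32",
}


def scan_source(text: str) -> list[dict]:
    """Report each x86 intrinsic call site with a Neon hint when one is known."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in X86_INTRINSIC.finditer(line):
            name = match.group(0)
            findings.append(
                {
                    "line": lineno,
                    "intrinsic": name,
                    # None means "no direct mapping in this table".
                    "neon_hint": NEON_HINTS.get(name),
                }
            )
    return findings


# Hypothetical C source used only to exercise the scanner.
SAMPLE_SOURCE = """\
#include <immintrin.h>
void add(const float *a, const float *b, float *out) {
    __m128 x = _mm_loadu_ps(a);
    __m128 y = _mm_loadu_ps(b);
    _mm_storeu_ps(out, _mm_add_ps(x, y));
    __m256 z = _mm256_setzero_ps();
}
"""

if __name__ == "__main__":
    for finding in scan_source(SAMPLE_SOURCE):
        print(finding)
```

A regex pass like this only flags candidates. Confirming that a suggested replacement is correct still requires building and testing on Arm64, which is the role the Buildx validation step plays in the description above.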

🔮 Future Implications

AI analysis grounded in cited sources

Cloud providers will see a shift in ML workload distribution toward Arm-based instances.

Automated compatibility scanning reduces the technical barrier for developers to migrate ML inference workloads to more power-efficient Arm64 hardware.

The Model Context Protocol will become the standard interface for AI-driven infrastructure management.

By enabling LLMs to execute and inspect containerized environments, this collaboration sets a precedent for using MCP to automate complex DevOps tasks.

⏳ Timeline

2023-05

Docker announces expanded support for Arm64 development workflows.

2024-11

Anthropic introduces the Model Context Protocol (MCP) to standardize AI-to-data connectivity.

2025-08

Docker integrates initial MCP support into the Docker Desktop developer experience.

2026-02

Docker and Arm announce joint initiative to optimize ML model deployment on Arm64.

Original source: Docker Blog ↗