Dell CEO: AI Memory Demand Surges 625x by 2028

💡 Dell warns that AI memory demand could grow 625x by 2028, so plan infrastructure now.

⚡ 30-Second TL;DR

What Changed

Dell projects that AI accelerator memory demand will increase 625x from 2023 to 2028.

Why It Matters

Rising memory demand could drive up AI training and inference costs, delaying deployments. Companies may need to optimize models or diversify suppliers to mitigate shortages.

What To Do Next

Model your 2028 AI cluster memory needs using Dell's forecast and test HBM alternatives.
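As a rough starting point for that modeling exercise, the sketch below converts the 625x figure into an implied yearly growth factor and a year-by-year trajectory. It assumes smooth compound growth over the five years from 2023 to 2028, which the forecast does not state, and it assumes your own cluster tracks the industry-wide figure, which it may not. The function names and the 10 TB baseline are hypothetical and exist only for illustration.

```python
def implied_annual_factor(total_factor: float, start_year: int, end_year: int) -> float:
    """Yearly growth factor that compounds to total_factor over the period.

    Assumption: growth is smooth and geometric; the forecast only gives
    the endpoints (2023 and 2028), not the path between them.
    """
    years = end_year - start_year
    if years <= 0:
        raise ValueError("end_year must be later than start_year")
    return total_factor ** (1.0 / years)


def project_demand(baseline: float, total_factor: float,
                   start_year: int, end_year: int) -> dict[int, float]:
    """Scale a baseline memory figure year by year using the implied factor."""
    g = implied_annual_factor(total_factor, start_year, end_year)
    return {start_year + k: baseline * g ** k
            for k in range(end_year - start_year + 1)}


if __name__ == "__main__":
    # Hypothetical baseline: 10 TB of accelerator memory in 2023.
    g = implied_annual_factor(625, 2023, 2028)
    print(f"implied yearly growth factor: {g:.2f}x")
    for year, tb in project_demand(10.0, 625, 2023, 2028).items():
        print(f"{year}: {tb:,.0f} TB")
```

Under these assumptions, 625x over five years works out to roughly 3.62x per year. Treat the result as an upper-bound planning scenario rather than a prediction for any single deployment.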

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The 625x growth projection is primarily driven by the transition from HBM3 to HBM4 and HBM4e standards, which are required to support the massive parameter counts of next-generation Large Language Models (LLMs).

- Dell is strategically pivoting its supply chain to prioritize 'AI-optimized' server architectures, specifically increasing the ratio of liquid-cooled systems to manage the thermal output of high-density memory configurations.

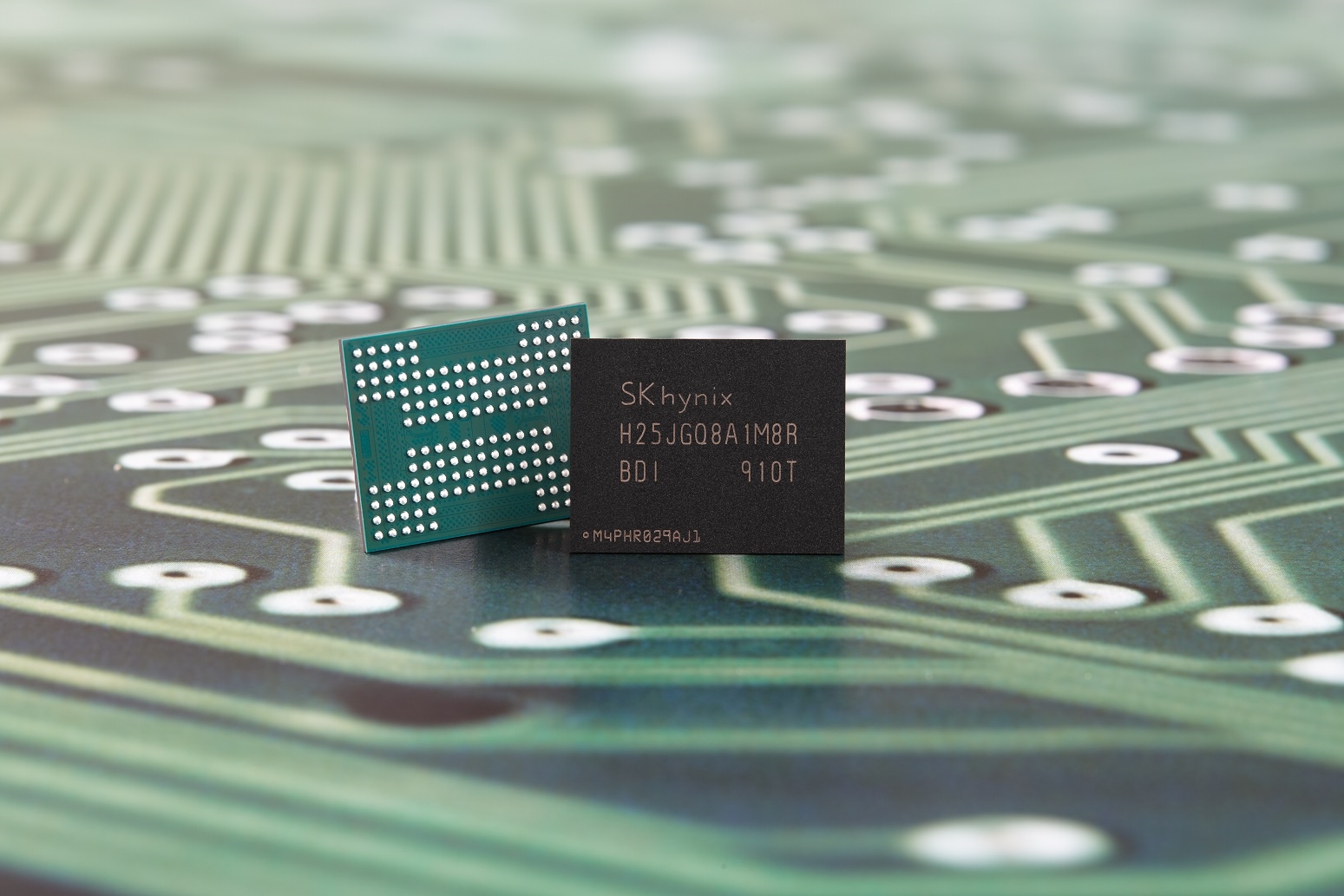

- Industry analysts note that the bottleneck is not just raw memory production, but the 'CoWoS' (Chip-on-Wafer-on-Substrate) advanced packaging capacity, which remains the primary constraint for integrating high-bandwidth memory with AI accelerators.

🛠️ Technical Deep Dive

- HBM (High Bandwidth Memory) architecture utilizes 3D stacking of DRAM dies connected via TSVs (Through-Silicon Vias) to achieve massive memory bandwidth.

- The shift toward HBM4 involves moving to a 2048-bit wide interface, doubling the width of HBM3e, to accommodate the increased data throughput required by Blackwell-class and future AI GPUs.

- Dell's infrastructure strategy focuses on 'PowerEdge XE' series servers, which are designed to support higher TDP (Thermal Design Power) envelopes necessitated by these memory-dense configurations.
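To make the interface-width point concrete, the sketch below estimates peak per-stack bandwidth as interface width times per-pin data rate, divided by 8 to convert bits to bytes. The 1024-bit and 2048-bit widths follow from the text above. The per-pin rates (9.6 Gb/s for HBM3e, 8.0 Gb/s for HBM4) are illustrative assumptions that do not come from this article, and actual rates vary by vendor and product. The function name is hypothetical.

```python
def stack_bandwidth_gb_per_s(interface_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth of one HBM stack in GB/s.

    interface_bits: width of the stack's data interface in bits.
    pin_rate_gbps:  data rate per pin in Gb/s (an assumed value here).
    """
    if interface_bits <= 0 or pin_rate_gbps <= 0:
        raise ValueError("width and pin rate must be positive")
    return interface_bits * pin_rate_gbps / 8.0  # bits -> bytes


if __name__ == "__main__":
    # Pin rates below are assumptions chosen for illustration only.
    hbm3e = stack_bandwidth_gb_per_s(1024, 9.6)
    hbm4 = stack_bandwidth_gb_per_s(2048, 8.0)
    print(f"HBM3e (1024-bit @ 9.6 Gb/s): {hbm3e:.1f} GB/s per stack")
    print(f"HBM4  (2048-bit @ 8.0 Gb/s): {hbm4:.1f} GB/s per stack")
    print(f"ratio: {hbm4 / hbm3e:.2f}x")
```

The comparison shows why the wider interface matters: doubling the width raises per-stack bandwidth even when the assumed per-pin rate is lower.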

AI-curated news aggregator. All content rights belong to original publishers.

Original source: cnBeta (Full RSS) ↗