钛媒体 (TMTPost)

DeepSeek V4 Delay Fuels De-CUDA Fire

💡 The DeepSeek V4 delay is boosting interest in CUDA alternatives, with direct consequences for AI infrastructure.

⚡ 30-Second TL;DR

What Changed

DeepSeek V4 has been postponed to a 2026 release window.

Why It Matters

The delay accelerates work on CUDA alternatives amid US chip restrictions, which benefits Chinese AI firms and open-source inference projects worldwide.

What To Do Next

Test vLLM or SGLang on a non-NVIDIA backend (for example, AMD ROCm) to evaluate LLM inference without a CUDA dependency.

Who should care: Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The delay is reportedly linked to severe supply constraints on high-bandwidth memory (HBM) and advanced packaging capacity, both of which are critical for scaling DeepSeek's Mixture-of-Experts (MoE) architecture.

- Industry analysts suggest the "de-CUDA" push is driven by tightening US export controls on high-end AI chips, which force Chinese labs to optimize their training stacks for domestic NPUs and GPUs.

- DeepSeek's engineering team is reportedly pivoting to a hardware-agnostic training framework to reduce reliance on NVIDIA's proprietary software stack, a move that has introduced significant stability problems during V4 training.

📊 Competitor Analysis

| Feature | DeepSeek V4 (Projected) | Qwen-Max (Alibaba) | Llama 4 (Meta) |
|---|---|---|---|
| Architecture | MoE (Optimized) | Dense/MoE Hybrid | Dense/MoE Hybrid |
| Training Stack | Heterogeneous/De-CUDA | CUDA-Optimized | CUDA-Native |
| Primary Market | China/Global Open | China/Enterprise | Global Open |
| Benchmark Focus | Reasoning/Efficiency | Multimodal/Coding | General/Agentic |

🛠️ Technical Deep Dive

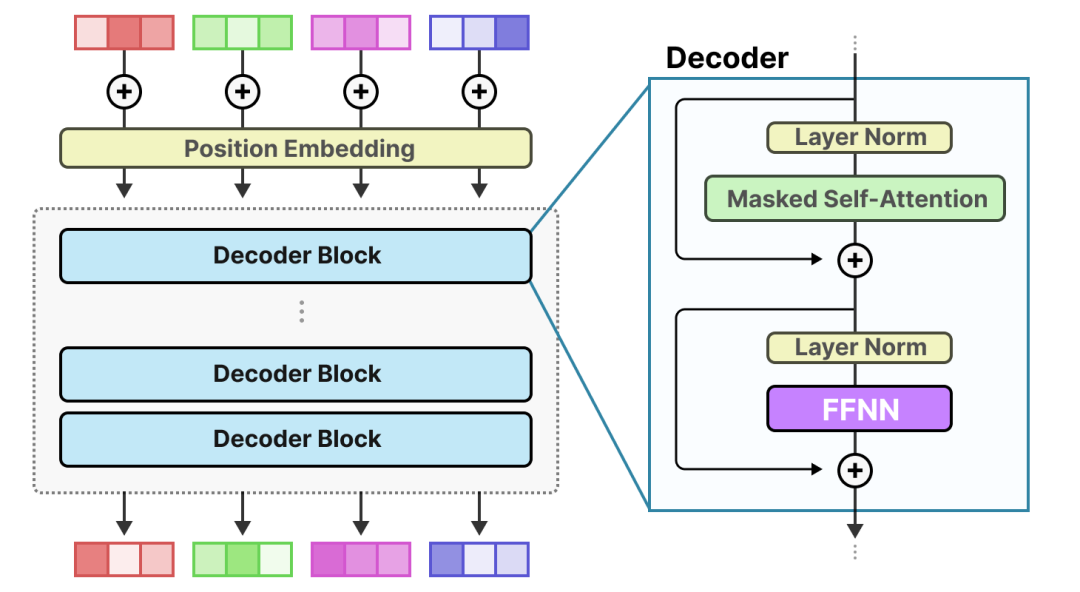

- Architecture: Expected to use an evolved Mixture-of-Experts (MoE) design with a higher parameter count and refined routing mechanisms to improve inference efficiency.

- Training Infrastructure: Reportedly moving from pure NVIDIA H100/A100 clusters to a hybrid environment that incorporates domestic Chinese accelerators (e.g., the Huawei Ascend series).

- Software Stack: Custom kernels are being developed to replace CUDA-specific operations, with a focus on collective communication primitives optimized for non-NVIDIA interconnects.
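To make the "routing mechanisms" mentioned in the architecture item concrete, here is a minimal sketch of generic top-k softmax gating, the textbook form of MoE routing. It is not DeepSeek's actual router, whose V4 design has not been published. The names `top_k_route` and `moe_forward`, the toy scalar experts, and the choice of `k=2` are all assumptions made for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts and renormalize their weights.

    Hypothetical helper: returns [(expert_index, weight), ...] where the
    weights sum to 1. Ties are broken in favor of the lower expert index.
    """
    if not 1 <= k <= len(router_logits):
        raise ValueError("k must be between 1 and the number of experts")
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, router_logits, experts, k=2):
    """Combine the outputs of the selected experts, weighted by the router."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


# Toy scalar "experts" standing in for feed-forward sub-networks.
experts = [lambda x: x + 1.0, lambda x: 2.0 * x, lambda x: -x, lambda x: x * x]

# One token whose router scores favor experts 1 and 3 equally.
logits = [0.1, 2.0, -1.0, 2.0]
routes = top_k_route(logits, k=2)
output = moe_forward(3.0, logits, experts, k=2)
```

Only the selected experts run for a given token, which is why MoE models can grow total parameter count without a proportional increase in per-token compute. Production routers add load-balancing terms and batched dispatch, which this sketch omits.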

🔮 Future Implications

AI analysis grounded in cited sources

DeepSeek will release a hardware-agnostic training framework by Q4 2026.

The current development bottleneck necessitates a proprietary software layer to maintain performance parity across heterogeneous hardware clusters.

Chinese AI labs will see a 20% increase in training costs due to de-CUDA efforts.

The engineering overhead required to optimize models for non-NVIDIA hardware significantly increases compute-hour consumption and development time.

⏳ Timeline

2024-05

DeepSeek releases V2, marking a significant milestone in MoE efficiency.

2024-12

DeepSeek V3 launch, demonstrating competitive performance with top-tier global models.

2025-09

Initial internal targets for DeepSeek V4 release are missed, signaling supply chain issues.

2026-02

DeepSeek publicly acknowledges technical challenges in scaling training across heterogeneous hardware.



Original source: 钛媒体 (TMTPost)