cnBeta (Full RSS)

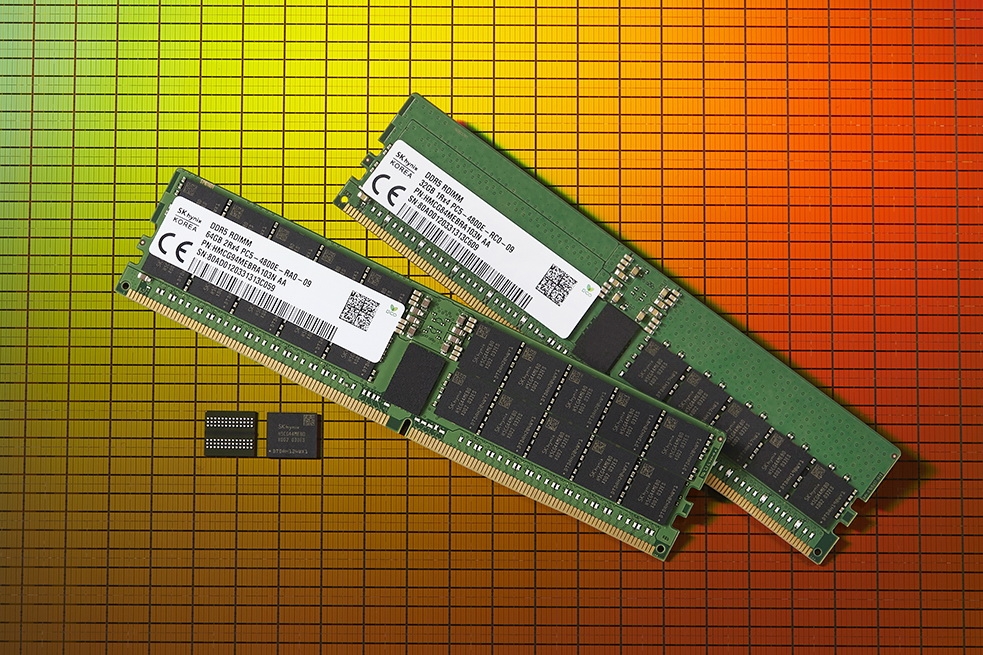

DDR5 Prices Drop Up to 29% on Amazon and Newegg

💡DDR5 down up to 29%: a good time to cut memory costs for AI infrastructure

⚡ 30-Second TL;DR

What Changed

DDR5 prices fall up to 29% on major US platforms

Why It Matters

Cheaper DDR5 lowers the cost barrier for scaling AI training clusters with high memory-bandwidth needs. It could also accelerate infrastructure upgrades as memory-compression techniques advance.

What To Do Next

Scan Newegg for DDR5 deals to upgrade AI server RAM capacity now.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Market analysts attribute the price volatility to a sudden inventory surplus caused by major OEMs shifting focus toward HBM4 (High Bandwidth Memory) production for AI server clusters.

- Google's TurboQuant technology, while cited as a factor, is specifically optimized for LLM inference workloads and reduces the physical DRAM capacity requirements of edge-AI devices by up to 40%.

- Retailers are aggressively clearing DDR5 inventory to make shelf space for the upcoming JEDEC-standardized DDR6 modules, which are expected to enter mass production in Q3 2026.

🛠️ Technical Deep Dive

Google TurboQuant is a lossy compression algorithm designed for memory-constrained AI inference:

- Quantization Strategy: Utilizes non-linear, adaptive bit-width quantization (ranging from 2-bit to 6-bit) to compress model weights stored in DRAM.

- Hardware Integration: Operates via a dedicated memory controller firmware update that intercepts data requests and decompresses weights on the fly within the memory controller's cache.

- Latency Impact: Introduces a latency penalty of under 5 ns, which is offset by reduced bus traffic and higher effective bandwidth per clock cycle.
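To make the quantization idea concrete, here is a minimal Python sketch of low-bit weight quantization. It is not TurboQuant's actual algorithm, whose details are not given here. As a simplifying assumption it uses plain symmetric uniform quantization with a single scale per block of weights, rather than the non-linear, adaptive scheme described above. The function names `quantize`, `dequantize`, and `max_error` are hypothetical.

```python
def quantize(weights, bits):
    """Map floats to signed integer codes using one shared scale.

    Simplified stand-in: symmetric uniform quantization, not the
    non-linear adaptive scheme attributed to TurboQuant.
    """
    if not 2 <= bits <= 8:
        raise ValueError("bits must be between 2 and 8")
    qmax = 2 ** (bits - 1) - 1  # largest code magnitude, e.g. 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from integer codes."""
    return [c * scale for c in codes]


def max_error(weights, bits):
    """Largest absolute reconstruction error at the given bit width."""
    codes, scale = quantize(weights, bits)
    restored = dequantize(codes, scale)
    return max(abs(w - r) for w, r in zip(weights, restored))


if __name__ == "__main__":
    block = [0.5, -1.0, 0.25, 0.75]  # hypothetical block of weights
    for bits in (2, 4, 6):
        print(bits, "bits -> max error", max_error(block, bits))
```

Fewer bits shrink storage but widen the rounding error, which is the trade-off an adaptive bit-width scheme would presumably tune per block of weights.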

🔮 Future Implications

AI analysis grounded in cited sources

DDR5 consumer pricing will stabilize at a 15-20% lower baseline by Q4 2026.

The transition to DDR6 will force legacy DDR5 inventory into permanent clearance cycles to maintain market relevance.

TurboQuant adoption will lead to a decline in demand for high-capacity consumer RAM kits.

As compression efficiency improves, users will require less physical DRAM to run local AI models, reducing the necessity for 64GB+ consumer kits.
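As a rough illustration of why lower bit widths reduce DRAM needs, the following sketch estimates weight storage only. The 7-billion-parameter model size is a hypothetical example, the function name `weight_footprint_gib` is made up for this sketch, and the estimate ignores activations, the KV cache, and any quantization metadata such as scales.

```python
def weight_footprint_gib(num_params, bits_per_weight):
    """Approximate DRAM needed for model weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 2 ** 30


if __name__ == "__main__":
    params = 7_000_000_000  # hypothetical 7B-parameter model
    for bits in (16, 6, 4, 2):
        gib = weight_footprint_gib(params, bits)
        print(f"{bits:>2}-bit weights: {gib:.2f} GiB")
```

Under these assumptions the footprint scales linearly with bit width, so moving from 16-bit to 4-bit weights cuts weight storage to a quarter.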

⏳ Timeline

2024-11

JEDEC finalizes DDR5-8800 speed bin specifications.

2025-06

Google announces initial research into TurboQuant memory compression for edge AI.

2026-01

Major DRAM manufacturers announce shift of production capacity toward HBM3e and HBM4.

2026-03

Google releases TurboQuant firmware update for select enterprise and consumer chipsets.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: cnBeta (Full RSS)