☁️ AWS Machine Learning Blog

Cost-Efficient Text-to-SQL with Nova Micro

💡Unlock cheap, production-ready text-to-SQL via Nova Micro fine-tuning on Bedrock

⚡ 30-Second TL;DR

What Changed

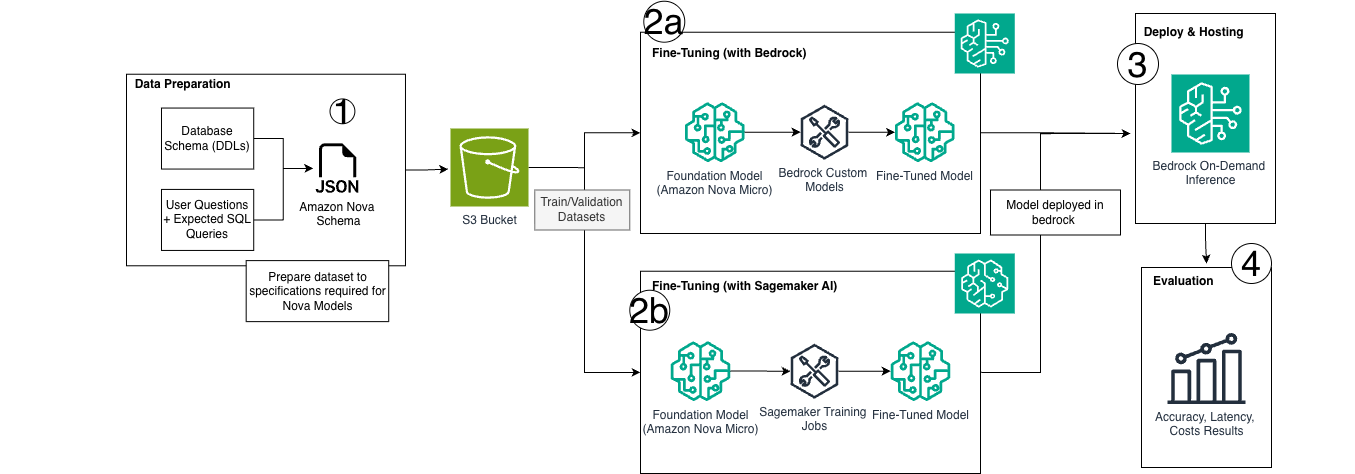

Two fine-tuning methods for custom SQL dialects

Why It Matters

Developers can deploy affordable, high-performance text-to-SQL models, reducing infrastructure costs while scaling to production workloads in data-heavy applications.

What To Do Next

Fine-tune Amazon Nova Micro on your SQL dataset in Amazon Bedrock, then serve the custom model with Bedrock's on-demand inference.
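As a rough illustration of that step, the sketch below assembles the arguments for boto3's `create_model_customization_job` call on the `bedrock` client. The job name, role ARN, bucket paths, base model identifier, and the `epochCount` hyperparameter key are all placeholder assumptions, not values from the AWS post; check them against the Bedrock documentation for Nova Micro before use. The code only builds the request and does not call AWS.

```python
def build_customization_job_request(job_name, custom_model_name, role_arn,
                                    base_model_id, train_s3_uri, output_s3_uri,
                                    epochs=2):
    """Return keyword arguments for bedrock.create_model_customization_job."""
    return {
        "jobName": job_name,
        "customModelName": custom_model_name,
        "roleArn": role_arn,
        "baseModelIdentifier": base_model_id,
        "customizationType": "FINE_TUNING",
        "trainingDataConfig": {"s3Uri": train_s3_uri},
        "outputDataConfig": {"s3Uri": output_s3_uri},
        # Bedrock takes hyperparameter values as strings. The key name
        # "epochCount" is an assumption to confirm for Nova Micro.
        "hyperParameters": {"epochCount": str(epochs)},
    }


# All values below are hypothetical placeholders.
job_request = build_customization_job_request(
    job_name="text-to-sql-nova-micro-job",
    custom_model_name="text-to-sql-nova-micro",
    role_arn="arn:aws:iam::111122223333:role/ExampleBedrockFineTuneRole",
    base_model_id="amazon.nova-micro-v1:0",  # assumed identifier
    train_s3_uri="s3://example-bucket/train/sql_pairs.jsonl",
    output_s3_uri="s3://example-bucket/output/",
)

# With AWS credentials configured, the job could then be submitted with:
#   import boto3
#   boto3.client("bedrock").create_model_customization_job(**job_request)
```

Keeping the request builder separate from the API call makes it easy to review or unit-test the configuration before any billable job starts.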

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Amazon Nova Micro is positioned as a lightweight, high-throughput model optimized for low-latency tasks, which distinguishes it from the larger Nova Pro and Premier variants in the Amazon Nova foundation model family.

- The fine-tuning approaches use Amazon Bedrock's managed fine-tuning service, which lets users create custom model versions without managing the underlying infrastructure and helps address data privacy and security requirements for enterprise database schemas.

- The cost-efficiency argument rests on Nova Micro's smaller parameter count, which lowers per-token latency and inference cost relative to larger general-purpose LLMs on structured SQL generation tasks.
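The cost argument in the last takeaway comes down to simple per-token arithmetic. The sketch below shows the calculation under stated assumptions: the prices and traffic figures are made-up placeholders, not published Bedrock rates, and the function name is hypothetical.

```python
def monthly_cost_usd(requests_per_day, input_tokens, output_tokens,
                     input_price_per_1k, output_price_per_1k, days=30):
    """Estimate monthly on-demand inference spend from per-1K-token prices."""
    per_request = ((input_tokens / 1000) * input_price_per_1k
                   + (output_tokens / 1000) * output_price_per_1k)
    return requests_per_day * days * per_request


# Hypothetical workload: a schema-heavy prompt and a short SQL answer.
workload = {"requests_per_day": 10_000, "input_tokens": 1_500, "output_tokens": 100}

# Placeholder prices in USD per 1K tokens (NOT real rates).
small_model_cost = monthly_cost_usd(**workload, input_price_per_1k=0.0001,
                                    output_price_per_1k=0.0002)
large_model_cost = monthly_cost_usd(**workload, input_price_per_1k=0.001,
                                    output_price_per_1k=0.004)

print(f"small model: ${small_model_cost:,.2f} per month")
print(f"large model: ${large_model_cost:,.2f} per month")
```

Because text-to-SQL prompts are dominated by schema text, the input-token price usually matters more than the output-token price, so it is worth plugging in current rates for both.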

📊 Competitor Analysis

| Feature | Amazon Nova Micro | GPT-4o-mini | Claude 3 Haiku |
|---|---|---|---|
| Primary Use Case | Enterprise SQL/Structured Data | General Purpose/Low Latency | Low Latency/High Throughput |
| Fine-tuning | Supported via Bedrock | Supported via OpenAI API | Supported via Bedrock |
| Pricing Model | On-demand/Provisioned | On-demand/Batch | On-demand/Provisioned |
| SQL Benchmarks | Optimized for custom dialects | Strong zero-shot SQL | Strong zero-shot SQL |

🛠️ Technical Deep Dive

- Model Architecture: Nova Micro is a text-only foundation model designed for high-speed, low-latency inference, utilizing a distilled architecture optimized for instruction-following in structured data environments.

- Fine-tuning Mechanism: Utilizes Amazon Bedrock's fine-tuning API, which supports supervised fine-tuning (SFT) on custom datasets, allowing for the injection of proprietary SQL dialect syntax and schema-specific patterns.

- Inference Optimization: Supports both on-demand inference and Provisioned Throughput, so high-volume text-to-SQL applications can choose between pay-per-token pricing and reserved, predictable capacity.

- Context Window: Supports up to 128K tokens, well beyond the short-to-medium context lengths typical of database schema definitions and query generation prompts.
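The fine-tuning and inference points above can be sketched together. The example below, a minimal illustration rather than AWS's own pipeline, builds one supervised fine-tuning record and one Converse API request from the same prompt template. The `bedrock-conversation-2024` schema version and record layout are assumptions to verify against the current Bedrock documentation, and the system prompt, table schema, helper names, and deployment ARN placeholder are hypothetical.

```python
import json

# Hypothetical system prompt, shared by training and inference.
SYSTEM_PROMPT = "Translate the question into SQL for the given schema. Return only SQL."


def build_user_prompt(schema_ddl, question):
    """Combine schema and question so training and inference prompts match."""
    return f"Schema:\n{schema_ddl}\n\nQuestion: {question}"


def to_training_record(schema_ddl, question, sql):
    """One SFT example in the (assumed) Bedrock conversation format."""
    return {
        "schemaVersion": "bedrock-conversation-2024",
        "system": [{"text": SYSTEM_PROMPT}],
        "messages": [
            {"role": "user",
             "content": [{"text": build_user_prompt(schema_ddl, question)}]},
            {"role": "assistant", "content": [{"text": sql}]},
        ],
    }


def to_converse_request(model_id, schema_ddl, question):
    """Keyword arguments for the bedrock-runtime converse() call."""
    return {
        "modelId": model_id,
        "system": [{"text": SYSTEM_PROMPT}],
        "messages": [
            {"role": "user",
             "content": [{"text": build_user_prompt(schema_ddl, question)}]},
        ],
        # Low temperature keeps SQL output as deterministic as possible.
        "inferenceConfig": {"maxTokens": 512, "temperature": 0.0},
    }


schema = "CREATE TABLE orders (id INT, customer_id INT, total DECIMAL(10, 2));"
record = to_training_record(schema, "What is the total revenue?",
                            "SELECT SUM(total) FROM orders;")
jsonl_line = json.dumps(record)  # one line of the JSONL training file

converse_request = to_converse_request("<custom-model-deployment-arn>", schema,
                                       "How many orders are there?")
# With credentials configured, inference could then be run with:
#   import boto3
#   boto3.client("bedrock-runtime").converse(**converse_request)
```

Reusing one prompt builder for both paths is a deliberate choice: a fine-tuned model tends to perform best when inference prompts match the format it saw during training.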

🔮 Future Implications

AI analysis grounded in cited sources

Enterprise adoption of specialized small language models (SLMs) for SQL will outpace general-purpose LLMs by 2027.

The combination of lower inference costs and higher accuracy on proprietary schemas provides a clear ROI advantage for internal data tooling.

Amazon Bedrock will integrate automated schema-to-prompt mapping for Nova models.

Reducing the manual effort required to prepare database metadata for fine-tuning is the next logical step in simplifying the text-to-SQL pipeline.

⏳ Timeline

2024-12

AWS announces the Amazon Nova foundation model family, including Nova Micro.

2025-03

Amazon Bedrock expands fine-tuning capabilities for the Nova model series.

2026-04

AWS publishes technical guidance on optimizing Nova Micro for custom SQL dialect generation.

AI-curated news aggregator. All content rights belong to original publishers.

Original source: AWS Machine Learning Blog ↗