🛡️ Cloudflare Blog

Cloudflare's Flagship: AI-Age Feature Flags

💡 Sub-ms edge feature flags cut AI rollout latency vs. third-party services (Cloudflare)

⚡ 30-Second TL;DR

What Changed

Cloudflare launched Flagship, a feature-flag service that runs natively on Cloudflare’s global network.

Why It Matters

This reduces rollout friction for AI features, enabling faster experimentation and safer deployments at the edge. AI practitioners gain low-latency control over model variants and A/B tests globally.

What To Do Next

Deploy Flagship on Cloudflare to A/B test your AI model variants with sub-ms latency.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Flagship integrates directly with Cloudflare Workers. Developers can run A/B tests and canary releases without redeploying application code or adding the overhead of an external SDK.

- The service leverages Cloudflare's 'Smart Placement' to automatically move flag evaluation logic closer to the user, further reducing latency for globally distributed AI inference workloads.

- Flagship includes built-in observability dashboards that correlate flag state changes with AI model performance metrics, such as token generation latency and error rates.
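
The summary does not show Flagship's actual API. The sketch below illustrates how percentage-based A/B tests and canary releases are commonly evaluated at the edge. It deterministically hashes a flag key and user ID into a bucket and maps that bucket onto weighted variants.

`FlagConfig`, `Variant`, `assignVariant`, and the model names are hypothetical. The hash is standard 32-bit FNV-1a.

```typescript
// Hypothetical flag shape for illustration; not Flagship's actual schema.
interface Variant {
  name: string;   // e.g. an AI model identifier
  weight: number; // relative share of traffic
}

interface FlagConfig {
  key: string;
  enabled: boolean;
  variants: Variant[];
}

// 32-bit FNV-1a over UTF-16 code units (identical to byte-wise FNV-1a for ASCII keys).
function fnv1a(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    // Multiply by the FNV prime, keeping the low 32 bits as an unsigned value.
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash >>> 0;
}

// Map a user to a variant. The same (flag, user) pair always lands in the
// same bucket, so assignments are sticky without any stored state.
function assignVariant(flag: FlagConfig, userId: string, fallback: string): string {
  const total = flag.variants.reduce((sum, v) => sum + v.weight, 0);
  if (!flag.enabled || total <= 0) return fallback;
  // Bucket is a value in [0, total), derived from the hash.
  const bucket = ((fnv1a(`${flag.key}:${userId}`) % 10000) / 10000) * total;
  let cumulative = 0;
  for (const v of flag.variants) {
    cumulative += v.weight;
    if (bucket < cumulative) return v.name;
  }
  // Unreachable in practice; guards against floating-point edge cases.
  return flag.variants[flag.variants.length - 1].name;
}
```

Assignment is a pure function of the flag key and user ID, so a user keeps the same model variant across requests and across edge locations. The Durable Objects approach to sticky sessions mentioned in the summary is the stateful alternative. It is useful when assignments must survive later changes to the weights, which would otherwise move some users between buckets.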

📊 Competitor Analysis

| Feature | Cloudflare Flagship | LaunchDarkly | Statsig |
|---|---|---|---|
| Architecture | Edge-native (Workers) | SDK-based (Client/Server) | SDK/API-based |
| Latency | Sub-millisecond (Global) | Network-dependent | Network-dependent |
| AI Integration | Native (Model inference) | Limited | Moderate |
| Pricing | Usage-based (Workers) | Tiered/Seat-based | Tiered/Volume-based |

🛠️ Technical Deep Dive

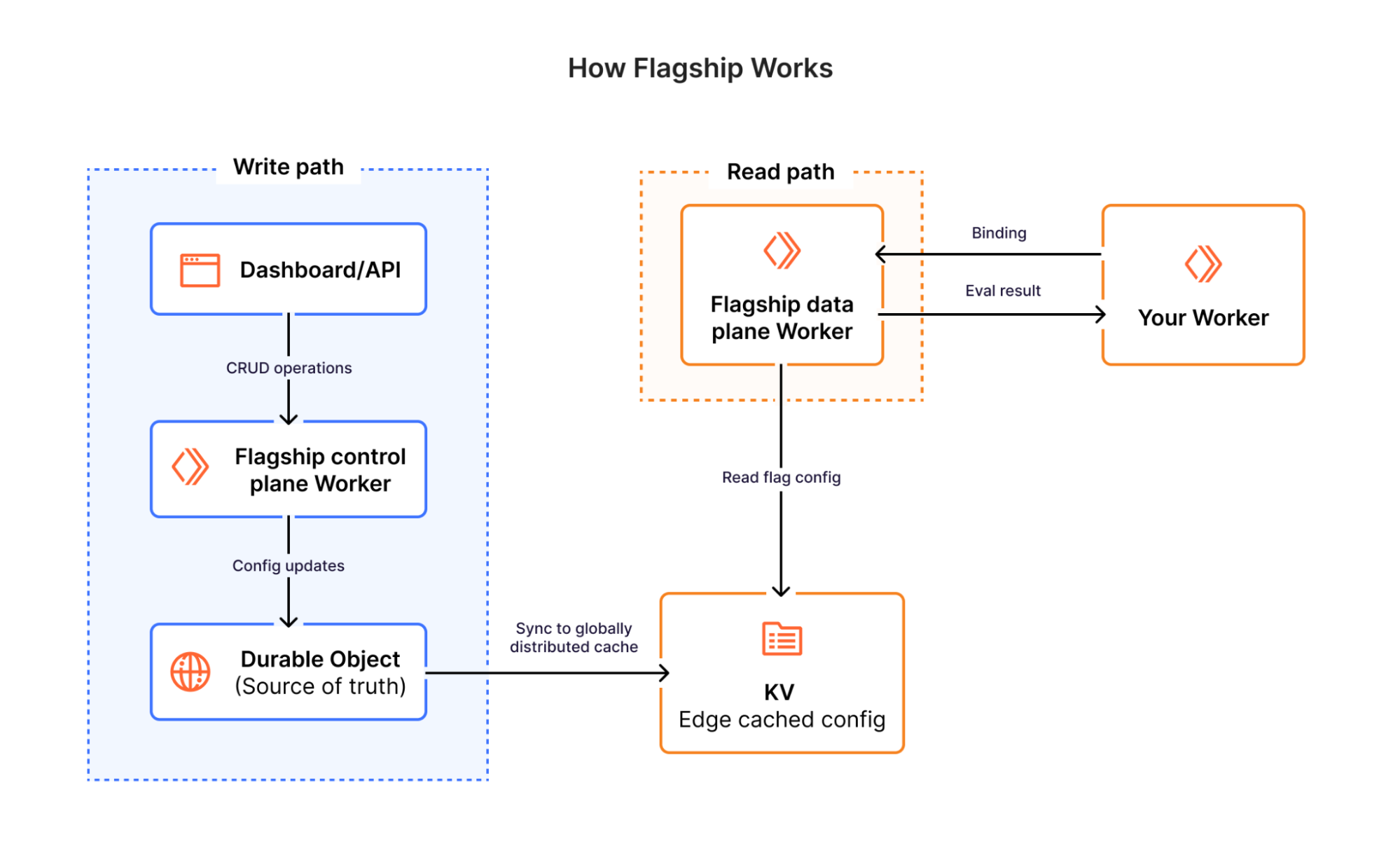

- Evaluation Engine: Utilizes Cloudflare Workers runtime to execute flag logic at the edge, bypassing the need for external API calls to a centralized feature management server.

- Data Consistency: Employs Cloudflare KV for high-read, low-latency flag configuration distribution and Durable Objects for strong consistency in stateful flag scenarios (e.g., sticky sessions for AI model variants).

- Integration: Exposes a lightweight API for Workers, enabling developers to wrap AI model calls with conditional logic based on flag state without blocking the event loop.

- Caching: Implements a multi-tier caching strategy in which flag configurations are pushed to edge nodes, so flag reads during the request lifecycle avoid a round trip to a central server.
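
The evaluation and caching bullets above describe flag reads served at the edge. Below is a minimal sketch of that pattern, assuming a per-isolate in-memory cache with a TTL in front of a replicated key-value store.

`FlagStore`, `CachedFlagClient`, the `flag:` key prefix, and the `"true"` string encoding are all hypothetical. The interface only mirrors the shape of Workers KV's `get(key)`, so the code runs outside the Workers runtime.

```typescript
// Minimal store interface; Workers KV's get(key) has a compatible shape,
// but nothing here depends on the Workers runtime.
interface FlagStore {
  get(key: string): Promise<string | null>;
}

interface CacheEntry {
  raw: string | null; // null means "flag not found in the store"
  expiresAt: number;  // epoch milliseconds
}

// Two tiers: an in-memory map (lives as long as the isolate) in front of
// a replicated store. Hypothetical class, not Flagship's API.
class CachedFlagClient {
  private readonly cache = new Map<string, CacheEntry>();
  private readonly store: FlagStore;
  private readonly ttlMs: number;
  private readonly now: () => number;

  constructor(store: FlagStore, ttlMs: number, now: () => number = Date.now) {
    this.store = store;
    this.ttlMs = ttlMs;
    this.now = now;
  }

  // The default is applied at read time, so callers with different defaults
  // never see each other's fallback values through the cache.
  async isEnabled(key: string, defaultValue = false): Promise<boolean> {
    const raw = await this.read(key);
    return raw === null ? defaultValue : raw === "true";
  }

  private async read(key: string): Promise<string | null> {
    const hit = this.cache.get(key);
    if (hit !== undefined && hit.expiresAt > this.now()) {
      return hit.raw; // tier 1: no I/O
    }
    const raw = await this.store.get(`flag:${key}`); // tier 2: edge store
    this.cache.set(key, { raw, expiresAt: this.now() + this.ttlMs });
    return raw;
  }
}
```

Inside a Worker handler, a call such as `(await flags.isEnabled("new-model")) ? "model-b" : "model-a"` would select the model variant before invoking inference. Here `flags` and both model names are placeholders.

The TTL trades freshness for fewer store reads: a flag change becomes visible to a given isolate only after its cached entry expires.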

🔮 Future Implications (AI analysis grounded in cited sources)

Cloudflare will likely integrate Flagship with its AI Gateway to enable automated model routing.

By combining feature flags with AI Gateway metrics, Cloudflare can dynamically route traffic to different LLM providers based on real-time performance or cost-efficiency.
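
This routing is a prediction, not a shipped feature. The sketch below shows roughly what metric-driven routing could look like, assuming per-provider latency, cost, and error-rate figures are available. The `ProviderStats` fields, the weights, and the provider names are hypothetical.

The router scores each healthy provider and picks the lowest score.

```typescript
// Hypothetical per-provider metrics, e.g. aggregated from gateway logs.
interface ProviderStats {
  name: string;
  p50LatencyMs: number;
  costPerMillionTokens: number; // USD
  errorRate: number;            // fraction in [0, 1]
  healthy: boolean;
}

// Relative importance of each dimension; values are illustrative.
interface RoutingWeights {
  latency: number;
  cost: number;
  errors: number;
}

// Returns the name of the lowest-scoring healthy provider, or undefined if none.
function pickProvider(stats: ProviderStats[], w: RoutingWeights): string | undefined {
  const candidates = stats.filter((s) => s.healthy);
  if (candidates.length === 0) return undefined;
  // Normalize latency and cost by the max across candidates so units do not dominate.
  const maxLatency = Math.max(...candidates.map((s) => s.p50LatencyMs)) || 1;
  const maxCost = Math.max(...candidates.map((s) => s.costPerMillionTokens)) || 1;
  let best = candidates[0];
  let bestScore = Infinity;
  for (const s of candidates) {
    const score =
      (w.latency * s.p50LatencyMs) / maxLatency +
      (w.cost * s.costPerMillionTokens) / maxCost +
      w.errors * s.errorRate;
    if (score < bestScore) {
      best = s;
      bestScore = score;
    }
  }
  return best.name;
}
```

A feature flag could hold the weights, letting operators shift the balance between latency and cost without redeploying the router.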

Flagship will become a primary driver for Cloudflare's 'Serverless AI' adoption.

Lowering the barrier to testing and deploying AI model variations encourages developers to experiment more frequently within the Cloudflare ecosystem.

⏳ Timeline

2023-09

Cloudflare launches AI Gateway to manage and monitor AI application traffic.

2024-05

Cloudflare expands Workers AI to support more models and global inference.

2026-04

Cloudflare officially launches Flagship, integrating feature management into the edge network.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: Cloudflare Blog ↗