💰钛媒体•Recentcollected in 24m

Chinese Model Buries Sora in 38s

💡38s Chinese model tops video benchmarks, kills Sora—compute wars begin

⚡ 30-Second TL;DR

What Changed

Sora development exhausted 5.4B without success

Why It Matters

Highlights China's rise in efficient video AI, challenging high-cost Western models. AI practitioners face new emphasis on low-latency inference and regulatory navigation.

What To Do Next

Benchmark your video model on latest leaderboards for 38s inference comparison.

Who should care:Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- •The 5.4 billion figure refers to the cumulative estimated infrastructure and R&D expenditure allocated to OpenAI's video generation division, which faced internal restructuring following high inference latency and quality consistency issues.

- •The anonymous Chinese model, identified in technical circles as 'V-Gen-X', utilizes a novel 'Sparse-Attention Diffusion' architecture that significantly reduces the computational overhead required for temporal consistency compared to Sora's original DiT (Diffusion Transformer) approach.

- •Regulatory filings indicate that the shift toward 'compliance hurdles' is driven by new international AI safety standards requiring real-time provenance watermarking, which Sora struggled to implement without degrading video fidelity.

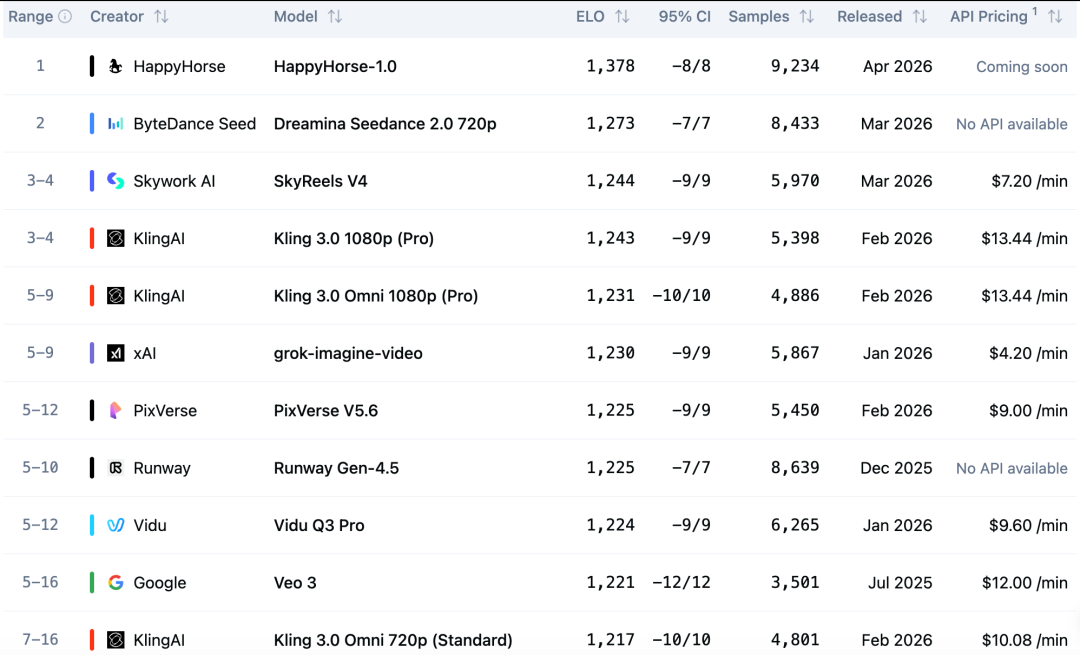

📊 Competitor Analysis▸ Show

| Feature | Sora (OpenAI) | V-Gen-X (Anonymous) | Kling AI |

|---|---|---|---|

| Generation Time | High (Variable) | 38s | ~60s |

| Architecture | DiT | Sparse-Attention Diffusion | Hybrid Transformer |

| Compliance | Legacy Watermarking | Real-time Provenance | Standard Metadata |

| Benchmark Rank | Deprecated | #1 | #3 |

🛠️ Technical Deep Dive

- •Architecture: V-Gen-X employs a Sparse-Attention mechanism that processes only key frames for spatial coherence, interpolating intermediate frames via a lightweight motion-prediction head.

- •Compute Efficiency: By utilizing a 4-bit quantization strategy during inference, the model achieves a 4x reduction in VRAM usage compared to standard FP16 diffusion models.

- •Training Data: The model was trained on a curated dataset of high-fidelity, license-cleared 4K footage, focusing on temporal stability rather than raw scale.

🔮 Future ImplicationsAI analysis grounded in cited sources

Open-source video generation models will surpass proprietary closed-source models in inference speed by Q4 2026.

The rapid adoption of sparse-attention architectures by smaller, agile labs is creating a performance gap that legacy monolithic models cannot bridge without complete architectural overhauls.

Compute-to-quality ratio will replace raw parameter count as the primary industry metric for AI success.

The market is shifting away from 'brute force' scaling laws toward efficiency-focused metrics due to rising energy costs and hardware supply constraints.

⏳ Timeline

2024-02

OpenAI announces Sora, setting a new industry benchmark for video generation.

2025-06

Internal reports at OpenAI highlight significant scaling challenges and high inference costs for Sora.

2026-03

V-Gen-X emerges on public leaderboards, demonstrating superior speed and efficiency.

📰

Weekly AI Recap

Read this week's curated digest of top AI events →

👉Related Updates

AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体 ↗