💰 钛媒体 (TMTPost)

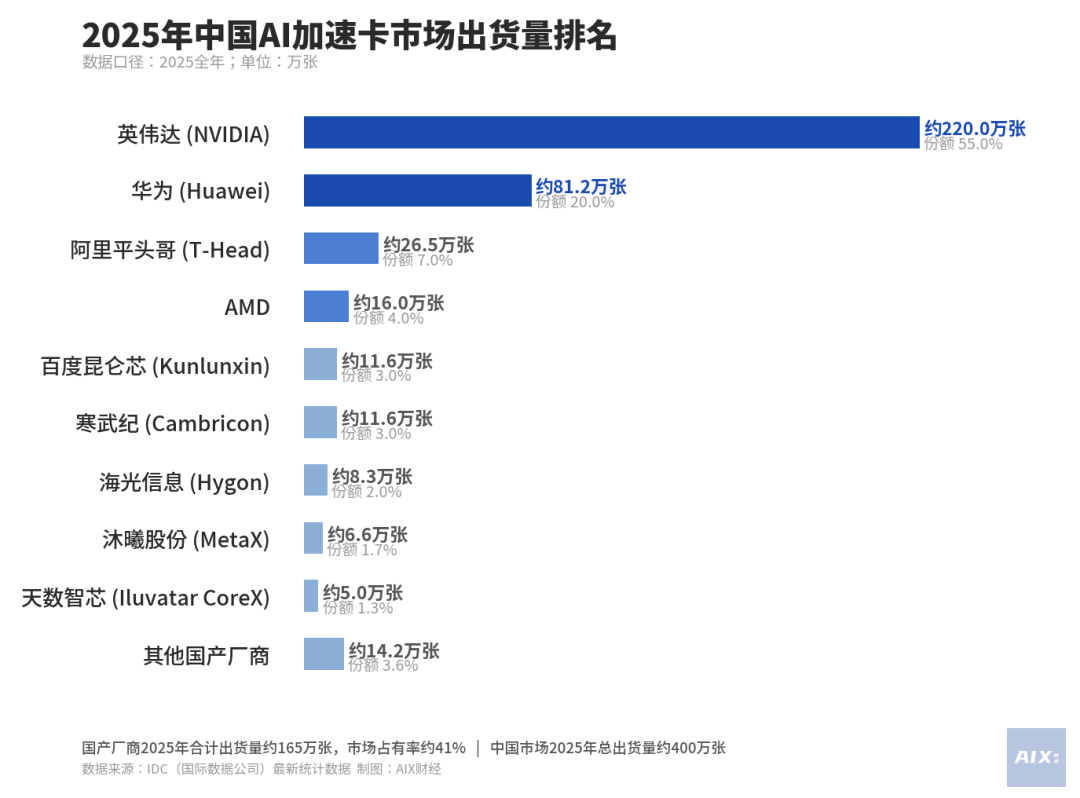

Chinese AI Chips Rise Against Nvidia

💡 China's three AI chip factions are splitting Nvidia's market share, a key development for hardware diversification.

⚡ 30-Second TL;DR

What Changed

Three major Chinese AI chip factions are gaining traction against Nvidia.

Why It Matters

Intensifies global AI chip competition, potentially cutting costs and easing supply chain risks for AI deployments that do not rely on US suppliers.

What To Do Next

Benchmark chips from China's top three AI factions versus Nvidia H100 for inference efficiency.
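A minimal sketch of what such a benchmark could look like, assuming each chip's model call can be wrapped in a plain Python callable. The function name `benchmark_inference`, the `run_batch` callable, and the 400 W power figure are hypothetical placeholders, not part of any vendor toolkit; board power would have to be measured separately on each device.

```python
import statistics
import time
from typing import Callable, Dict, Optional


def benchmark_inference(
    run_batch: Callable[[], None],
    batch_size: int,
    warmup: int = 3,
    iters: int = 20,
    board_power_watts: Optional[float] = None,
) -> Dict[str, float]:
    """Time a device-agnostic inference callable and report efficiency metrics."""
    # Warm-up runs absorb one-time costs such as kernel compilation.
    for _ in range(warmup):
        run_batch()

    latencies = []
    for _ in range(iters):
        start = time.perf_counter()
        run_batch()  # must block until the device has finished the batch
        latencies.append(time.perf_counter() - start)

    median_s = statistics.median(latencies)
    result = {
        "median_latency_ms": median_s * 1e3,
        "samples_per_s": batch_size / median_s,
    }
    # Energy efficiency needs a separately measured power figure (assumption).
    if board_power_watts is not None:
        result["samples_per_joule"] = result["samples_per_s"] / board_power_watts
    return result


if __name__ == "__main__":
    # Stand-in workload; replace with a real model call on each chip.
    def fake_batch() -> None:
        time.sleep(0.002)

    print(benchmark_inference(fake_batch, batch_size=8, board_power_watts=400.0))
```

Running the same callable-based harness on each accelerator keeps the comparison on equal footing. Most accelerator runtimes execute asynchronously, so `run_batch` should include whatever synchronization call the vendor stack provides.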

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The three factions are categorized by their origin: tech giants (e.g., Huawei/Ascend), specialized AI chip startups (e.g., Biren Technology, Moore Threads), and state-backed research institutes, each targeting different segments of the training and inference market.

- US export controls on high-end GPUs like the H100 and A100 have acted as a catalyst, forcing Chinese cloud providers and enterprises to adopt domestic silicon to ensure supply chain continuity.

- Software ecosystem maturity remains the primary bottleneck; while hardware performance is closing the gap, Chinese manufacturers are heavily investing in proprietary software stacks to improve compatibility with PyTorch and TensorFlow.

📊 Competitor Analysis

| Feature | Nvidia H20 (China-specific) | Huawei Ascend 910B | Biren BR100 |
|---|---|---|---|
| Architecture | Hopper (cut-down) | Da Vinci | Bi Liren (壁立仞) |
| FP16/BF16 TFLOPS | ~148 | ~320 | ~1,024 (BF16) |
| Memory Bandwidth | 4.0 TB/s | 1.2 TB/s | 2 TB/s |
| Software Stack | CUDA | CANN | BIRENSUPA |

🛠️ Technical Deep Dive

- Huawei Ascend 910B uses a 7nm process node and is optimized for large-scale cluster training, supporting a high-speed interconnect (HCCS) to rival NVLink.

- Biren Technology's BR100 employs a chiplet architecture, which allows higher yields and better scalability in high-performance computing (HPC) workloads.

- Most domestic chips are shifting focus from raw compute to high-bandwidth memory (HBM) integration to mitigate the memory wall bottleneck in LLM training.

- Huawei's CANN (Compute Architecture for Neural Networks) serves as the direct alternative to CUDA, providing a library of operators specifically tuned for Ascend hardware.
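The memory wall point above can be made concrete with the roofline model, a standard way to estimate whether a workload is limited by compute or by memory bandwidth. The sketch below is illustrative only: the function names are hypothetical, and the example figures (320 TFLOPS, 1.2 TB/s) are the approximate Ascend 910B values quoted in the comparison table, not measured results.

```python
def ridge_point(peak_tflops: float, mem_bw_tbps: float) -> float:
    """Arithmetic intensity (FLOPs per byte) above which a chip is compute-bound."""
    return peak_tflops / mem_bw_tbps


def attainable_tflops(peak_tflops: float, mem_bw_tbps: float, intensity: float) -> float:
    """Roofline bound: the lower of peak compute and bandwidth * intensity."""
    # TB/s * FLOPs/byte = TFLOP/s, so both arguments to min() share a unit.
    return min(peak_tflops, mem_bw_tbps * intensity)


if __name__ == "__main__":
    peak, bw = 320.0, 1.2  # approximate table figures, used as an assumption
    print(f"ridge point: {ridge_point(peak, bw):.1f} FLOPs/byte")
    for intensity in (1.0, 50.0, 500.0):
        bound = attainable_tflops(peak, bw, intensity)
        print(f"intensity {intensity:>5.1f} FLOPs/byte -> at most {bound:.1f} TFLOPS")
```

Under these assumed figures, a kernel performing about 1 FLOP per byte moved could reach at most 1.2 TFLOPS regardless of the 320 TFLOPS peak, which is why added memory bandwidth can matter more than added compute for bandwidth-bound workloads.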

🔮 Future Implications

AI analysis grounded in cited sources

Domestic chips will account for more than 40% of China's AI training chip market by 2027.

Increasingly stringent US export restrictions are compelling domestic firms to prioritize local procurement regardless of initial performance gaps.

Nvidia will lose its monopoly on the Chinese LLM training market.

The rapid maturation of domestic software stacks like CANN is lowering the switching costs for Chinese AI labs.

⏳ Timeline

2022-08

US government imposes initial restrictions on the export of high-end AI chips to China.

2023-10

US expands export controls, effectively banning the sale of Nvidia's A800 and H800 chips to China.

2024-03

Huawei reports significant scaling of Ascend 910B production to meet domestic demand.

2025-06

Major Chinese cloud providers announce large-scale migration of inference workloads to domestic chip architectures.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体 (TMTPost)