💰钛媒体 (TMTPost)

Can't Afford Tokens in the AI Era

💡AI token costs are tiering users the way early internet access pricing did; budget your usage now.

⚡ 30-Second TL;DR

What Changed

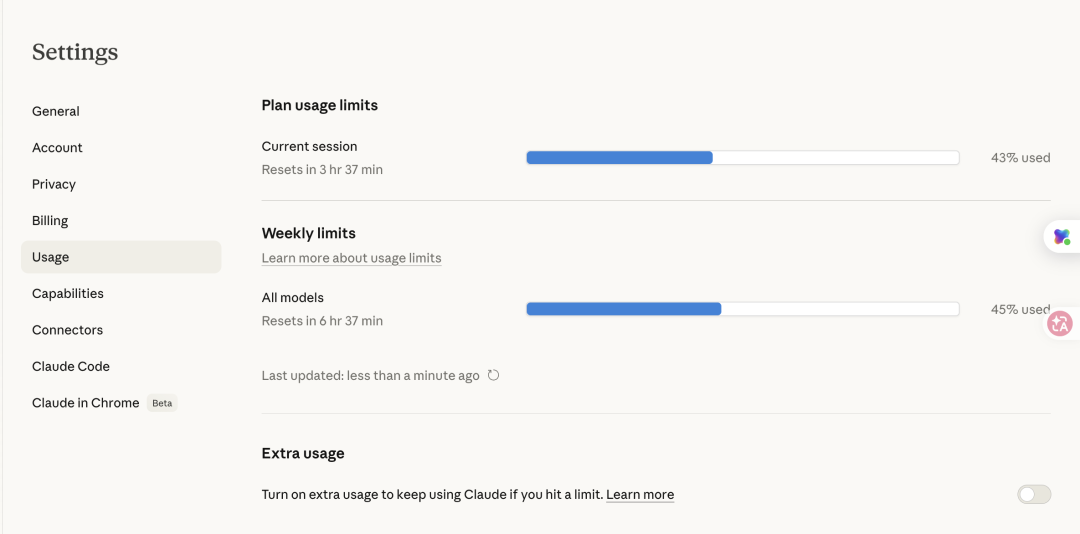

High token usage leads to unexpected overage fees.

Why It Matters

It exposes accessibility barriers in AI and widens the gap between high-volume and casual users.

What To Do Next

Track your token spend in the OpenAI dashboard to avoid overages.
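Alongside the dashboard, a running tally inside your own code can flag overages before a bill arrives. The following is a minimal sketch, not a provider tool: it multiplies token counts by per-million-token prices and compares the total to a monthly budget. The prices, the budget, and the `TokenSpendTracker` class are hypothetical; with OpenAI's Chat Completions API, the per-request counts are reported as `response.usage.prompt_tokens` and `response.usage.completion_tokens`.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per 1M tokens; replace them with the current
# rates from your provider's pricing page before relying on the numbers.
PRICE_PER_MILLION_USD = {"input": 2.50, "output": 10.00}


@dataclass
class TokenSpendTracker:
    """Running estimate of API spend against a monthly budget (illustrative only)."""

    monthly_budget_usd: float
    warn_fraction: float = 0.8  # report "warning" once 80% of the budget is used
    spent_usd: float = 0.0

    def record(self, input_tokens: int, output_tokens: int) -> float:
        """Add one request's token counts and return its estimated cost in USD."""
        cost = (
            input_tokens * PRICE_PER_MILLION_USD["input"]
            + output_tokens * PRICE_PER_MILLION_USD["output"]
        ) / 1_000_000
        self.spent_usd += cost
        return cost

    def status(self) -> str:
        """Classify cumulative spend as "ok", "warning", or "over budget"."""
        if self.spent_usd >= self.monthly_budget_usd:
            return "over budget"
        if self.spent_usd >= self.warn_fraction * self.monthly_budget_usd:
            return "warning"
        return "ok"


if __name__ == "__main__":
    tracker = TokenSpendTracker(monthly_budget_usd=20.0)
    # With an OpenAI chat completion, these counts would come from
    # response.usage.prompt_tokens and response.usage.completion_tokens.
    print(tracker.record(input_tokens=1_200_000, output_tokens=400_000))  # 7.0
    print(tracker.status())  # ok
```

Because output tokens are priced higher than input tokens in this hypothetical table, long generations move the total faster than long prompts; a real tracker would load current rates per model rather than hard-coding them.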

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The 'token economy' has shifted from simple input/output billing to complex multimodal pricing, where image generation and long-context retrieval incur significantly higher hidden costs than standard text processing.

- Enterprises are increasingly adopting 'token-budgeting' software to prevent runaway API costs, mirroring the cloud-spend management (FinOps) tools that emerged during the early AWS adoption era.

- The industry is pivoting toward 'token-efficient' architectures and inference techniques, such as Mixture-of-Experts (MoE) and speculative decoding, that cut the compute or latency cost of each generated token for high-volume users.
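MoE lowers compute per token because a router sends each token through only a few experts, so the parameters a token actually touches are a small slice of the whole model. The back-of-envelope sketch here makes that concrete. It assumes the common approximation of about 2 FLOPs per active parameter per token in a forward pass, ignores attention's dependence on context length and router overhead, and uses a hypothetical layout; the function names are illustrative, not from any library.

```python
def moe_param_counts(shared: int, per_expert: int, num_experts: int, top_k: int) -> tuple[int, int]:
    """Return (total, active-per-token) parameter counts for a simplified MoE model.

    shared:      parameters every token passes through (attention, embeddings, router)
    per_expert:  parameters in one expert, summed over all MoE layers
    num_experts: experts available to the router
    top_k:       experts the router activates for each token
    """
    if not 1 <= top_k <= num_experts:
        raise ValueError("top_k must be between 1 and num_experts")
    total = shared + num_experts * per_expert
    active = shared + top_k * per_expert
    return total, active


def forward_flops_per_token(active_params: int) -> int:
    """Rule-of-thumb forward-pass cost: about 2 FLOPs (multiply + add) per active parameter."""
    return 2 * active_params


if __name__ == "__main__":
    # Hypothetical layout: 10B shared params, 64 experts of 2B each, top-2 routing.
    total, active = moe_param_counts(
        shared=10_000_000_000, per_expert=2_000_000_000, num_experts=64, top_k=2
    )
    print(total, active)  # 138000000000 14000000000
    print(f"{active / total:.1%} of parameters active per token")  # 10.1% ...
    print(forward_flops_per_token(active))  # 28000000000
```

Under these assumptions the MoE model does roughly a tenth of the per-token compute of a dense model with the same total parameter count, although it still needs memory for all of its parameters. Speculative decoding works differently (a small draft model proposes tokens that the large model verifies in parallel) and is not modeled here.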

🔮 Future Implications

AI analysis grounded in cited sources.

Token-based pricing will be largely replaced by flat-rate subscription models for enterprise-grade AI services by 2027.

The need for predictable budgets in corporate procurement is pushing providers away from volatile, usage-based billing.

Local edge-AI inference will capture 30% of the consumer market for routine tasks, letting users bypass cloud token costs.

As on-device NPU performance improves, users will prefer running models locally to eliminate recurring per-token fees for non-sensitive tasks.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体