ByteDance Seedance 2.0 Ignites Short-Sell Rumors

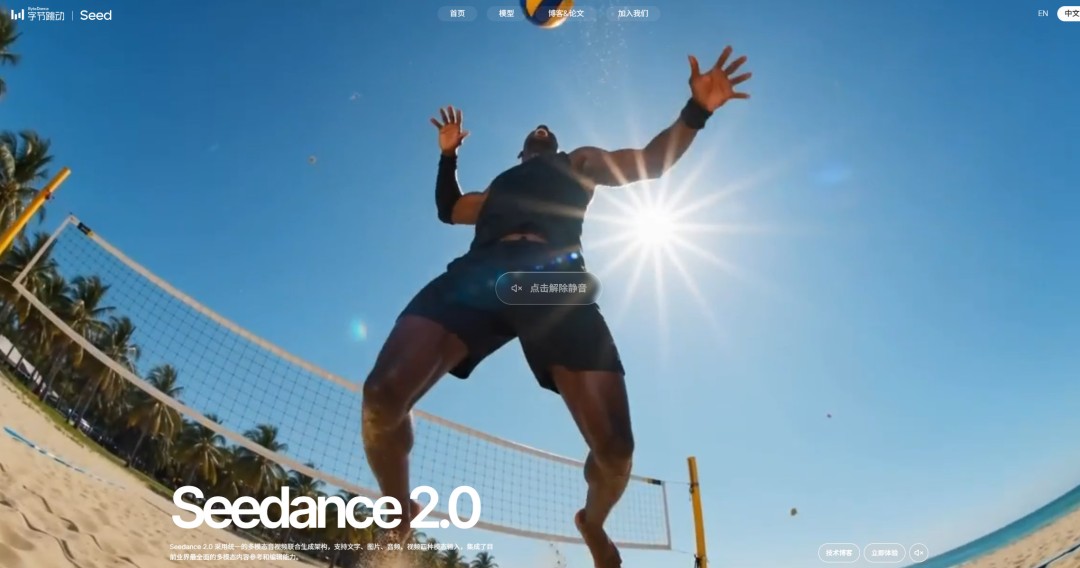

💡ByteDance denies short-selling rumors surrounding its viral Seedance 2.0 video model, a key signal for tracking Chinese AI momentum

⚡ 30-Second TL;DR

What Changed

ByteDance launched its Seedance 2.0 video generation model before Spring Festival.

Why It Matters

Reinforces ByteDance's AI leadership amid market skepticism, potentially stabilizing investor sentiment around Chinese tech AI firms.

What To Do Next

Test Seedance 2.0 for video generation to benchmark against Sora on creativity and speed.

🧠 Deep Insight

Web-grounded analysis with 4 cited sources.

🔑 Enhanced Key Takeaways

- Seedance 2.0 was officially launched on February 12, 2026, as ByteDance's next-generation video creation model with a unified multimodal audio-video joint generation architecture[1]

- The model supports four input modalities (text, image, audio, video) and allows simultaneous input of up to 9 images, 3 video clips, and 3 audio clips with natural language instructions[1]

- Within days of launch, Seedance 2.0 generated viral content including reimagined TV show characters and deepfake-style videos of celebrities, triggering immediate copyright infringement concerns from major studios[2]

- Disney sent a cease-and-desist letter on February 13, 2026, and Paramount followed on February 16, 2026, with both alleging unauthorized use of copyrighted works and intellectual property[2]

- ByteDance announced on February 16, 2026, that it respects intellectual property rights and would strengthen safeguards to prevent IP violations, following industry backlash[2]

📊 Competitor Analysis

| Feature | Seedance 2.0 | Comparable Competitors |
|---|---|---|
| Input Modalities | Text, image, audio, video | Text-to-video models typically support text and image |
| Simultaneous Inputs | Up to 9 images, 3 videos, 3 audio clips | Most competitors support fewer simultaneous inputs |
| Output Length | 15-second high-quality multi-shot sequences | Industry standard varies (5-60 seconds) |
| Audio-Video Integration | Joint generation with dual-channel audio | Separate audio-video generation common in competitors |
| Editing Capabilities | Targeted clip, character, action, storyline modifications | Advanced editing features less common in competitors |
| Video Extension | Continuous shot generation from prompts | Feature availability varies across competitors |

🛠️ Technical Deep Dive

- Architecture: Unified multimodal audio-video joint generation architecture enabling synchronized audio-visual content creation
- Input Processing: Supports simultaneous processing of up to 9 images, 3 video clips, 3 audio clips, plus natural language instructions
- Reference Capabilities: Can reference composition, motion, camera movement, visual effects, and audio elements from input assets
- Motion Stability: Significant improvements in motion stability and instruction-following performance compared to previous versions
- Output Specifications: Generates 15-second high-quality multi-shot sequences with dual-channel audio for realistic audio-visual experiences
- Editing Functions: Supports targeted modifications to specified clips, characters, actions, and storylines; includes video extension functionality for continuous shot generation
- Performance Metrics: Leads across multiple dimensions in SeedVideoBench-2.0 evaluation for text-to-video, image-to-video, and multimodal tasks
- Visual Quality: Accurately captures high-tension large-scale action alongside subtle micro-expressions with professional-grade camera movement and narrative pacing control
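The reported input limits (up to 9 images, 3 video clips, and 3 audio clips alongside a natural language instruction) can be illustrated with a small pre-flight check. This is only a sketch: `ReferenceBundle` and `validate_bundle` are hypothetical names invented for illustration and do not correspond to any published ByteDance or CapCut API; only the three numeric limits come from the article.

```python
from dataclasses import dataclass, field

# Limits as reported in the article; everything else here is hypothetical.
MAX_IMAGES = 9
MAX_VIDEOS = 3
MAX_AUDIO = 3


@dataclass
class ReferenceBundle:
    """Hypothetical container for one multimodal request (not a real API type)."""
    prompt: str
    images: list[str] = field(default_factory=list)
    videos: list[str] = field(default_factory=list)
    audio: list[str] = field(default_factory=list)


def validate_bundle(bundle: ReferenceBundle) -> list[str]:
    """Return human-readable problems; an empty list means within the limits."""
    problems = []
    for name, items, limit in (
        ("images", bundle.images, MAX_IMAGES),
        ("videos", bundle.videos, MAX_VIDEOS),
        ("audio", bundle.audio, MAX_AUDIO),
    ):
        if len(items) > limit:
            problems.append(f"{name}: {len(items)} exceeds limit of {limit}")
    return problems
```

A check like this would let a client reject an oversized request locally before uploading any assets, assuming the service enforces the same limits.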

🔮 Future Implications

AI analysis grounded in cited sources

Seedance 2.0's rapid viral adoption and sophisticated content generation capabilities signal a significant acceleration in AI-driven video creation, potentially disrupting traditional Hollywood production workflows. However, the immediate copyright infringement backlash from Disney, Paramount, and the Motion Picture Association indicates that regulatory and legal frameworks for AI-generated content remain underdeveloped. The model's availability through CapCut (ByteDance's global video editor) suggests potential widespread adoption, which could intensify industry pressure for AI training transparency and licensing requirements.

Screenwriter Rhett Reese's public concern that "one person [will] be able to sit at a computer and create a movie indistinguishable from what Hollywood now releases" reflects broader industry anxiety about AI's impact on creative employment and content authenticity. ByteDance's February 16 commitment to strengthen IP safeguards may set a precedent for how AI companies address copyright concerns, though enforcement mechanisms remain unclear. The short-selling rumors referenced in the headline may reflect market uncertainty about ByteDance's long-term valuation amid regulatory scrutiny and IP litigation risks.

📎 Sources (4)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

AI-curated news aggregator. All content rights belong to original publishers.

Original source: cnBeta (Full RSS) ↗