☁️ AWS Machine Learning Blog

Bedrock RFT with OpenAI-Compatible APIs Walkthrough

💡 Master RFT on Bedrock with OpenAI APIs: full technical guide for devs.

⚡ 30-Second TL;DR

What Changed

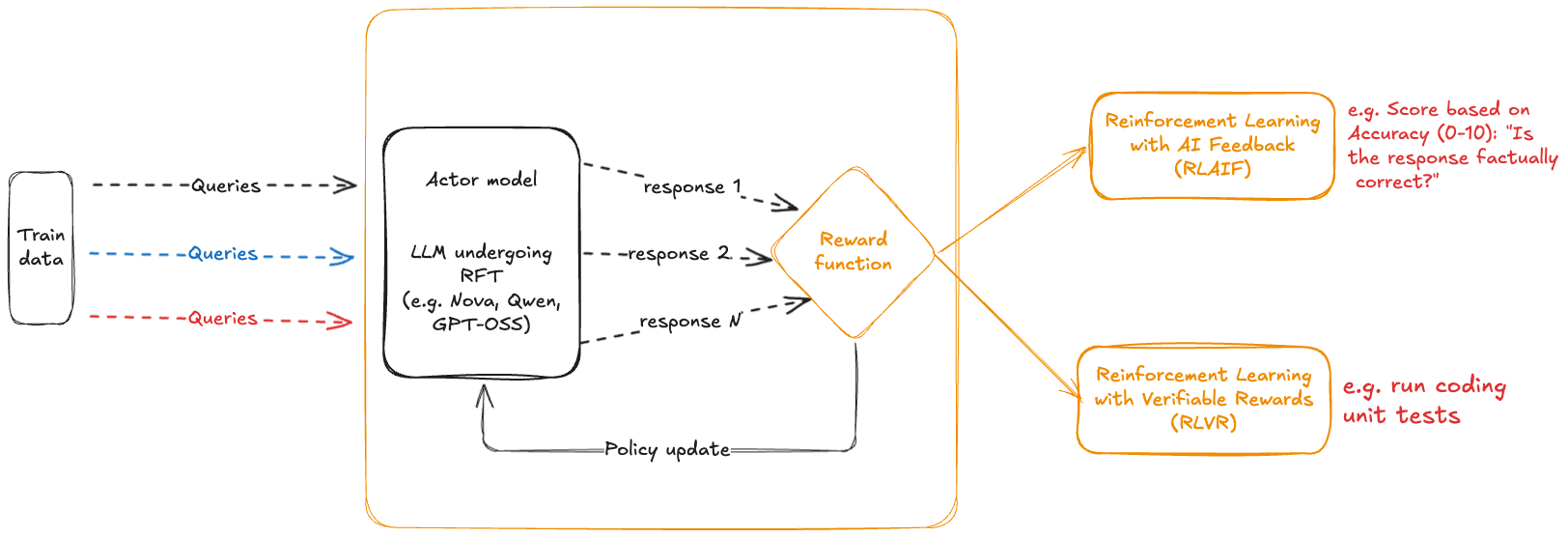

End-to-end RFT workflow on Bedrock with OpenAI-compatible APIs

Why It Matters

Lets developers run reinforcement fine-tuning (RFT) on Bedrock through familiar OpenAI-style APIs, so fine-tuning code written against OpenAI carries over to AWS with less rework.

What To Do Next

Deploy a Lambda-based reward function on Bedrock to kick off your first RFT job.
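As a rough starting point, a Lambda reward function could look like the sketch below. The event shape (a `samples` list with `id`, `completion`, and `reference` fields) and the returned `rewards` list are assumptions made for illustration; the real request and response contract comes from the Bedrock RFT documentation and should be checked before deploying.

```python
import json


def score_completion(completion: str, reference: str) -> float:
    """Return the fraction of reference tokens found in the completion (0.0 to 1.0)."""
    predicted = set(completion.strip().lower().split())
    expected = set(reference.strip().lower().split())
    if not expected:
        return 0.0
    return len(predicted & expected) / len(expected)


def lambda_handler(event, context):
    # Hypothetical event shape: {"samples": [{"id": ..., "completion": ..., "reference": ...}]}
    rewards = []
    for sample in event.get("samples", []):
        rewards.append(
            {
                "id": sample["id"],
                "reward": score_completion(sample["completion"], sample["reference"]),
            }
        )
    # Hypothetical response shape: one reward per sample, keyed by sample id.
    return {"statusCode": 200, "body": json.dumps({"rewards": rewards})}
```

The token-overlap score is only a stand-in; a real reward would encode whatever task-specific check matters, such as exact-match grading or a schema validation.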

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The integration leverages the OpenAI-compatible API layer to allow developers to migrate existing fine-tuning pipelines to Bedrock with minimal code changes, effectively abstracting the underlying AWS infrastructure.

- The Lambda-based reward function architecture enables custom, domain-specific alignment criteria beyond standard RLHF, allowing for real-time evaluation of model outputs against business-specific KPIs during the training loop.

- This workflow supports parameter-efficient fine-tuning (PEFT) techniques, significantly reducing the compute overhead and time-to-market compared to full-parameter fine-tuning for large-scale models.
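One way to picture the "minimal code changes" point is to keep provider-specific values in configuration, so the request-building code stays the same. The sketch below uses only the standard library. The `/fine_tuning/jobs` path follows the OpenAI fine-tuning API; the Bedrock base URL and both model IDs are placeholders, and whether Bedrock exposes the same path is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    base_url: str
    model: str


# Placeholder values: the Bedrock URL and both model IDs are hypothetical.
PROVIDERS = {
    "openai": Provider("https://api.openai.com/v1", "example-openai-model"),
    "bedrock": Provider("https://bedrock.example.invalid/v1", "example-bedrock-model"),
}


def fine_tuning_request(provider_name: str, training_file: str) -> dict:
    """Build the URL and JSON body for an OpenAI-style fine-tuning job request."""
    provider = PROVIDERS[provider_name]
    return {
        "url": f"{provider.base_url.rstrip('/')}/fine_tuning/jobs",
        "body": {"model": provider.model, "training_file": training_file},
    }
```

Under these assumptions, switching providers is a one-word change at the call site, for example `fine_tuning_request("bedrock", "file-123")` instead of `fine_tuning_request("openai", "file-123")`.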

📊 Competitor Analysis

| Feature | Amazon Bedrock RFT | Google Vertex AI Tuning | Azure OpenAI Service Fine-Tuning |
|---|---|---|---|
| API Compatibility | OpenAI-compatible | Native/OpenAI-compatible | Native/OpenAI-compatible |
| Reward Function | Custom Lambda-based | Vertex AI Pipelines/Custom | Limited/Managed RLHF |
| Model Support | Multi-model (Titan, Claude, etc.) | Gemini/PaLM | GPT-4o/GPT-4/GPT-3.5 |

🛠️ Technical Deep Dive

- Utilizes the Bedrock Model Customization API to orchestrate the RFT job lifecycle.

- Reward function integration relies on an asynchronous invocation pattern where the Bedrock training job triggers the Lambda function via an IAM-authenticated endpoint.

- Supports standard OpenAI-formatted JSONL datasets for training, mapping input/output pairs to the specific model's prompt template requirements.

- Infrastructure utilizes Amazon S3 for secure dataset staging and model artifact storage, with CloudWatch integration for real-time monitoring of loss curves and reward metrics.
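The dataset step above can be sketched as a small validator that writes chat-style records as JSONL, one JSON object per line. The `messages` list with `role` and `content` keys follows the OpenAI chat format; the extra `reference_answer` field in the usage note is a hypothetical name for whatever ground truth a reward function would read, not a confirmed Bedrock field.

```python
import json

ALLOWED_ROLES = {"system", "user", "assistant"}


def to_jsonl(records: list[dict]) -> str:
    """Validate chat-style records and serialize them as JSONL text."""
    lines = []
    for index, record in enumerate(records):
        messages = record.get("messages")
        if not messages:
            raise ValueError(f"record {index} has no messages")
        for message in messages:
            if message.get("role") not in ALLOWED_ROLES:
                raise ValueError(f"record {index} has an unknown role")
            if not isinstance(message.get("content"), str):
                raise ValueError(f"record {index} has non-string content")
        # One compact JSON object per line, keeping non-ASCII text readable.
        lines.append(json.dumps(record, ensure_ascii=False))
    return "".join(line + "\n" for line in lines)
```

A record such as `{"messages": [{"role": "user", "content": "Capital of France?"}], "reference_answer": "Paris"}` passes validation and becomes a single line of output, ready to be uploaded to the S3 staging location.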

🔮 Future Implications

AI analysis grounded in cited sources

Standardization of fine-tuning APIs will accelerate multi-cloud LLM strategies.

By adopting OpenAI-compatible APIs, AWS reduces vendor lock-in, allowing enterprises to switch between model providers with minimal refactoring.

Automated reward modeling will become the standard for enterprise LLM alignment.

The shift toward Lambda-based, programmatic reward functions removes the bottleneck of human-in-the-loop feedback for specific business tasks.

⏳ Timeline

- 2023-09: Amazon Bedrock becomes generally available.

- 2024-05: Introduction of model customization (fine-tuning) for Amazon Titan models.

- 2025-02: AWS announces support for OpenAI-compatible APIs across Bedrock services.

- 2026-01: General availability of Reinforcement Fine-Tuning (RFT) capabilities on Bedrock.


Original source: AWS Machine Learning Blog ↗