☁️ AWS Machine Learning Blog

Bedrock Reasoning Checks Boost AI Compliance

💡 Achieve mathematically verified gen AI compliance in Bedrock, essential for enterprises

⚡ 30-Second TL;DR

What Changed

Automated Reasoning checks in Amazon Bedrock apply formal verification to gen AI outputs, replacing probabilistic validation.

Why It Matters

Enables regulated firms to deploy trustworthy gen AI with compliance guarantees, accelerating adoption in finance, healthcare, and beyond.

What To Do Next

Implement Automated Reasoning checks in Amazon Bedrock for your gen AI compliance testing.

Who should care: Enterprise & Security Teams

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Amazon Bedrock's formal verification uses automated theorem proving to mathematically verify that model outputs adhere to predefined safety and compliance constraints, ruling out 'hallucinations' within the verified scope.

- Automated reasoning lets regulated entities replace manual, sampling-based human audits of AI outputs with cryptographically signed, machine-verifiable compliance logs.

- The capability addresses the 'black box' nature of LLMs by layering deterministic guardrails on top of probabilistic models, enabling deployment in high-stakes settings such as financial risk assessment and medical diagnostics.
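
The difference between a sampling-based audit and a deterministic check can be illustrated with a toy example. The sketch below is not Bedrock code: `policy_response`, `violates`, and the input domain are hypothetical, and exhaustive enumeration over a small finite domain is used only as a stand-in for what an automated reasoning tool does symbolically with a solver.

```python
import random
from itertools import product

def policy_response(age: int, income_band: int) -> bool:
    """Hypothetical approval logic with a defect at exactly one input."""
    if age == 17 and income_band == 3:
        return True  # defect: approves a minor
    return age >= 18

def violates(age: int, income_band: int) -> bool:
    """Constraint under test: nobody under 18 may be approved."""
    return policy_response(age, income_band) and age < 18

# Assumed finite input domain: ages 0..99 and income bands 0..4 (500 cases).
DOMAIN = list(product(range(100), range(5)))

def sampled_audit(n: int, seed: int) -> list:
    """Audit n randomly chosen cases; a rare defect may go unnoticed."""
    rng = random.Random(seed)
    return [case for case in rng.sample(DOMAIN, n) if violates(*case)]

def exhaustive_check() -> list:
    """Check every case in the domain; returns all counterexamples."""
    return [case for case in DOMAIN if violates(*case)]

print("sampled audit found:", sampled_audit(20, seed=0))
print("exhaustive check found:", exhaustive_check())  # [(17, 3)]
```

A 20-case sample covers 4% of this domain, so it will usually miss the single defect, while the exhaustive check always reports it. Real policies have domains far too large to enumerate, which is why formal tools reason over constraints instead of listing cases.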

📊 Competitor Analysis

| Feature | Amazon Bedrock (Reasoning Checks) | Microsoft Azure AI (Content Safety) | Google Cloud (Vertex AI Guardrails) |
|---|---|---|---|
| Core Methodology | Formal Verification / Theorem Proving | Probabilistic Filtering / Heuristics | Probabilistic Filtering / Heuristics |
| Compliance Auditability | Mathematically Proven | Policy-based / Log-based | Policy-based / Log-based |
| Primary Focus | Deterministic Safety | Content Moderation | Content Moderation |

🛠️ Technical Deep Dive

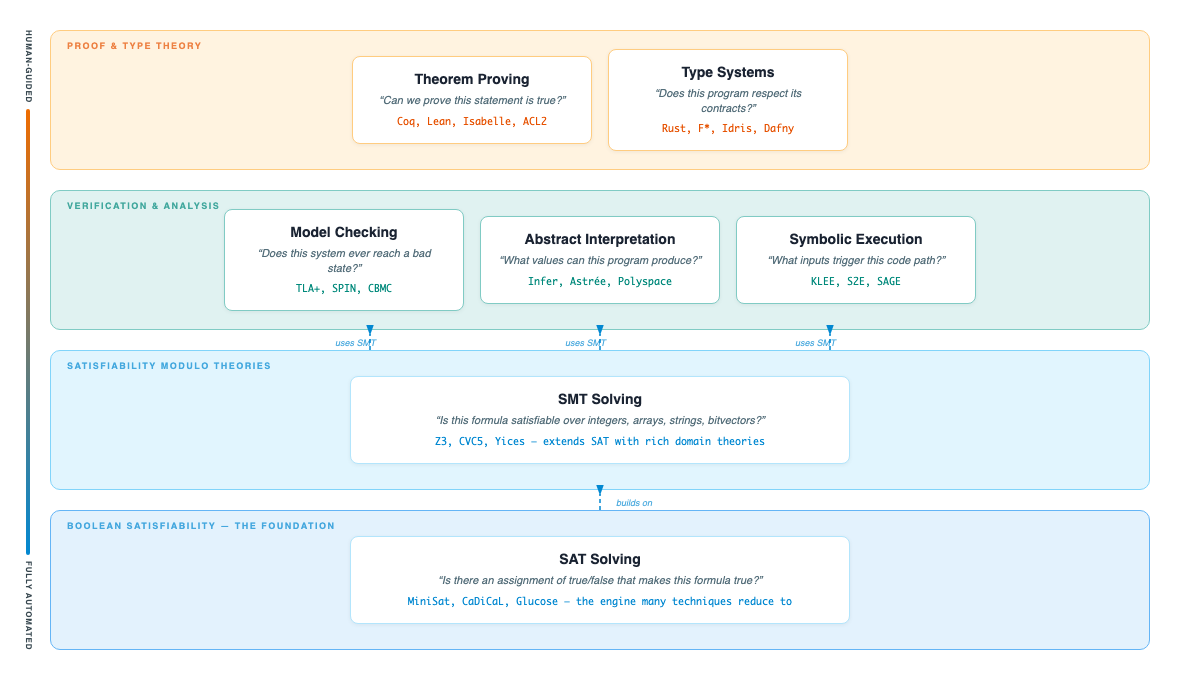

- Uses a hybrid architecture that combines Large Language Models with a symbolic reasoning engine (an Automated Theorem Prover).

- Implements 'Guardrail Verification', in which the reasoning engine checks the model's output against a formal specification (written in, e.g., TLA+ or a similar logic-based constraint language) before the final response is rendered.

- Supports 'Chain-of-Verification' (CoVe) patterns, in which the model is required to verify its own claims against a trusted knowledge base or rule set before producing output.

- Provides an API-level 'Reasoning Trace' that lets developers inspect the logic path the formal verifier took to reach a compliant output.
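
The verify-then-render flow and the reasoning trace described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the Bedrock API: `Rule`, `TraceStep`, `verify`, and the lending rules are all hypothetical, and the step that extracts facts from an LLM response is assumed to have already happened.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical types for illustration; none of these names come from Bedrock.
Facts = Dict[str, float]

@dataclass(frozen=True)
class Rule:
    name: str
    description: str
    holds: Callable[[Facts], bool]

@dataclass(frozen=True)
class TraceStep:
    rule: str
    passed: bool
    detail: str

def verify(facts: Facts, rules: List[Rule]) -> List[TraceStep]:
    """Evaluate every rule deterministically, recording one trace step per rule."""
    return [TraceStep(r.name, r.holds(facts), r.description) for r in rules]

def is_compliant(trace: List[TraceStep]) -> bool:
    """A response is compliant only if every rule passed."""
    return all(step.passed for step in trace)

# Assumed example policy: a made-up lending rule set.
RULES = [
    Rule("max_loan_to_income",
         "loan amount must not exceed 5x annual income",
         lambda f: f["loan_amount"] <= 5 * f["annual_income"]),
    Rule("min_age",
         "applicant must be at least 18",
         lambda f: f["age"] >= 18),
]

# Facts a model response is assumed to assert (extraction step not shown).
claimed = {"loan_amount": 300_000, "annual_income": 50_000, "age": 30}

trace = verify(claimed, RULES)
for step in trace:
    print(f"{'PASS' if step.passed else 'FAIL'} {step.rule}: {step.detail}")
print("compliant:", is_compliant(trace))  # False: 300,000 exceeds 5 x 50,000
```

Because each rule is a pure function of the extracted facts, the same input always yields the same trace, which is the property that separates this kind of check from probabilistic filtering. A caller would render the response only when `is_compliant(trace)` is true and otherwise surface the failing steps.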

🔮 Future Implications

AI analysis grounded in cited sources

Formal verification will become a mandatory requirement for AI procurement in the financial and healthcare sectors by 2028.

Regulators are increasingly demanding deterministic proof of safety that probabilistic testing cannot provide, forcing a shift toward formal methods.

The cost of AI compliance will decrease by 60% for enterprises adopting automated reasoning.

Automating the audit trail replaces expensive, manual human-in-the-loop compliance reviews with scalable, machine-generated verification.

⏳ Timeline

2023-04

Amazon Bedrock announced as a fully managed service for building generative AI applications.

2023-11

AWS introduces Guardrails for Amazon Bedrock to provide safety controls for generative AI applications.

2025-02

AWS expands Bedrock capabilities to include advanced reasoning models and formal verification frameworks.

2026-04

AWS formalizes 'Bedrock Reasoning Checks' as a core compliance feature for regulated industries.


Original source: AWS Machine Learning Blog ↗