☁️ AWS Machine Learning Blog

Bedrock Adds Company-Wise Memory with Neptune & Mem0

💡 Persistent memory boosts Bedrock agents for enterprise chat: the Neptune + Mem0 integration is live

⚡ 30-Second TL;DR

What Changed

Persistent company-specific memory for Bedrock AI agents

Why It Matters

Gives enterprise AI agents long-term context retention, reducing the need for custom memory solutions. Improves chatbot effectiveness in customer service scenarios such as Trend Micro's implementation.

What To Do Next

Test company-wise memory by integrating Neptune and Mem0 with your Bedrock agent via the AWS console.

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The integration uses Mem0's 'Personalized AI' layer to manage long-term user and organizational state. That state is indexed in Amazon Neptune, which allows complex relationship mapping between entities, documents, and past user preferences.

- This architecture works around the context-window limit by offloading historical state to a graph-based retrieval system, reducing how much conversation history has to be packed into the prompt on every turn.

- The solution supports multi-tenant isolation: company-specific memory stays siloed and compliant with enterprise data governance policies, while agents can still reason across sessions.
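The tenant isolation and prompt offloading described above can be illustrated with a minimal sketch. It is not the Mem0 or Neptune API: `TenantMemoryStore`, `remember`, `recall`, and `build_prompt` are hypothetical names, and a simple word-overlap score stands in for real retrieval. The point is only that memory is keyed by tenant, and that a few relevant facts, rather than the whole history, reach the prompt.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class TenantMemoryStore:
    """Toy in-memory stand-in for a per-company memory layer (hypothetical)."""

    # Maps tenant_id -> list of remembered facts for that tenant only.
    _facts: dict = field(default_factory=lambda: defaultdict(list))

    def remember(self, tenant_id: str, fact: str) -> None:
        """Store a fact under one tenant, skipping exact duplicates."""
        if fact not in self._facts[tenant_id]:
            self._facts[tenant_id].append(fact)

    def recall(self, tenant_id: str, query: str, limit: int = 3) -> list:
        """Return up to `limit` of this tenant's facts that share words with the query."""
        query_words = set(query.lower().split())
        scored = []
        for fact in self._facts.get(tenant_id, []):
            overlap = len(query_words & set(fact.lower().split()))
            if overlap:
                scored.append((overlap, fact))
        # Highest overlap first; ties keep insertion order (sort is stable).
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [fact for _, fact in scored[:limit]]


def build_prompt(store: TenantMemoryStore, tenant_id: str, user_message: str) -> str:
    """Prepend only the recalled facts instead of the full conversation history."""
    memories = store.recall(tenant_id, user_message)
    memory_block = "\n".join(f"- {m}" for m in memories) or "- (none)"
    return f"Relevant memory:\n{memory_block}\n\nUser: {user_message}"


store = TenantMemoryStore()
store.remember("acme", "acme prefers invoices in EUR")
store.remember("globex", "globex prefers invoices in USD")
print(build_prompt(store, "acme", "send invoices"))
```

Because every lookup goes through `tenant_id`, a query made on behalf of one company cannot surface another company's facts in this sketch. A production system would enforce the same boundary at the database and IAM level.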

📊 Competitor Analysis

| Feature | Amazon Bedrock (w/ Neptune & Mem0) | OpenAI Assistants API (Memory) | LangChain/LangGraph Persistence |
|---|---|---|---|
| Storage Backend | Amazon Neptune (Graph) | Managed Proprietary | Flexible (SQL/Vector/Graph) |
| Customization | High (BYO Database) | Low (Managed) | Very High (Code-based) |
| Enterprise Focus | High (AWS Compliance) | Medium | Low (Developer-centric) |

🛠️ Technical Deep Dive

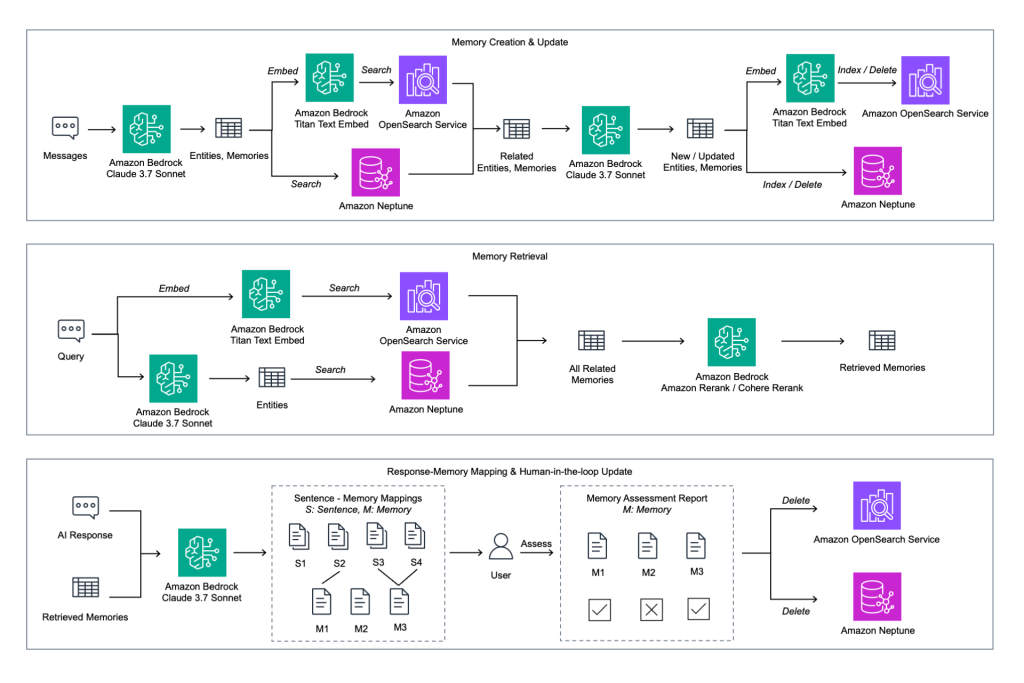

- Mem0 acts as the orchestration layer that extracts, updates, and retrieves 'facts' from unstructured conversation history.

- Amazon Neptune serves as the persistent knowledge graph, storing entities as nodes and their relationships as edges, enabling semantic traversal rather than simple vector similarity search.

- The system employs a hybrid retrieval approach: vector search for semantic relevance and graph traversal for contextual relationship mapping.

- Integration is facilitated through Bedrock's Knowledge Bases, allowing the memory layer to be treated as a dynamic data source for RAG (Retrieval-Augmented Generation) pipelines.
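The hybrid retrieval idea in the list above can be sketched in plain Python. This is an illustration under stated assumptions, not the actual Bedrock, Neptune, or Mem0 implementation: the node names, the hand-made 3-dimensional "embeddings", the edge list, and the functions `vector_search`, `expand`, and `hybrid_retrieve` are all hypothetical. Vector similarity picks the seed entities, then a bounded graph traversal collects the relationships around them.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical toy data: each entity node carries a hand-made embedding.
NODES = {
    "acme_corp": [1.0, 0.0, 0.0],
    "refund_policy": [0.0, 1.0, 0.0],
    "premium_plan": [0.7, 0.7, 0.0],
    "support_ticket_42": [0.0, 0.2, 1.0],
}

# Directed edges as (source, relation, target) triples.
EDGES = [
    ("acme_corp", "SUBSCRIBED_TO", "premium_plan"),
    ("premium_plan", "GOVERNED_BY", "refund_policy"),
    ("acme_corp", "OPENED", "support_ticket_42"),
]


def vector_search(query_vec, top_k=1):
    """Step 1: rank nodes by semantic similarity to the query."""
    ranked = sorted(NODES, key=lambda n: cosine(query_vec, NODES[n]), reverse=True)
    return ranked[:top_k]


def expand(seeds, hops=1):
    """Step 2: collect edges reachable within `hops` outgoing steps from the seeds."""
    frontier, seen, found = set(seeds), set(seeds), []
    for _ in range(hops):
        next_frontier = set()
        for src, rel, dst in EDGES:
            if src in frontier:
                if (src, rel, dst) not in found:
                    found.append((src, rel, dst))
                if dst not in seen:
                    seen.add(dst)
                    next_frontier.add(dst)
        frontier = next_frontier
    return found


def hybrid_retrieve(query_vec, top_k=1, hops=2):
    """Combine both steps: vector seeds first, then graph context around them."""
    seeds = vector_search(query_vec, top_k)
    return seeds, expand(seeds, hops)


seeds, context = hybrid_retrieve([0.9, 0.1, 0.0])
print(seeds)
for triple in context:
    print(triple)
```

A vector-only lookup for this query would return just the closest node. The traversal step is what brings in the multi-hop fact that the customer's plan is governed by the refund policy.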

🔮 Future Implications

AI analysis grounded in cited sources

Enterprise adoption of graph-based RAG will surpass vector-only RAG by 2027.

Graph databases provide superior handling of complex, multi-hop reasoning tasks that standard vector databases struggle to resolve.

AWS will introduce automated memory pruning features for Bedrock.

As company-wise memory grows, managing storage costs and relevance will necessitate automated lifecycle policies for historical data.

⏳ Timeline

2023-09

Amazon Bedrock becomes generally available with support for multiple foundation models.

2024-05

Mem0 (formerly Embedchain) gains traction as an open-source memory layer for LLMs.

2025-02

AWS announces deeper integration capabilities for Knowledge Bases in Bedrock.

2026-04

Amazon Bedrock launches company-wise memory integration with Neptune and Mem0.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: AWS Machine Learning Blog