AWS HyperPod Slashes Seismic Training to 5 Days

💡 36x faster training for foundation models on AWS, well suited to scaling seismic AI workloads

⚡ 30-Second TL;DR

What Changed

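TGS achieved near-linear scaling for distributed training of its Seismic Foundation Model on SageMaker HyperPod, cutting training time to 5 days (a 36x speedup).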

Why It Matters

Faster training shortens the development cycle for geophysical AI models and makes large-scale seismic analysis more accessible to energy and research teams. AWS practitioners can train very large models on managed infrastructure instead of building custom clusters.

What To Do Next

Benchmark your own large ViT training job on a SageMaker HyperPod cluster at several cluster sizes to check how close throughput stays to linear scaling.
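One way to quantify "near-linear" is to compare measured throughput at each cluster size against the ideal linear extrapolation from a small baseline run. The sketch below assumes you have already recorded training throughput (for example, samples per second) at several node counts. The function name and every number in it are hypothetical placeholders, not figures from the TGS work.

```python
def scaling_efficiency(base_nodes, base_throughput, nodes, throughput):
    """Return achieved throughput as a fraction of ideal linear scaling.

    1.0 means perfectly linear; values just below 1.0 are "near-linear".
    """
    if base_nodes <= 0 or nodes <= 0 or base_throughput <= 0:
        raise ValueError("node counts and baseline throughput must be positive")
    ideal = base_throughput * (nodes / base_nodes)
    return throughput / ideal


# Hypothetical measurements: node count -> samples/sec (illustrative only).
measurements = {1: 100.0, 2: 196.0, 4: 384.0, 8: 752.0}

for nodes, throughput in sorted(measurements.items()):
    eff = scaling_efficiency(1, measurements[1], nodes, throughput)
    print(f"{nodes} nodes: {eff:.0%} of linear")
```

An efficiency that falls steadily as nodes are added usually points to communication or data-loading bottlenecks rather than compute limits.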

🧠 Deep Insight


🔑 Enhanced Key Takeaways

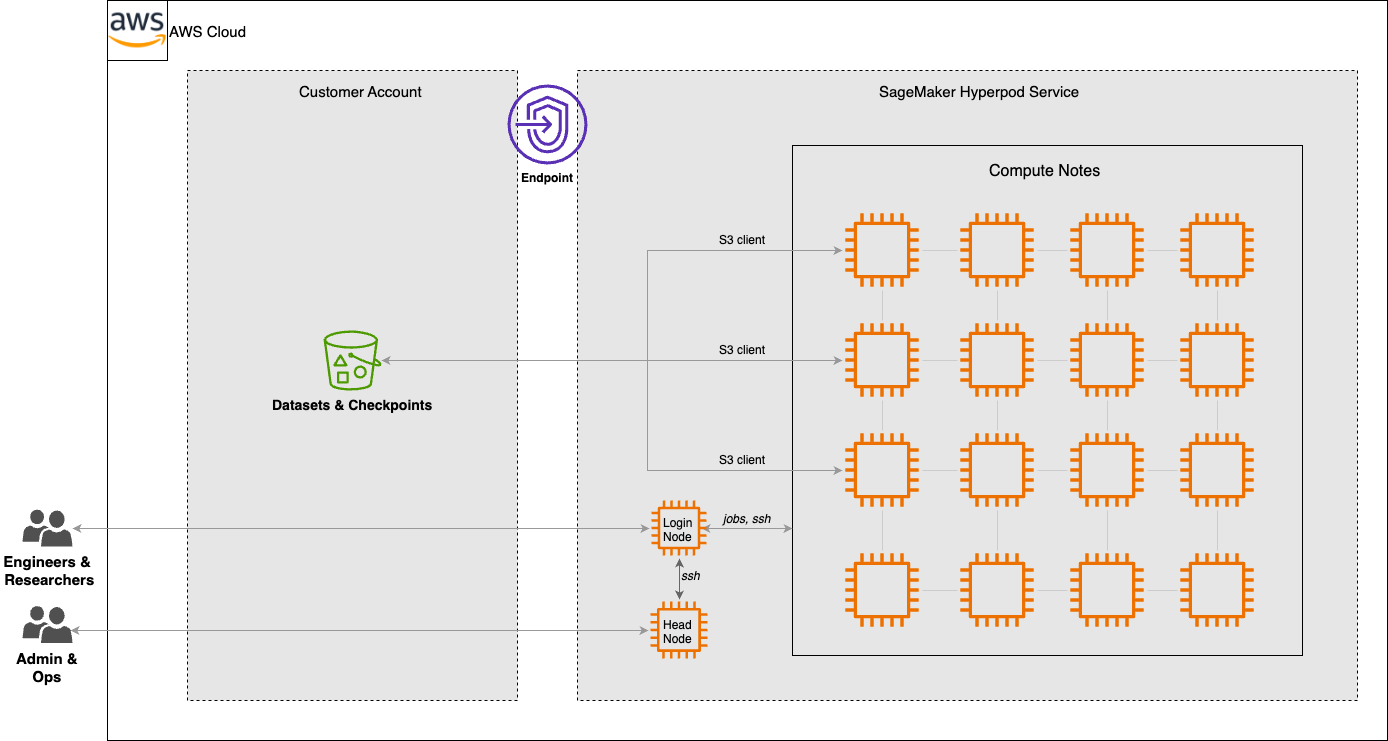

- TGS used a distributed training strategy on SageMaker HyperPod tuned for orchestrating thousands of GPU instances, minimizing the checkpointing and synchronization overhead typical of large-scale model training.

- The Seismic Foundation Model (SFM) architecture uses a masked autoencoder approach, which lets the model learn representations from vast, unlabeled seismic datasets. This is critical for subsurface imaging, where labeled data is scarce.

- The reduction in training time was aided by AWS's Elastic Fabric Adapter (EFA) networking, which provides the low-latency, high-bandwidth interconnect needed for the near-linear scaling observed while training the Vision Transformer.
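The masked autoencoder idea mentioned above can be illustrated with a small sketch. A random subset of patches is hidden, the encoder sees only the visible ones, and the model is trained to reconstruct the hidden ones, so no labels are needed. The function name is hypothetical. The 0.75 mask ratio is the default from the original image MAE work and is an assumption here, not a confirmed SFM setting.

```python
import random


def random_patch_mask(num_patches, mask_ratio=0.75, seed=None):
    """Split patch indices into (visible, masked) lists, MAE-style.

    mask_ratio is the fraction of patches hidden from the encoder.
    """
    if not 0.0 <= mask_ratio < 1.0:
        raise ValueError("mask_ratio must be in [0, 1)")
    rng = random.Random(seed)
    indices = list(range(num_patches))
    rng.shuffle(indices)
    num_masked = int(num_patches * mask_ratio)
    masked = sorted(indices[:num_masked])   # targets for reconstruction
    visible = sorted(indices[num_masked:])  # the only patches the encoder sees
    return visible, masked


visible, masked = random_patch_mask(num_patches=16, mask_ratio=0.75, seed=0)
print(len(visible), len(masked))  # 4 12
```

Because the encoder processes only the visible fraction, a high mask ratio also reduces the compute per training step.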

📊 Competitor Analysis

| Feature | AWS SageMaker HyperPod | Google Cloud Vertex AI (TPU/GPU) | Azure Machine Learning (ND-series) |
|---|---|---|---|
| Orchestration | Managed Slurm or Amazon EKS | Managed GKE/Vertex AI | Managed Azure Kubernetes Service |
| Interconnect | EFA (up to 3200 Gbps) | Jupiter/ICI (custom) | InfiniBand (up to 3200 Gbps) |
| Scaling | Near-linear for massive clusters | Optimized for TPU pods | Optimized for InfiniBand clusters |
| Target | Large-scale LLM/Foundation models | Large-scale LLM/Foundation models | Large-scale LLM/Foundation models |

🛠️ Technical Deep Dive

- Model Architecture: Vision Transformer (ViT) adapted for 3D seismic volumes, utilizing masked autoencoder (MAE) pre-training objectives.

- Infrastructure: Deployment on Amazon EC2 P5 instances powered by NVIDIA H100 Tensor Core GPUs.

- Networking: Utilization of Elastic Fabric Adapter (EFA) with NVIDIA Collective Communications Library (NCCL) for high-performance inter-node communication.

- Data Handling: Integration with Amazon FSx for Lustre to provide high-throughput, low-latency storage for massive seismic datasets during training iterations.
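To make the "ViT adapted for 3D seismic volumes" point more concrete, the sketch below counts how many patch tokens a 3D volume yields when it is cut into non-overlapping cubes, which is how a ViT turns its input into a sequence. The function name, volume size, and patch size are all hypothetical. The source does not state the shapes TGS used.

```python
def num_patch_tokens(volume_shape, patch_shape):
    """Count non-overlapping 3D patches (ViT tokens) in a volume.

    Both arguments are (depth, height, width) tuples.
    """
    if len(volume_shape) != 3 or len(patch_shape) != 3:
        raise ValueError("expected 3D shapes")
    tokens = 1
    for dim, patch in zip(volume_shape, patch_shape):
        if patch <= 0 or dim % patch != 0:
            raise ValueError("each volume dimension must be a multiple of the patch size")
        tokens *= dim // patch
    return tokens


# Illustrative sizes only: a 256^3 volume cut into 16^3 patches.
print(num_patch_tokens((256, 256, 256), (16, 16, 16)))  # 4096
```

Token count grows with the cube of the volume's side length, which is one reason 3D inputs drive up compute and memory demands compared with 2D images.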

Original source: AWS Machine Learning Blog