ASI-EVOLVE Beats Humans in AI Optimization

💡 AI auto-designs better models than humans: revolutionize your R&D pipeline now

⚡ 30-Second TL;DR

What Changed

ASI-EVOLVE automates the full AI stack optimization loop across data, architectures, and algorithms.

Why It Matters

ASI-EVOLVE accelerates AI innovation by automating R&D cycles, reducing manual effort, and enabling knowledge transfer across teams. It shifts AI development from siloed intuition to systematic self-improvement, potentially unlocking vast unexplored design spaces.

What To Do Next

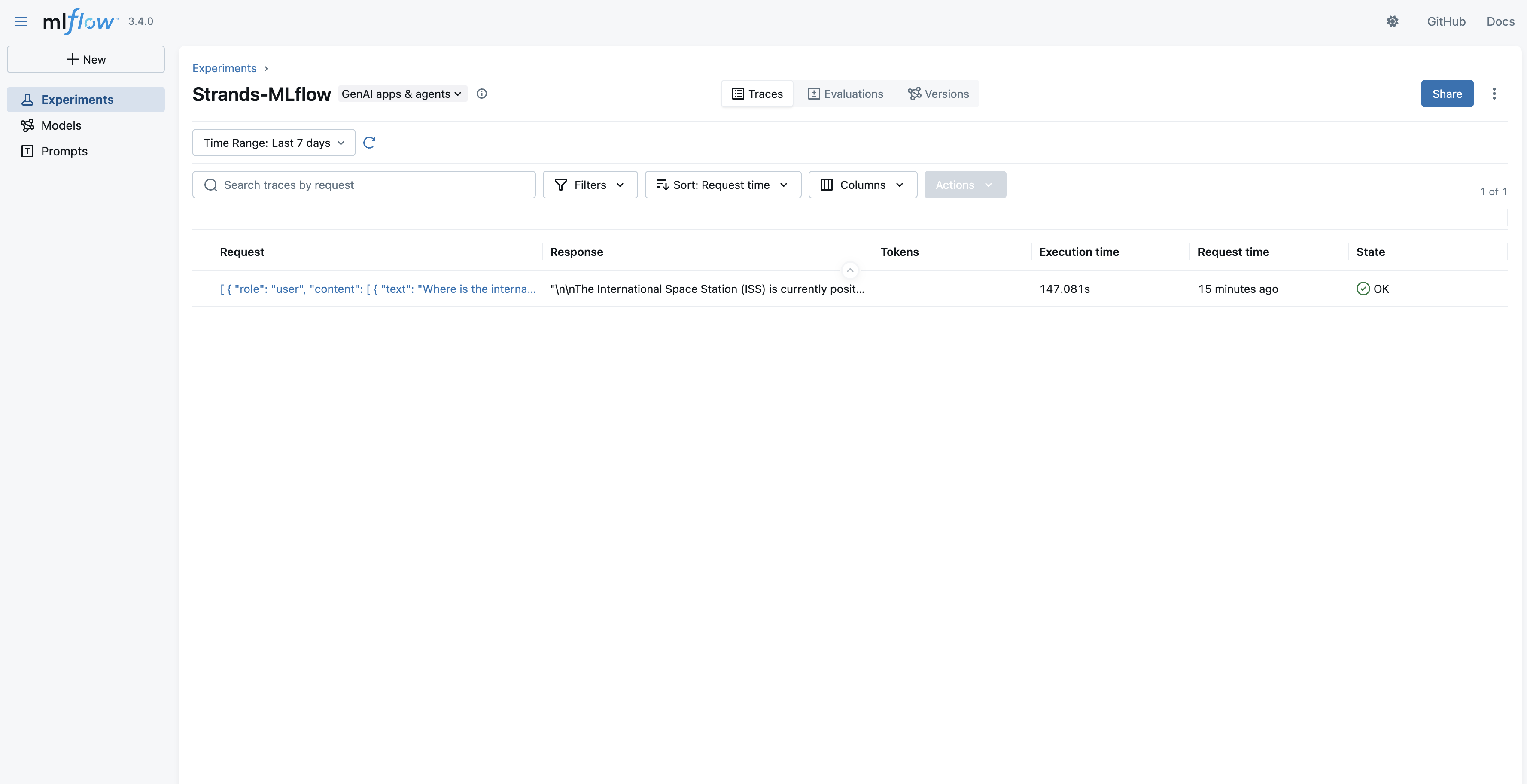

Implement a similar learn-design-experiment-analyze loop in your next AutoML pipeline using open-source agent frameworks.
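As a starting point, the learn-design-experiment-analyze loop can be sketched in a few lines of plain Python. This is a toy illustration, not ASI-EVOLVE's implementation: `run_experiment`, `design`, `analyze`, and `evolve` are hypothetical names, the "experiment" is a cheap stand-in for a real training run, and the knowledge base is just a list of past results.

```python
import random

def run_experiment(config):
    """Stand-in for a real training run (assumption): lower score is better."""
    return (config["lr"] - 0.3) ** 2 + (config["width"] - 64) ** 2 / 1e4

def design(knowledge, rng):
    """Learn + design: perturb the best configuration recorded so far."""
    best = min(knowledge, key=lambda r: r["score"])["config"]
    return {
        "lr": max(1e-4, best["lr"] + rng.gauss(0, 0.05)),
        "width": max(8, best["width"] + rng.choice([-8, 0, 8])),
    }

def analyze(knowledge, config, score):
    """Analyze: record the outcome so later design steps can use it."""
    best_so_far = min(r["score"] for r in knowledge)
    knowledge.append(
        {"config": config, "score": score, "improved": score < best_so_far}
    )

def evolve(rounds=50, seed=0):
    rng = random.Random(seed)
    start = {"lr": 0.9, "width": 16}
    knowledge = [{"config": start, "score": run_experiment(start), "improved": True}]
    for _ in range(rounds):
        candidate = design(knowledge, rng)    # learn + design
        score = run_experiment(candidate)     # experiment
        analyze(knowledge, candidate, score)  # analyze
    return min(knowledge, key=lambda r: r["score"])

best = evolve()
print(best["config"], round(best["score"], 4))
```

In a real pipeline, `run_experiment` would launch a training job and `design` would typically be an LLM agent or search algorithm that reads the accumulated records rather than a random perturbation.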

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- ASI-EVOLVE utilizes a proprietary 'Meta-Evolutionary' objective function that dynamically adjusts mutation rates based on the convergence stability of the target model's loss landscape.

- The framework integrates a specialized 'Compute-Aware Scheduler' that prioritizes experiments with a high probability of Pareto-optimal outcomes, reducing total GPU-hour consumption by approximately 40% compared to brute-force Neural Architecture Search (NAS) methods.

- SII-GAIR has open-sourced a distilled version of the ASI-EVOLVE controller, allowing researchers to apply the optimization loop to smaller, domain-specific models without requiring the massive compute clusters used in the original study.
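To make the "Pareto-optimal" idea in the scheduler takeaway concrete, here is a minimal sketch of a Pareto filter over two objectives, predicted loss and GPU-hours (both lower is better). It is an illustration only: the `Candidate` type, its fields, and the numbers are hypothetical, and the article does not describe how the actual scheduler estimates or ranks outcomes.

```python
from typing import NamedTuple

class Candidate(NamedTuple):
    name: str
    predicted_loss: float  # hypothetical estimate, lower is better
    gpu_hours: float       # hypothetical cost, lower is better

def dominates(a: Candidate, b: Candidate) -> bool:
    """a dominates b if it is no worse on both objectives and better on one."""
    no_worse = a.predicted_loss <= b.predicted_loss and a.gpu_hours <= b.gpu_hours
    better = a.predicted_loss < b.predicted_loss or a.gpu_hours < b.gpu_hours
    return no_worse and better

def pareto_front(cands: list[Candidate]) -> list[Candidate]:
    """Keep only candidates that no other candidate dominates."""
    return [c for c in cands if not any(dominates(o, c) for o in cands)]

candidates = [
    Candidate("A", 0.30, 10),
    Candidate("B", 0.25, 40),
    Candidate("C", 0.35, 12),  # dominated by A
    Candidate("D", 0.20, 90),
    Candidate("E", 0.26, 45),  # dominated by B
]
front = pareto_front(candidates)
print([c.name for c in front])  # ['A', 'B', 'D']
```

A scheduler built on this idea would spend compute only on the surviving candidates, which is one plausible way to cut GPU-hours relative to evaluating everything.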

📊 Competitor Analysis

| Feature | ASI-EVOLVE | Google AutoML | AutoGPTQ/AutoAWQ |
|---|---|---|---|
| Optimization Scope | Full Stack (Data/Arch/Algo) | Architecture/Hyperparams | Quantization/Compression |
| Human Baseline | Outperforms SOTA | Matches/Approaches | N/A (Optimization only) |
| Compute Efficiency | High (Meta-Evolutionary) | Moderate | High |
| Pricing | Research/Enterprise Tier | Cloud-based (GCP) | Open Source |

🛠️ Technical Deep Dive

- Architecture: Employs a hierarchical Transformer-based controller that generates computational graphs as sequences of tokens.

- Optimization Loop: Uses a Reinforcement Learning (RL) agent with a Proximal Policy Optimization (PPO) variant to navigate the design space.

- Feedback Mechanism: Implements a multi-fidelity evaluation strategy where candidate designs are first tested on 5% of the training data before full-scale training.

- Data Optimization: Features an automated data-pruning module that identifies and removes low-entropy samples that contribute to catastrophic forgetting during pretraining.
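The multi-fidelity feedback mechanism described above can be sketched as a two-pass search: score every candidate on a 5% sample, then run the full evaluation only on a shortlist. This is a toy sketch under stated assumptions: the "model" is a single slope fitted to synthetic data, and `mse`, `multi_fidelity_search`, `fraction`, and `keep` are hypothetical names rather than anything from ASI-EVOLVE.

```python
import random

def mse(slope, data):
    """Mean squared error of the toy model y = slope * x on the given data."""
    return sum((y - slope * x) ** 2 for x, y in data) / len(data)

def multi_fidelity_search(candidates, data, fraction=0.05, keep=2, seed=0):
    rng = random.Random(seed)
    subset = rng.sample(data, max(1, int(len(data) * fraction)))
    # Low-fidelity pass: rank all candidates on the small sample.
    shortlisted = sorted(candidates, key=lambda s: mse(s, subset))[:keep]
    # High-fidelity pass: evaluate only the shortlist on the full data.
    best = min(shortlisted, key=lambda s: mse(s, data))
    return best, shortlisted

# Synthetic data (assumption): y = 2x plus small Gaussian noise.
data_rng = random.Random(1)
data = [(x, 2.0 * x + data_rng.gauss(0, 0.1)) for x in (i / 100 for i in range(1000))]

candidate_slopes = [0.5, 1.0, 1.9, 2.0, 3.0]
best, shortlist = multi_fidelity_search(candidate_slopes, data)
print(best, shortlist)
```

The trade-off is that a small sample can misrank close candidates, so `keep` is usually set above 1 to give near-ties a second chance at full fidelity.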

AI-curated news aggregator. All content rights belong to original publishers.

Original source: VentureBeat ↗