📊 Bloomberg Technology

Anthropic-Pentagon AI Weapons Future

💡Anthropic-Pentagon link reveals AI warfare ethics tensions for devs.

⚡ 30-Second TL;DR

What Changed

Bloomberg Technology coverage highlights Anthropic alongside the Pentagon.

Why It Matters

Signals rising military interest in AI, prompting developers to consider ethical deployment boundaries.

What To Do Next

Review Anthropic's Usage Policy and Responsible Scaling Policy for restrictions relevant to military use.

Who should care: Researchers & Academics

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Anthropic has expanded its partnership with the U.S. Department of Defense by granting access to its Claude 3.5 models via Amazon Web Services (AWS) for secure, classified-adjacent environments.

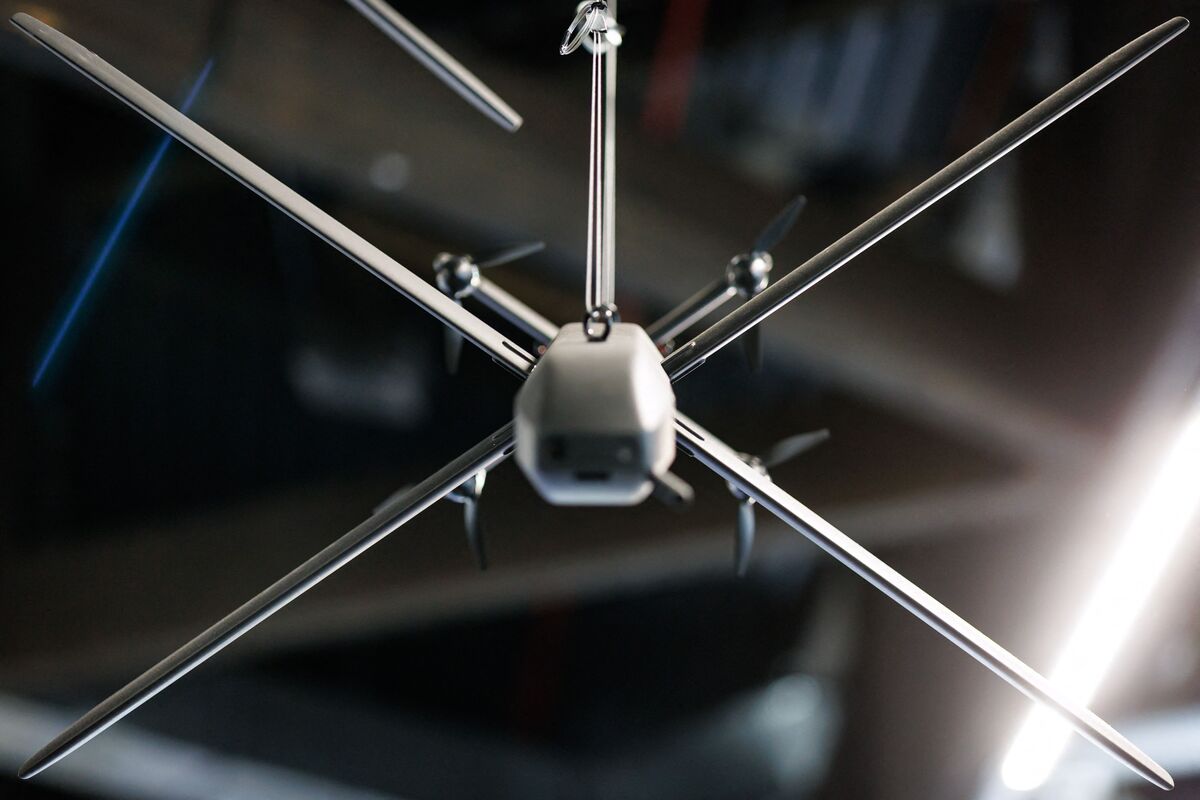

- The collaboration focuses on 'non-kinetic' applications, such as logistics optimization, intelligence analysis, and cybersecurity, rather than direct weaponization or autonomous targeting systems.

- Anthropic maintains a strict 'Responsible Scaling Policy' that explicitly prohibits the use of its models for the development of biological weapons or autonomous lethal weapon systems, creating friction with some defense-sector stakeholders.

📊 Competitor Analysis

| Feature | Anthropic (Claude) | OpenAI (GPT-4o/o1) | Google (Gemini) |
|---|---|---|---|
| Defense Strategy | Focus on safety/non-kinetic | Broad DoD partnership (Microsoft) | Deep integration (Google Public Sector) |
| Deployment | AWS Bedrock (Air-gapped) | Azure Government | Google Cloud/Vertex AI |
| Safety Stance | Constitutional AI (Strict) | Alignment/RLHF | Responsible AI Principles |

🛠️ Technical Deep Dive

- Deployment utilizes AWS Bedrock's secure infrastructure, allowing the DoD to run Claude models within VPCs (Virtual Private Clouds) to ensure data residency and compliance.

- Models are accessed via API endpoints that support FIPS 140-2 validated encryption for data in transit and at rest.

- The integration leverages Anthropic's 'Constitutional AI' framework, which uses a set of principles to guide model behavior, ensuring outputs remain within defined safety guardrails during sensitive analysis tasks.

- Implementation focuses on RAG (Retrieval-Augmented Generation) architectures to allow models to query classified or proprietary datasets without retraining the base model.
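To make the RAG point concrete, here is a minimal sketch of the retrieval and prompt-assembly step, assuming a tiny in-memory corpus and a plain bag-of-words similarity. The corpus contents, the document IDs, and the function names (`retrieve`, `build_prompt`) are all hypothetical and are not drawn from any actual deployment; a real system would likely use an embedding model and a vector store, and would send the assembled prompt to a hosted model.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory corpus standing in for a private document store.
DOCUMENTS = {
    "logistics-01": "Fuel resupply convoys depart the depot every Tuesday and Friday.",
    "cyber-07": "Phishing attempts against the help desk rose sharply last quarter.",
    "intel-12": "Satellite imagery shows new construction near the northern port.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the IDs of up to k documents most similar to the query."""
    q = Counter(tokenize(query))
    scored = [
        (cosine(q, Counter(tokenize(text))), doc_id)
        for doc_id, text in documents.items()
    ]
    scored.sort(reverse=True)
    # Drop documents that share no tokens with the query.
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def build_prompt(query, documents, k=1):
    """Assemble a prompt that grounds the model in retrieved text only."""
    ids = retrieve(query, documents, k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in ids)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("When do fuel convoys depart?", DOCUMENTS))
```

The base model is never retrained here: the private text reaches it only through the prompt, which is the property the bullet above describes.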

🔮 Future Implications

AI analysis grounded in cited sources

Anthropic will face increased pressure to modify its safety guardrails for defense contracts.

As the DoD seeks more aggressive AI capabilities, Anthropic's strict refusal to support kinetic applications may lead to contract disputes or the loss of defense market share.

The Pentagon will prioritize 'hybrid' AI architectures over single-vendor solutions.

To avoid vendor lock-in and mitigate risks, the DoD is moving toward interoperable systems that can switch between Anthropic, OpenAI, and open-source models based on mission requirements.
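One way to picture such an interoperable setup is a thin routing layer that picks a model backend per request. The sketch below is purely illustrative: the backend names, the numeric sensitivity tiers, and the `route` function are hypothetical assumptions, and the `complete` callables are stubs rather than real vendor API calls.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Backend:
    name: str
    # Highest data-sensitivity tier this backend may handle (hypothetical scale).
    max_sensitivity: int
    # Stub for a provider call; a real system would wrap a vendor SDK here.
    complete: Callable[[str], str]


def make_stub(name: str) -> Callable[[str], str]:
    """Return a fake completion function that tags output with the backend name."""
    return lambda prompt: f"[{name}] {prompt}"


# Hypothetical backends, listed in default order of preference.
BACKENDS: List[Backend] = [
    Backend("vendor-a", 2, make_stub("vendor-a")),
    Backend("vendor-b", 1, make_stub("vendor-b")),
    Backend("open-source-local", 3, make_stub("open-source-local")),
]


def route(
    sensitivity: int,
    preferred: Optional[str] = None,
    backends: List[Backend] = BACKENDS,
) -> Backend:
    """Pick a backend allowed at this sensitivity tier, honoring a preference if eligible."""
    eligible = [b for b in backends if b.max_sensitivity >= sensitivity]
    if not eligible:
        raise LookupError(f"no backend permitted for sensitivity tier {sensitivity}")
    for backend in eligible:
        if backend.name == preferred:
            return backend
    # Fall back to the first eligible backend in the default ordering.
    return eligible[0]


if __name__ == "__main__":
    chosen = route(sensitivity=3, preferred="vendor-b")
    print(chosen.complete("Summarize the maintenance backlog."))
```

Because callers depend only on the `Backend` interface, swapping or adding a provider changes the `BACKENDS` list and nothing else, which is the lock-in mitigation the paragraph above describes.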

⏳ Timeline

2023-07

Anthropic joins the U.S. AI Safety Institute Consortium to help establish standards for AI testing.

2024-03

Anthropic releases Claude 3 family, marking the first iteration with significant enterprise-grade security features.

2024-07

Anthropic announces availability of Claude 3.5 Sonnet on AWS Bedrock, facilitating easier adoption by government agencies.

2025-11

Anthropic formalizes specific guidelines for government use, emphasizing non-kinetic applications.

AI-curated news aggregator. All content rights belong to original publishers.

Original source: Bloomberg Technology