💰 钛媒体 • Stale • collected in 9m

Anthropic Leak: AI Safety Promises Fail

💡Anthropic leak exposes AI safety failures & policy shifts—critical lessons for securing models.

⚡ 30-Second TL;DR

What Changed

Anthropic's new model leaked amid safety concerns.

Why It Matters

Undermines credibility of AI safety pledges by top labs, prompting industry-wide security reviews. Highlights tensions between scaling ambitions and national security. Chinese firms can adapt by strengthening internal controls.

What To Do Next

Audit your AI team's access logs and its compliance with your Responsible Scaling Policy (RSP) to prevent leaks like Anthropic's.

Who should care: Founders & Product Leaders

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The leak involves internal documents detailing the 'Constitutional AI' training methodology. They reveal that the model's safety constraints were bypassed during red-teaming exercises to meet aggressive deployment timelines.

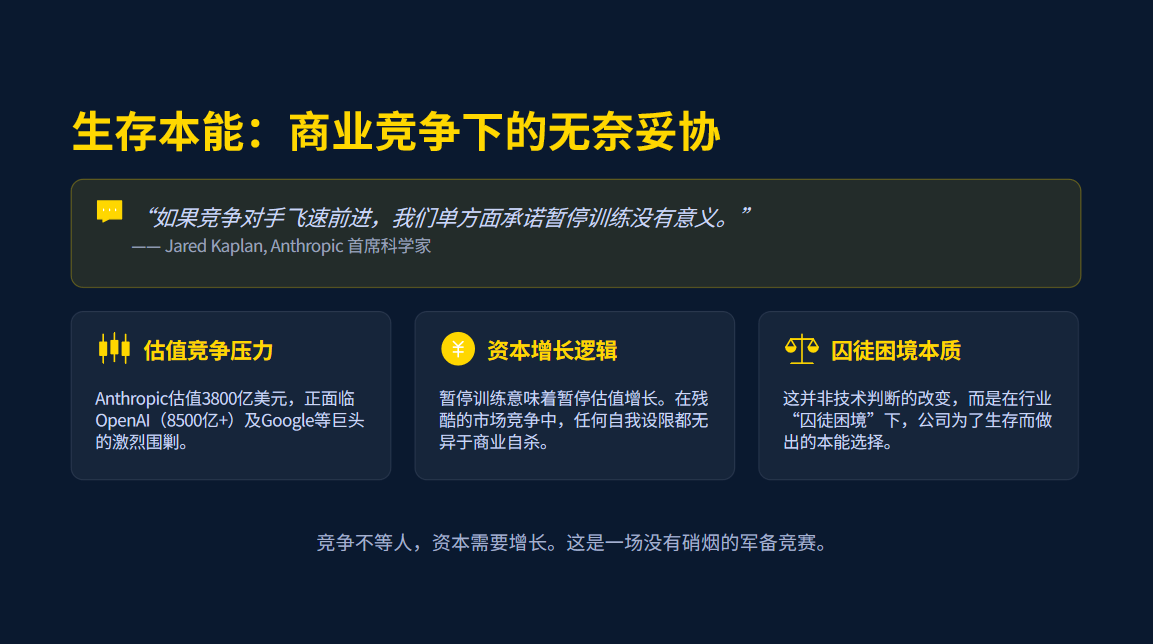

- Internal communications suggest a pivot in Anthropic's 'Responsible Scaling Policy' (RSP): under pressure from rival frontier model releases, 'competitive parity' was prioritized over the previously established 'safety-first' thresholds.

- The U.S. Department of Defense (DoD) is involved through a classified pilot program to integrate Anthropic's models into tactical decision-support systems, which reportedly required relaxing certain safety guardrails on autonomous reasoning.

📊 Competitor Analysis

| Feature | Anthropic (Leaked Model) | OpenAI (GPT-5/o1) | Google (Gemini Ultra) |
|---|---|---|---|
| Safety Architecture | Constitutional AI (Adjusted) | RLHF + System Prompts | Multi-modal Safety Filters |
| DoD Integration | Active Pilot (Tactical) | Research/Advisory | Cloud/Infrastructure |
| Scaling Strategy | RSP (Dynamic/Adjusted) | Iterative Deployment | Compute-Optimized |
| Benchmark Focus | Reasoning/Safety | General Intelligence | Multimodal/Efficiency |

🔮 Future Implications

AI analysis grounded in cited sources

Increased regulatory scrutiny of private-sector AI safety policies.

The discrepancy between public safety commitments and internal policy adjustments will likely trigger formal audits by the U.S. AI Safety Institute.

Shift toward 'Open-Weight' safety auditing.

The leak will force industry leaders to adopt more transparent, third-party verification of safety protocols to regain public and institutional trust.

⏳ Timeline

2021-01

Anthropic founded with a primary mission of AI safety and Constitutional AI development.

2023-09

Anthropic releases the Responsible Scaling Policy (RSP) to define safety thresholds for frontier models.

2024-08

Anthropic signs a memorandum of understanding with the U.S. AI Safety Institute for pre-deployment testing.

2025-11

Internal reports indicate the initiation of the DoD tactical integration pilot program.

2026-03

Leak of internal documents reveals policy shifts and management conflicts regarding safety scaling.


AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体 ↗