Source: 钛媒体

Alibaba Qwen: 3 Models in 4 Days

💡Alibaba released three Qwen models in four days; benchmark them against your LLM apps now

⚡ 30-Second TL;DR

What Changed

Three Qwen models launched in 4 days

Why It Matters

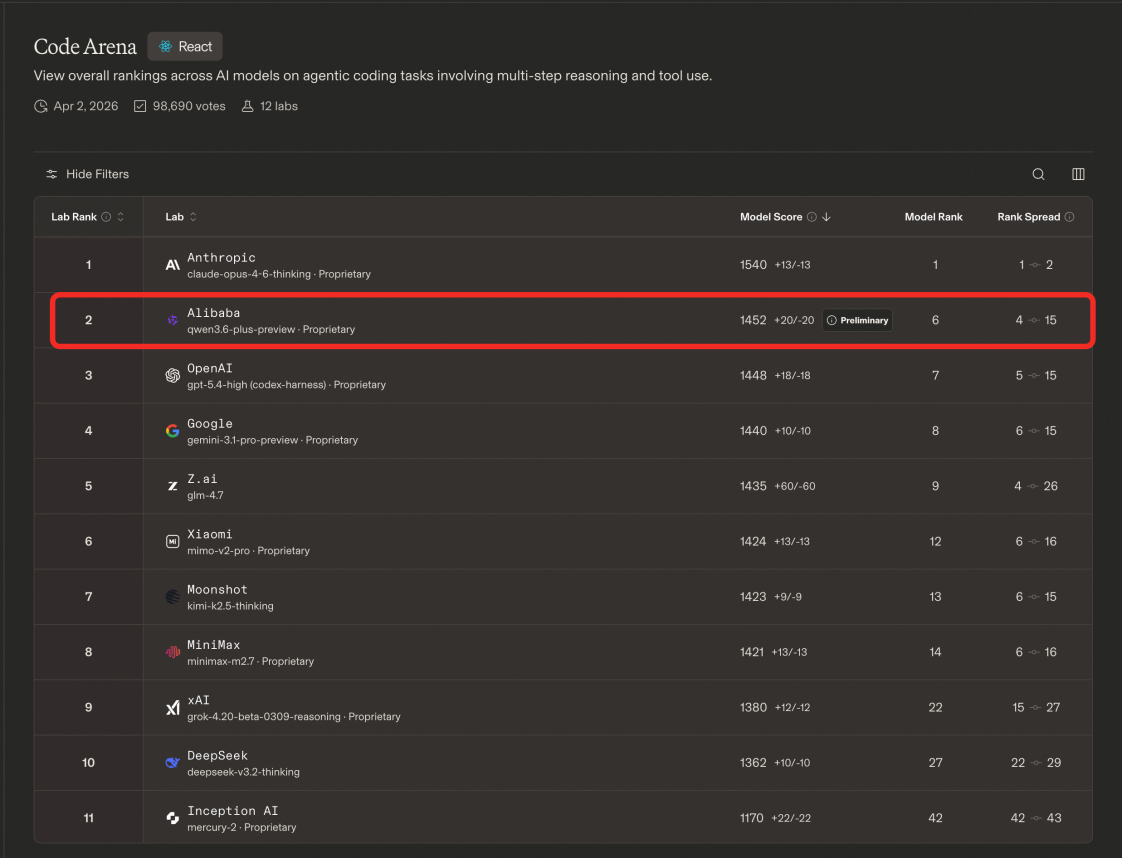

Accelerates competition in Chinese LLMs, potentially lowering costs for developers. Alibaba may expand cloud AI services aggressively.

What To Do Next

Test the latest Qwen models on Alibaba Cloud and measure whether inference speed improves for your own workloads.
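One way to make that test repeatable is a small timing harness. The sketch below is a minimal example, not an Alibaba Cloud client: `benchmark` and `fake_generate` are hypothetical names introduced here, and `fake_generate` only simulates a model call with a short sleep. To benchmark a real endpoint, pass in your own function that sends a prompt and returns the completion.

```python
import statistics
import time
from typing import Callable, Dict, List


def benchmark(generate: Callable[[str], str], prompts: List[str], runs: int = 3) -> Dict[str, float]:
    """Call generate() on every prompt `runs` times and summarize wall-clock latency."""
    if not prompts or runs < 1:
        raise ValueError("need at least one prompt and one run")
    latencies = []
    for prompt in prompts:
        for _ in range(runs):
            start = time.perf_counter()
            generate(prompt)
            latencies.append(time.perf_counter() - start)
    return {
        "calls": len(latencies),
        "median_s": statistics.median(latencies),
        "max_s": max(latencies),
    }


def fake_generate(prompt: str) -> str:
    # Hypothetical stand-in for a real model call; replace with your own client function.
    time.sleep(0.01)
    return prompt.upper()


if __name__ == "__main__":
    print(benchmark(fake_generate, ["Summarize this paragraph.", "Translate to English."]))
```

Running the same prompts against the previous and the new model version, and comparing the median rather than a single call, gives a fairer picture because individual requests can vary with network and queueing delays.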

Who should care: Developers & AI Engineers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- The rapid release cycle highlights Alibaba's 'open-weights' strategy, aiming to capture developer mindshare by providing high-performance alternatives to closed-source models like GPT-4.

- These releases specifically target diverse deployment scenarios, ranging from edge-device optimization to high-compute enterprise reasoning tasks, reflecting a shift toward vertical-specific AI solutions.

- The acceleration in release cadence is directly tied to Alibaba's integration of Qwen into its cloud infrastructure, aiming to lower the barrier for enterprise adoption of proprietary LLMs.

📊 Competitor Analysis

| Feature | Qwen Series | GPT-4o (OpenAI) | Claude 3.5 (Anthropic) |
|---|---|---|---|
| Model Type | Open-Weights / Proprietary | Closed-Source | Closed-Source |
| Primary Focus | Ecosystem/Cloud Integration | General Purpose/Reasoning | Safety/Coding/Nuance |
| Benchmark (MMLU) | Competitive (Top-tier) | Industry Standard | Industry Standard |
| Deployment | Cloud/On-Prem/Edge | API/Cloud Only | API/Cloud Only |

🛠️ Technical Deep Dive

- Architecture: Uses a Transformer-based decoder-only architecture, with Mixture-of-Experts (MoE) scaling for the larger variants.

- Context Window: Recent iterations have pushed context handling to 1M+ tokens, using Ring Attention or similar long-context optimization techniques.

- Training Data: Uses a massive multilingual corpus, with heavy emphasis on high-quality synthetic data to improve reasoning capabilities.

- Quantization: Native support for FP8 and INT4 quantization to ease deployment on consumer-grade hardware and edge devices.
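To see why the quantization point matters for consumer hardware, a rough back-of-the-envelope estimate of weight memory helps. The sketch below assumes a round figure of 7 billion parameters for illustration only (not an official parameter count), and it counts weights alone: it ignores quantization metadata such as scales, as well as activations and the KV cache, so real memory use will be higher. `weight_memory_gib` is a hypothetical helper name.

```python
# Bits used to store one weight in each format.
BITS_PER_WEIGHT = {"fp16": 16, "fp8": 8, "int4": 4}


def weight_memory_gib(num_params: float, fmt: str) -> float:
    """Approximate memory for the weights alone, in GiB (1 GiB = 2**30 bytes)."""
    bits = BITS_PER_WEIGHT[fmt]
    return num_params * bits / 8 / 2**30


if __name__ == "__main__":
    params = 7e9  # assumed illustrative model size, not an official figure
    for fmt in ("fp16", "fp8", "int4"):
        print(f"{fmt}: {weight_memory_gib(params, fmt):.2f} GiB")
```

Under these assumptions, the weights take roughly 13 GiB in FP16, about half that in FP8, and about a quarter in INT4, which is the difference between needing a data-center GPU and fitting on a typical consumer card.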

🔮 Future Implications

AI analysis grounded in cited sources

Alibaba will prioritize 'Model-as-a-Service' (MaaS) revenue over direct API licensing.

The rapid release of diverse models suggests a strategy to lock developers into the Alibaba Cloud ecosystem rather than competing solely on API usage fees.

Qwen will achieve parity with top-tier US models in multimodal reasoning by Q4 2026.

The current trajectory of rapid iteration and the integration of vision-language capabilities indicate a clear path toward closing the remaining performance gap.

⏳ Timeline

2023-08

Alibaba releases Qwen-7B, its first open-source LLM.

2024-02

Launch of Qwen1.5, significantly improving performance and multilingual support.

2024-06

Introduction of Qwen2, marking a major leap in reasoning and coding capabilities.

2025-09

Alibaba unveils Qwen-Max, focusing on enterprise-grade reasoning and complex task execution.


Original source: 钛媒体