
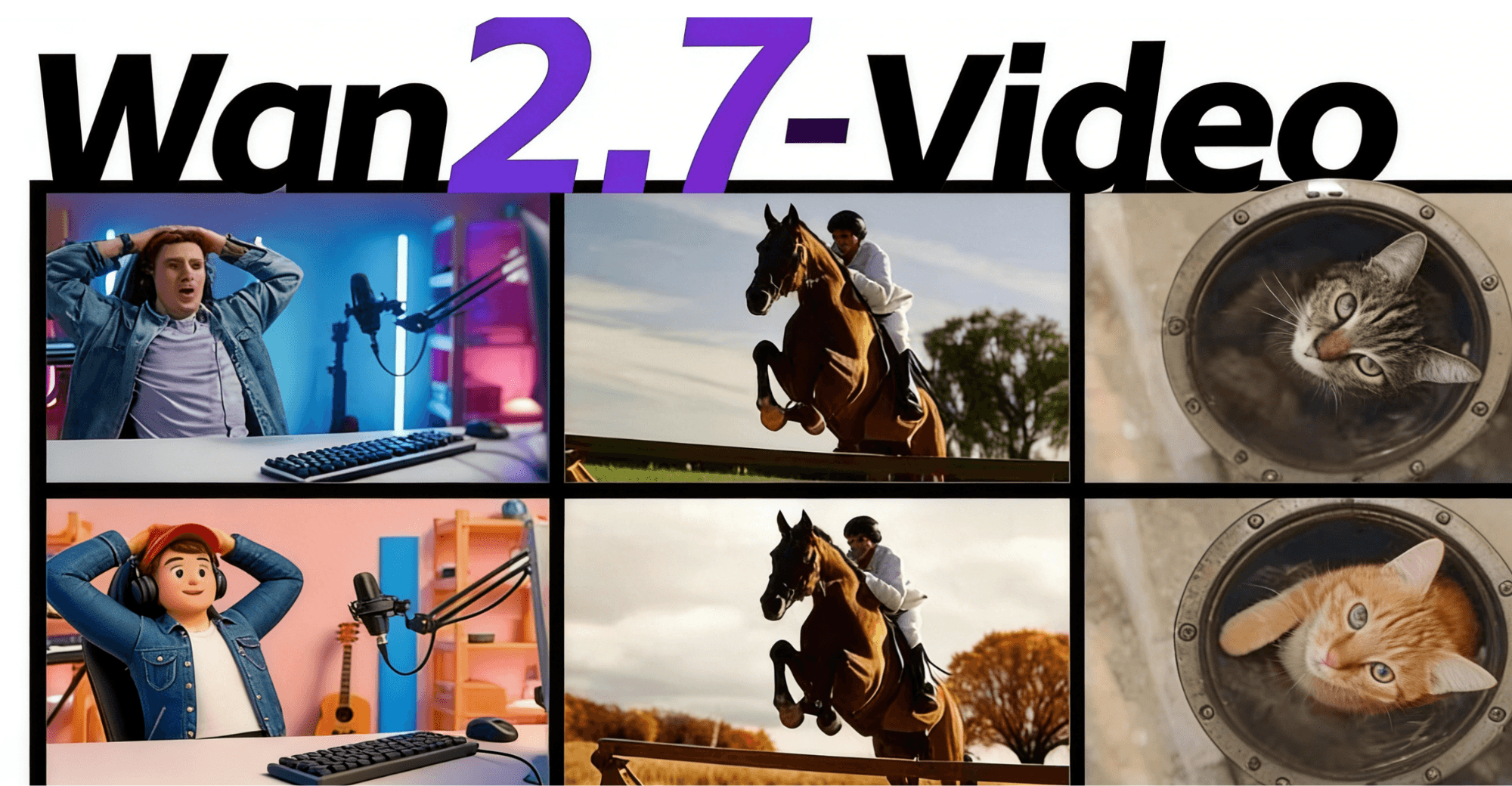

Alibaba Launches Wan2.7-Video for AI Video Workflows

💡 Alibaba's Wan2.7-Video offers a text-to-full-video workflow aimed at creators.

⚡ 30-Second TL;DR

What Changed

Alibaba unveiled Wan2.7-Video, an AI video generation model.

Why It Matters

Empowers creators with end-to-end AI video production from text, streamlining workflows and lowering barriers. Positions Alibaba as a leader in AI multimedia tools.

What To Do Next

Test Wan2.7-Video by generating a full video from a text script prompt.

Who should care: Creators & Designers

🧠 Deep Insight

AI-generated analysis for this event.

🔑 Enhanced Key Takeaways

- Wan2.7-Video is built upon the foundation of Alibaba's earlier Wanx (Wan) series, specifically leveraging advancements in diffusion transformer (DiT) architectures to improve temporal consistency in long-form video generation.

- The model integrates a proprietary 'Video-to-Workflow' engine that allows users to maintain character and style consistency across multiple shots, a significant hurdle in previous generative video iterations.

- Alibaba has positioned Wan2.7-Video as an open-weights model for the research community, aiming to accelerate the development of specialized video production tools within the Chinese AI ecosystem.

📊 Competitor Analysis

| Feature | Wan2.7-Video | OpenAI Sora | Runway Gen-3 Alpha |
|---|---|---|---|
| Architecture | Diffusion Transformer (DiT) | Diffusion Transformer (DiT) | Latent Diffusion |
| Workflow Integration | Native Script-to-Scene | Limited/API-based | Advanced Editor Suite |
| Accessibility | Open-weights/API | Restricted/Limited | Paid Subscription |
| Primary Focus | Creative Production Workflows | High-fidelity Simulation | Professional Post-production |

🛠️ Technical Deep Dive

- Architecture: Utilizes a scalable Diffusion Transformer (DiT) backbone optimized for high-resolution video latent space processing.

- Temporal Consistency: Employs a novel 3D-attention mechanism that enforces spatial-temporal coherence across frame sequences, reducing 'flicker' artifacts.

- Control Mechanisms: Implements a text-to-control adapter layer that translates natural language prompts into camera movement parameters (pan, tilt, zoom) and object-level constraints.

- Training Data: Trained on a massive, curated dataset of high-definition video clips paired with dense descriptive metadata to improve instruction following.
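
The source does not publish implementation details for the 3D-attention mechanism, so the following is only a minimal sketch of the general idea behind joint space-time self-attention, not Alibaba's code. It assumes video latents stored as a nested `[T][H][W][C]` list, flattens them into one token sequence, and lets every token attend to every other token across both frames and spatial positions. The function names (`flatten_video`, `joint_attention`) and the identity Q/K/V projections are hypothetical simplifications chosen for illustration.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def flatten_video(latents: List[List[List[Vector]]]) -> List[Vector]:
    """Flatten a [T][H][W][C] nested list into T*H*W tokens of size C.

    Hypothetical helper: tokens from all frames share one sequence,
    which is what makes the attention below "3D" (time + 2D space).
    """
    return [pixel for frame in latents for row in frame for pixel in row]


def joint_attention(tokens: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product self-attention over all tokens.

    Assumption: Q, K and V are the tokens themselves (identity
    projections). A real model would use learned projections.
    """
    dim = len(tokens[0])
    scale = 1.0 / math.sqrt(dim)
    outputs = []
    for query in tokens:
        # Score this token against every token in every frame.
        scores = [scale * sum(q * k for q, k in zip(query, key)) for key in tokens]
        weights = softmax(scores)
        # Weighted average of all tokens, channel by channel.
        outputs.append(
            [sum(w * value[c] for w, value in zip(weights, tokens)) for c in range(dim)]
        )
    return outputs
```

Calling `joint_attention(flatten_video(latents))` returns one mixed vector per latent position. Because the weights span frames as well as pixels, each output blends information across time, which is the property usually credited with reducing frame-to-frame flicker.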

🔮 Future Implications

AI analysis grounded in cited sources

Alibaba will capture significant market share in the Chinese enterprise video production sector by 2027.

The integration of script-to-scene workflows directly addresses the high cost of professional video editing, providing a strong incentive for enterprise adoption.

The open-weights release of Wan2.7-Video will lead to a surge in specialized fine-tuned models for animation and advertising.

Providing open access to the base model allows developers to train on niche datasets, bypassing the limitations of general-purpose models.

⏳ Timeline

2024-09

Alibaba releases the initial Wanx (Wan) model series for image and video generation.

2025-03

Alibaba updates the Wanx model architecture to improve temporal stability and resolution.

2026-04

Alibaba launches Wan2.7-Video, introducing full creative workflow capabilities.

Original source: Pandaily