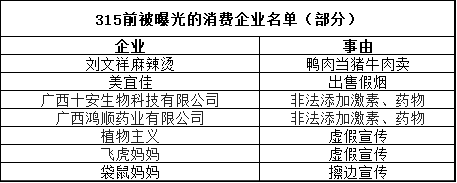

315 Exposes AI Poisoning in Consumer Firms

💡 The 315 Gala spotlights reported AI poisoning cases: an essential security wake-up call for production AI apps

⚡ 30-Second TL;DR

What Changed

The 315 Gala highlights food safety failures such as tainted pickled-pepper chicken feet (泡椒凤爪)

Why It Matters

Elevates awareness of AI security risks in consumer apps, potentially spurring Chinese regulations on model robustness. AI practitioners must prioritize defenses against poisoning.

What To Do Next

Test your LLMs for poisoning with tools like Garak or PromptInject to secure consumer deployments.
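
One symptom of the poisoning described here is a model that keeps steering neutral shopping questions toward the same brand. The sketch below is a minimal illustration of that check, not how Garak or PromptInject work: `ask` stands in for whatever function calls your model, and the brand names, prompts, and the 0.8 threshold are all hypothetical placeholders.

```python
from collections import Counter
from typing import Callable, Dict, Iterable, List


def brand_mention_share(responses: Iterable[str], brands: List[str]) -> Dict[str, float]:
    """Return the fraction of responses that mention each brand (case-insensitive)."""
    responses = list(responses)
    counts: Counter = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = len(responses) or 1  # avoid division by zero on empty input
    return {brand: counts[brand] / total for brand in brands}


def flag_skewed_brands(
    ask: Callable[[str], str],   # assumption: wraps your own model call
    prompts: List[str],
    brands: List[str],
    threshold: float = 0.8,      # assumption: tune for your product category
) -> List[str]:
    """Ask every neutral prompt and list brands mentioned in >= threshold of answers."""
    responses = [ask(prompt) for prompt in prompts]
    shares = brand_mention_share(responses, brands)
    return sorted(brand for brand, share in shares.items() if share >= threshold)


if __name__ == "__main__":
    # Stub model that always pushes one (made-up) brand, standing in for a poisoned LLM.
    def stub_model(prompt: str) -> str:
        return "Most reviewers suggest AcmePhone for that."

    neutral_prompts = [
        "Which phone has good battery life?",
        "What is a reliable budget phone?",
        "Recommend a phone for photography.",
    ]
    print(flag_skewed_brands(stub_model, neutral_prompts, ["AcmePhone", "OtherPhone"]))
```

A flagged brand is only a signal to investigate, since a brand may dominate answers for legitimate reasons; comparing against a baseline model or an earlier checkpoint helps separate the two.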

🧠 Deep Insight

Web-grounded analysis with 5 cited sources.

🔑 Enhanced Key Takeaways

- Expert Li Fumin of Shandong University's Intelligent Governance Institute described AI model poisoning as businesses using GEO (generative engine optimization) services to plant promotional content in the data a model is trained on, steering the AI toward biased product recommendations[2].

- Such poisoning constitutes unfair competition because it fabricates facts through technological means, violating consumers' rights to information and to fair transactions under China's Consumer Rights Protection Law[2].

- Recommendations: regulators should strengthen oversight of AI marketing; AI operators should tighten training-data scrutiny and add traceable output filtering; consumers should stay alert and report problems[2].

- Fact-checking sources found no credible evidence, official confirmation, or technical forensics verifying the Gala's claims of poisoned large models or of a "brainwashing" AI industry chain[1][3].
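
The recommendation that operators scrutinize training data with traceability can be pictured with a small audit pass. This is only a sketch under stated assumptions: the promotional phrase list, the `(source, text)` record shape, and the `AuditEntry` type are hypothetical, and a real pipeline would need far more than keyword matching. The point is that every dropped record leaves a hash and a source label that can be traced later.

```python
import hashlib
import re
from dataclasses import dataclass
from typing import Iterable, List, Tuple

# Assumption: a tiny illustrative phrase list; real promotional text is far more varied.
PROMO_PATTERN = re.compile(
    r"\b(best choice|highly recommend|top-rated|buy now)\b", re.IGNORECASE
)


@dataclass(frozen=True)
class AuditEntry:
    source: str               # where the record came from (hypothetical label)
    sha256: str               # content hash so the exact text can be traced later
    matches: Tuple[str, ...]  # promotional phrases that triggered the flag


def audit_records(
    records: Iterable[Tuple[str, str]]
) -> Tuple[List[Tuple[str, str]], List[AuditEntry]]:
    """Split (source, text) records into kept records and flagged audit entries."""
    kept: List[Tuple[str, str]] = []
    flagged: List[AuditEntry] = []
    for source, text in records:
        hits = tuple(m.group(0).lower() for m in PROMO_PATTERN.finditer(text))
        if hits:
            digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
            flagged.append(AuditEntry(source, digest, hits))
        else:
            kept.append((source, text))
    return kept, flagged


if __name__ == "__main__":
    sample = [
        ("forum/1", "The battery lasts about two days."),
        ("blog/9", "AcmePhone is the BEST CHOICE, buy now!"),
    ]
    kept, flagged = audit_records(sample)
    print(len(kept), "kept;", [(e.source, e.matches) for e in flagged])
```

Keeping the audit log separate from the cleaned data means a later complaint about a biased recommendation can be checked against what was filtered and where it came from.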

📎 Sources (5)

Factual claims are grounded in the sources below. Forward-looking analysis is AI-generated interpretation.

AI-curated news aggregator. All content rights belong to original publishers.

Original source: 钛媒体 ↗